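🚀 Why deploy OpenClaw with Docker?

- Environment isolation: no need to install a pile of assorted libraries on your host machine.
- One-command startup: set up docker-compose and let it handle the rest.
- Cross-platform: on Linux, macOS, or Windows, the experience is the same as long as Docker is available.

The real reason, though, is that running an Ubuntu virtual machine in VMware was a miserable experience: kept running all the time, it ate far too much memory 🫠!!! So in this post I'll show how to deploy OpenClaw with Docker and connect it to a local Ollama model, so that using OpenClaw costs nothing. I'll also walk through a few OpenClaw use cases: trending news lookup, weather lookup, and stock market lookup.

Reference: https://openclaws.io/zh/blog/openclaw-docker-deployment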

🛠️ Prerequisites

- At least 4 GB of free memory
- At least 10 GB of free disk space
- A working network connection
- Docker Desktop and Git installed
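The tool requirements in the list above can be checked from a terminal before going further. This is a minimal sketch, not part of the original setup: `missing_cmds` and `check_prereqs` are helper names made up for illustration, and the memory and disk requirements are not checked here because the commands for those differ per platform.

```shell
# Print the name of every listed command that is not found on PATH.
missing_cmds() {
  local cmd
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

# Check the two tools this guide relies on: docker and git.
check_prereqs() {
  local missing
  missing=$(missing_cmds docker git | tr '\n' ' ')
  if [ -n "$missing" ]; then
    echo "Missing tools: $missing" >&2
    return 1
  fi
  echo "docker and git found"
}
```

Running `check_prereqs` after sourcing the functions either confirms both tools are present or lists what still needs installing.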

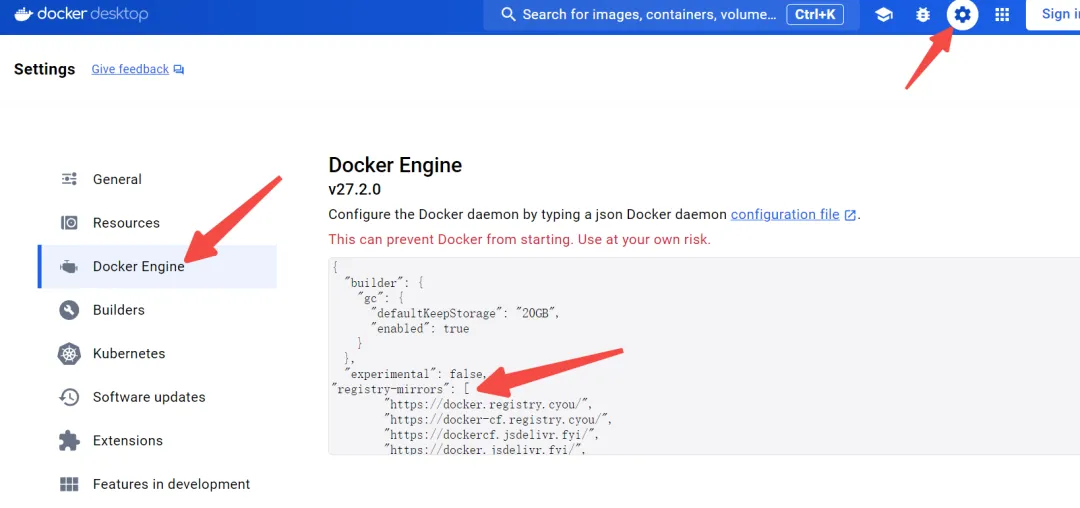

Docker Desktop can be downloaded from the official site: https://www.docker.com/products/docker-desktop/. Note that you need to add registry mirrors to Docker Desktop's configuration:

    "registry-mirrors": [
      "https://docker.registry.cyou/",
      "https://docker-cf.registry.cyou/",
      "https://dockercf.jsdelivr.fyi/",
      "https://docker.jsdelivr.fyi/",
      "https://dockertest.jsdelivr.fyi/",
      "https://mirror.aliyuncs.com/",
      "https://dockerproxy.com/",
      "https://mirror.baidubce.com/",
      "https://docker.m.daocloud.io/",
      "https://docker.nju.edu.cn/",
      "https://docker.mirrors.sjtug.sjtu.edu.cn/",
      "https://docker.mirrors.ustc.edu.cn/",
      "https://mirror.iscas.ac.cn/",
      "https://docker.rainbond.cc/",
      "https://jq794zz5.mirror.aliyuncs.com"
    ]

📦 Deployment steps

Follow the official guide at https://docs.openclaw.ai/install/docker for the deployment.

Step 1: Run the Ollama container

To run OpenClaw for free, we use a local model.

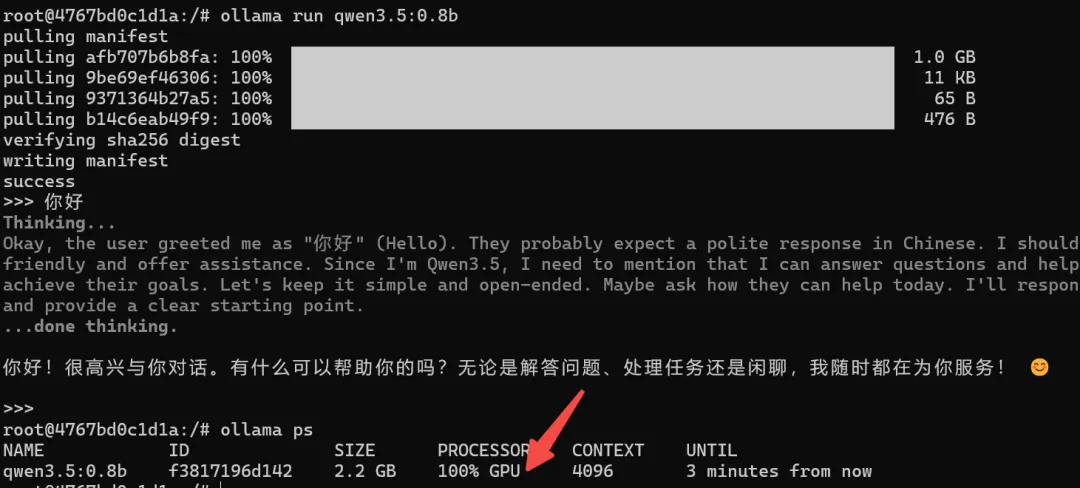

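    docker run -d -v ollama:/root/.ollama -p 11434:11434 -e DISPLAY=host.docker.internal:0.0 --pid=host --gpus=all --name ollama ollama/ollama
    # Enter the container as root
    docker exec -u root -it ollama /bin/bash
    # Pull a model; see https://ollama.com/library/qwen3.5
    ollama pull qwen3.5:0.8b
    # Test the model
    ollama run qwen3.5:0.8b
    # In testing, qwen3.5:0.8b gave poor results, so switch to deepseek-r1:8b
    ollama run deepseek-r1:8b
    # Start the server (the ollama/ollama image normally starts it already,
    # so this is only needed if the server is not running)
    ollama serve

To check whether Ollama is using the GPU, look at the percentage in the output of the command below. If the GPU share is greater than 0, the GPU is in use.

    ollama ps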
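The GPU check can also be scripted instead of read by eye. The sketch below assumes the `ollama ps` table reports the split in a `PROCESSOR` column with values such as `100% GPU`, `100% CPU`, or `48%/52% CPU/GPU`. `uses_gpu` is a made-up helper name, and it only looks for that text pattern on the lines after the header.

```shell
# Read `ollama ps` output on stdin and succeed (exit 0) if any loaded
# model reports a GPU share, e.g. "100% GPU" or "48%/52% CPU/GPU".
uses_gpu() {
  awk 'NR > 1 && /% (CPU\/)?GPU/ { found = 1 } END { exit !found }'
}
```

From the host, something like `docker exec ollama ollama ps | uses_gpu && echo "GPU in use"` would then report the result for the container started above. A model has to be loaded at the time (for example right after `ollama run`), otherwise the table is empty and the check fails.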

Step 2: Clone the project

    git clone https://github.com/openclaw/openclaw.git
    # To update the project later, run:
    # git pull
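If you rerun the setup often, the clone-or-update choice can be folded into one helper. This is only a sketch: `sync_action` and `sync_repo` are illustrative names, and using `--ff-only` for the update is my assumption (it refuses to merge if the local checkout has diverged), not something the original setup requires.

```shell
# Decide what to do with a checkout directory: "pull" if it already
# contains a Git repository, otherwise "clone".
sync_action() {
  if [ -d "$1/.git" ]; then
    echo pull
  else
    echo clone
  fi
}

# Clone the repository on the first run, fast-forward it on later runs.
sync_repo() {
  local url=$1 dir=$2
  case $(sync_action "$dir") in
    pull)  git -C "$dir" pull --ff-only ;;
    clone) git clone "$url" "$dir" ;;
  esac
}
```

With these defined, `sync_repo https://github.com/openclaw/openclaw.git openclaw` covers both the first run and later updates.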

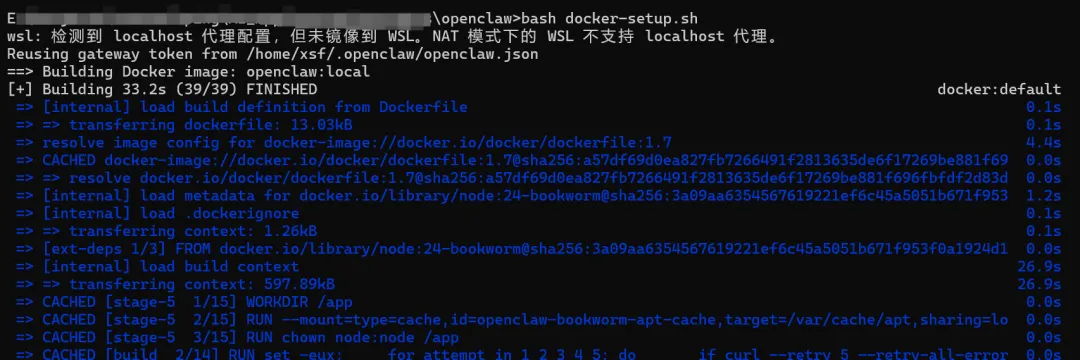

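Step 3: Build or run the container

    # Turn on your proxy/VPN first
    cd openclaw
    bash docker-setup.sh
    # To start the container again later:
    # docker compose up -d openclaw-gateway

Step 4: Configure OpenClaw

When step 3 finishes, the setup script prompts you to configure OpenClaw.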

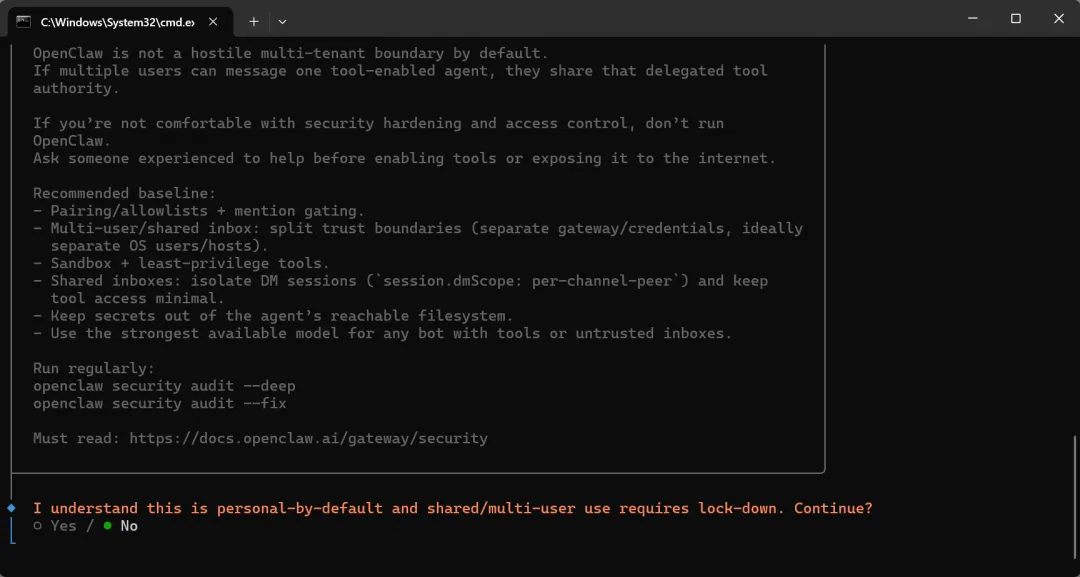

These are the choices I made in the OpenClaw configuration prompts.


    ◆ I understand this is personal-by-default and shared/multi-user use requires lock-down. Continue?
    │ ● Yes / ○ No
    └
    ◆ Setup mode
    │ ● QuickStart (Configure details later via openclaw configure.)
    │ ○ Manual
    ◆ Config handling
    │ ○ Use existing values
    │ ● Update values
    │ ○ Reset
    └
    ◆ Model/auth provider
    │ ○ Anthropic
    │ ○ BytePlus
    │ ○ Chutes
    │ ○ Cloudflare AI Gateway
    │ ○ Copilot
    │ ○ Custom Provider
    │ ○ DeepSeek
    │ ○ Google
    │ ○ Hugging Face
    │ ○ Kilo Gateway
    │ ○ LiteLLM
    │ ○ Microsoft Foundry
    │ ○ MiniMax
    │ ○ Mistral AI
    │ ○ Moonshot AI (Kimi K2.5)
    │ ● Ollama (Cloud and local open models)
    │ ○ OpenAI
    │ ○ OpenCode
    │ ○ OpenRouter
    │ ○ Qianfan
    │ ○ Qwen (Alibaba Cloud Model Studio)
    │ ○ SGLang
    │ ○ Synthetic
    │ ○ Together AI
    ◆ Ollama base URL
    │ http://host.docker.internal:11434
    └
    ◆ Ollama mode
    │ ○ Cloud + Local
    │ ● Local (Local models only)
    └
    ◆ Default model
    │ ○ Keep current (ollama/qwen3.5:0.8b)
    │ ● Enter model manually
    │ ○ ollama/bge-m3:latest
    │ ○ ollama/glm-4.7-flash
    │ ○ ollama/qllama/bge-large-zh-v1.5:latest
    │ ○ ollama/qwen3.5:0.8b
    │ ○ ollama/smartwang/bge-large-zh-v1.5-f32.gguf:latest
    └
    ◆ Default model
    │ ollama/qwen3.5:0.8b█
    └
    ◆ Select channel (QuickStart)
    │ ○ Telegram (Bot API)
    │ ○ WhatsApp (QR link)
    │ ○ Discord (Bot API)
    │ ○ IRC (Server + Nick)
    │ ○ Google Chat (Chat API)
    │ ○ Slack (Socket Mode)
    │ ○ Signal (signal-cli)
    │ ○ iMessage (imsg)
    │ ○ LINE (Messaging API)
    │ ○ Mattermost (plugin)
    │ ○ Nextcloud Talk (self-hosted)
    │ ○ Feishu/Lark (飞书)
    │ ○ BlueBubbles (macOS app)
    │ ○ Zalo (Bot API)
    │ ○ Synology Chat (Webhook)
    │ ○ Nostr (NIP-04 DMs)
    │ ○ Microsoft Teams (Teams SDK)
    │ ○ Matrix (plugin)
    │ ○ Zalo (Personal Account)
    │ ○ Tlon (Urbit)
    │ ○ Twitch (Chat)
    │ ● Skip for now (You can add channels later via `openclaw channels add`)
    ◆ Search provider
    │ ○ Brave Search
    │ ● DuckDuckGo Search (experimental) (Free web search fallback with no API key required · key-free)
    │ ○ Exa Search
    │ ○ Firecrawl Search
    │ ○ Gemini (Google Search)
    │ ○ Grok (xAI)
    │ ○ Kimi (Moonshot)
    │ ○ Perplexity Search
    │ ○ Tavily Search
    │ ○ Skip for now
    └
    ◆ Configure skills now? (recommended)
    │ ○ Yes / ● No
    └
    ◆ Enable hooks?
    │ ◻ Skip for now
    │ ◼ 🚀 boot-md (Run BOOT.md on gateway startup)
    │ ◻ 📎 bootstrap-extra-files
    │ ◼ 📝 command-logger (Log all command events to a centralized audit file)
    │ ◼ 💾 session-memory (Save session context to memory when /new or /reset command is issued)
    └

Step 5: Test OpenClaw

Before opening the console, configure OpenClaw so that it does not require device pairing.

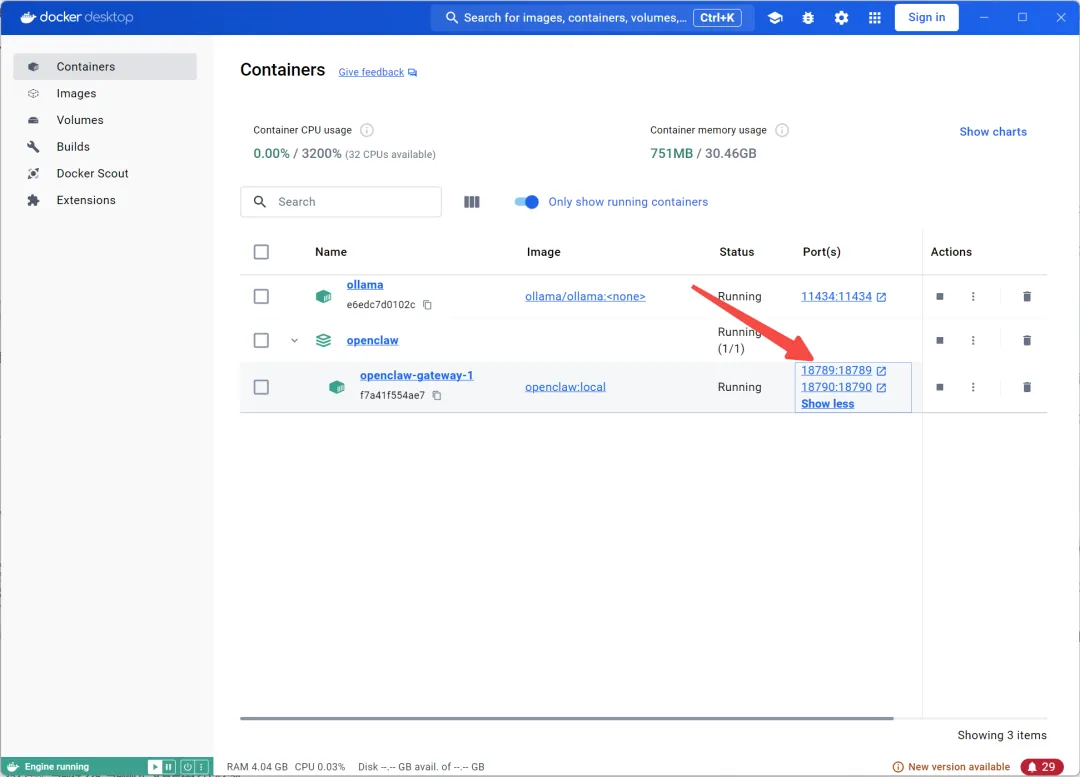

    openclaw gateway --port 18789 --allow-unconfigured

Click the container's port and you are taken straight to the OpenClaw console, where you can chat with OpenClaw.

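If the console page does not load right away, it can help to wait until the gateway port actually answers. The helper below is a rough sketch with a made-up name, `wait_for_http`. It assumes `curl` is installed and that port 18789 from the command above is published on `localhost`. It treats any HTTP response, even an error status, as a sign that the gateway is listening.

```shell
# Poll a URL once per second until the server answers or the attempts
# run out. Any HTTP response (even an error status) counts as "up".
wait_for_http() {
  local url=$1
  local tries=${2:-30}
  local i=0
  while [ "$i" -lt "$tries" ]; do
    if curl -sS -o /dev/null --max-time 2 "$url" 2>/dev/null; then
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  return 1
}
```

For example, `wait_for_http http://localhost:18789/ 60 && echo "gateway is answering"` waits up to about a minute before giving up.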

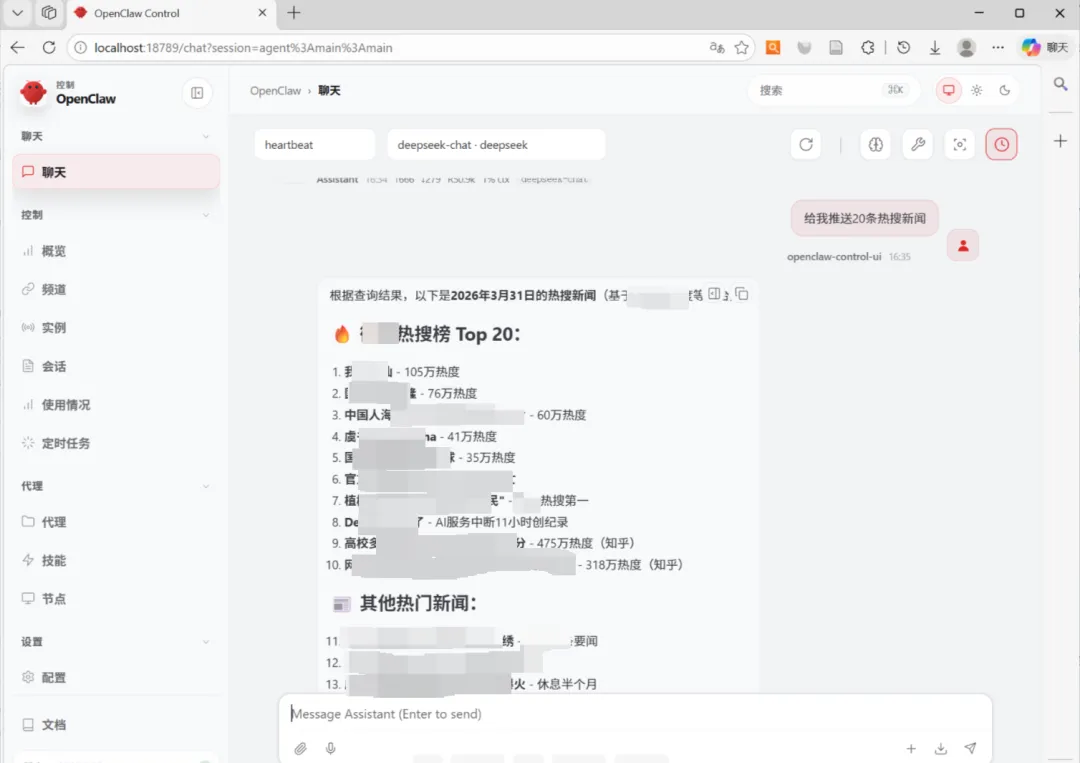

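Because the search provider selected above is DuckDuckGo, a proxy/VPN must be turned on for the web search feature to work.

Miscellaneous

1. Install skills

    docker exec -u root -it openclaw-openclaw-gateway-1 /bin/bash
    # Install a clawhub skill: npm i -g [skill-name]
    npm i -g clawhub

2. Switch models

    docker exec -it openclaw-openclaw-gateway-1 /bin/bash
    # Add the API at the corresponding step of the wizard
    openclaw onboard

🎁 Use cases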

Trending news lookup

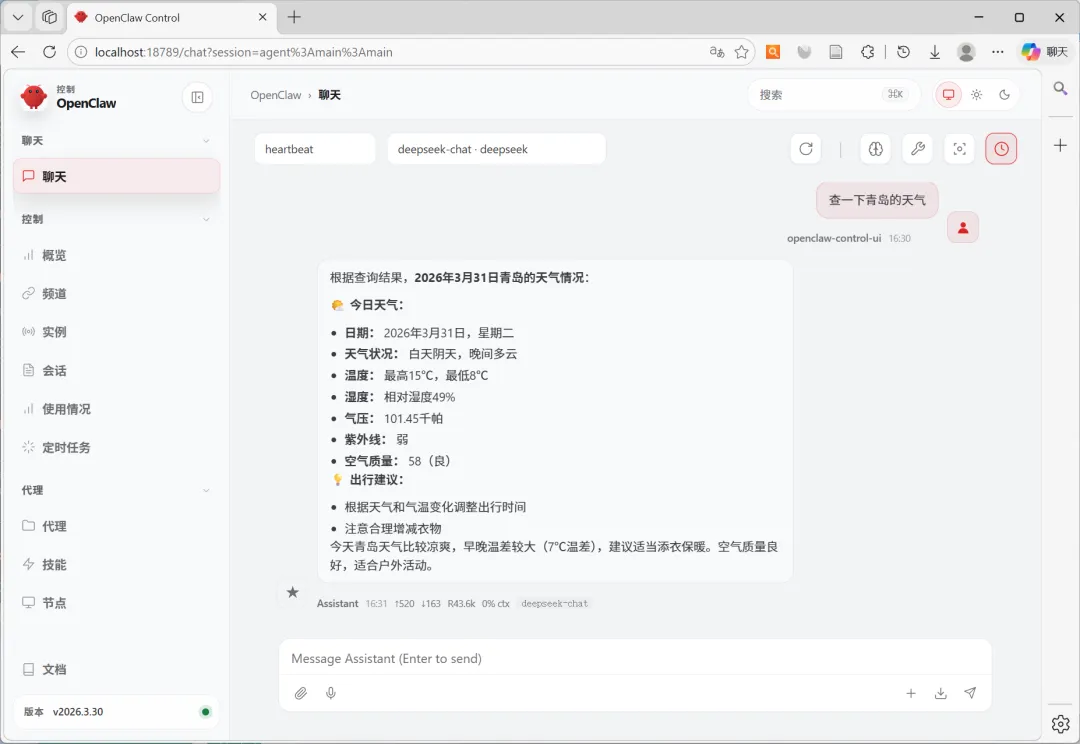

Weather lookup

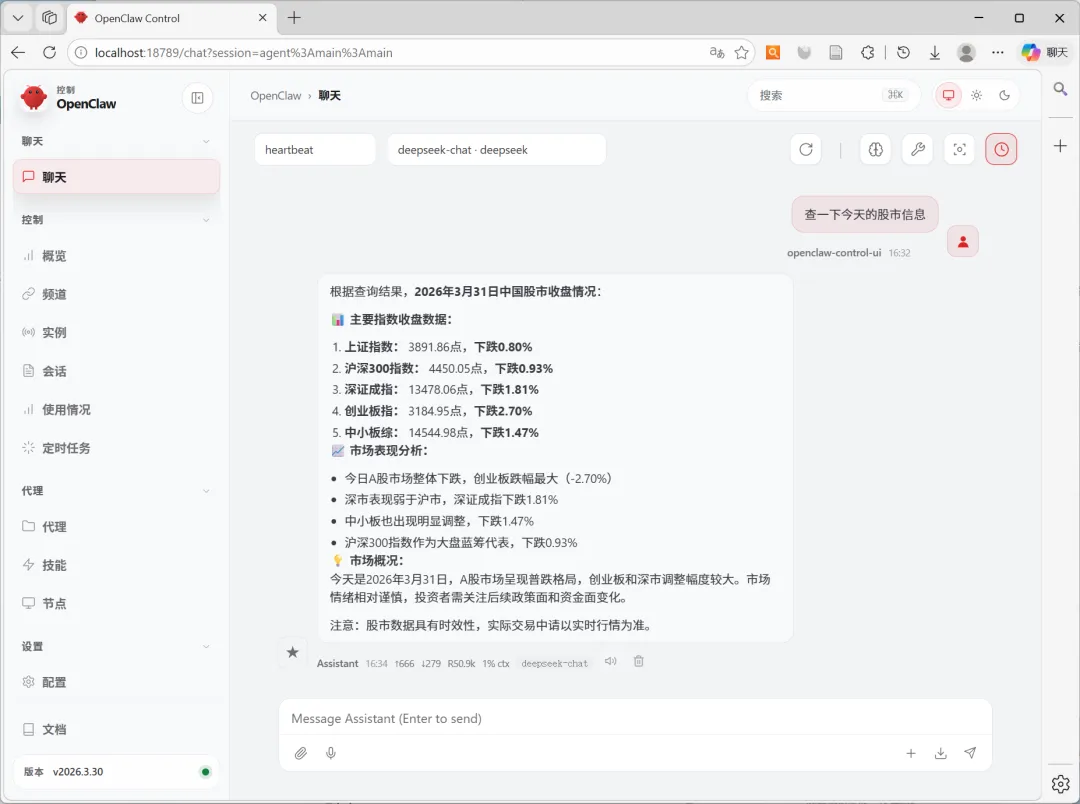

Stock market lookup

夜雨聆风
