Do NOT Say A Word First When Receiving These Calls

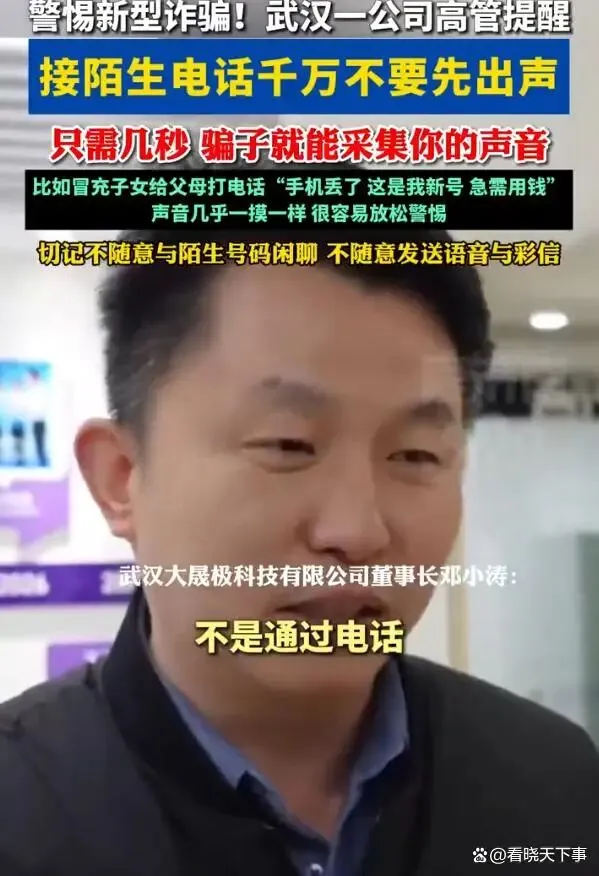

Advances in AI voice cloning have made it possible to replicate a person’s voice from as little as five seconds of audio, and criminals are increasingly relying on the technology to scam unsuspecting individuals.

Voice is a unique biometric identifier, much like a fingerprint. But as AI-powered voice synthesis becomes more accessible, the barrier to stealing and cloning someone’s voice has dropped dramatically.

According to industry experts in the voice recognition field, just five to ten seconds of recorded speech is now enough to extract and recreate a person’s voice with high accuracy.

These samples can be harvested from everyday sources: casual phone conversations, social media voice messages, video clips, or even a brief “hello” when picking up a call from an unknown number.

Cybersecurity specialists warn that open-source voice models allow almost anyone to clone a voice in minutes and make it say whatever the attacker chooses.

How It Works

A new wave of fraud is spreading rapidly: criminals obtain a short voice sample of a target, use AI to clone it, then call the target’s friends and relatives pretending to be in distress.

The approach exploits one of the most fundamental forms of human trust: recognizing a loved one’s voice.

Real-World Cases

In one case reported in the United States, a mother identified only as Rachel received a call that appeared to come from her college-aged daughter. The caller ID showed her daughter’s name and photo.

The voice on the line, crying, said she had been in an accident and was being kidnapped. The scammers kept Rachel on the phone for nearly two hours, instructing her to wire her entire savings—$3,270—through Walmart and Walgreens.

Only when her real daughter called on another line did Rachel realize she had been scammed.

Cybersecurity experts note that as little as two seconds of a person’s voice can be enough to create a convincing fake.

The scale of AI-powered fraud has reached industrial proportions. According to Vyntra’s 2026 fraud trends report, global scam losses totaled $442 billion over the past year. The report found that 70 percent of adults worldwide have experienced at least one scam attempt, with 23 percent ultimately losing money.

The International AI Safety Report 2026 notes that research suggests listeners mistake AI-generated voices for real speakers up to 80 percent of the time.

The report warns that synthetic content is now a direct threat to fraud controls, as cloned voices have been used to persuade victims to transfer money by exploiting trust-based approval processes.

How to Protect Yourself

Authorities and cybersecurity experts recommend the following precautions:

- Don’t answer calls from unknown numbers

If you do pick up, avoid speaking first. Even saying “Hello? Who is this?” can provide enough audio for a clone.

- Limit public voice recordings

Avoid posting voice messages, audio clips, or videos with clear speech on public social media accounts. Voice messages sent through apps or text can also be intercepted.

- Use a family code word

Establish a secret word or phrase with family members that can be used to verify identity in an emergency. If someone calls claiming to be a relative in distress, ask for the code word before taking any action.

- Verify through other channels

If you receive a call from a family member or colleague asking for money, hang up and call them back on a number you know is theirs. Do not rely on caller ID, which can be spoofed.

- Watch for red flags

AI-generated voices may lack natural breathing sounds, have a flat emotional tone, or feature unnatural pauses. If something sounds “off,” trust your instinct and verify.

- Be suspicious of urgency

Scammers create time pressure to prevent victims from thinking clearly. Any unexpected request for money, especially one that demands immediate action, should be treated with extreme caution.

Sources: GMW, CCTV News, Hubei Daily, UN Office on Drugs and Crime, Hogan Lovells, The Fintech Times, WDTV
