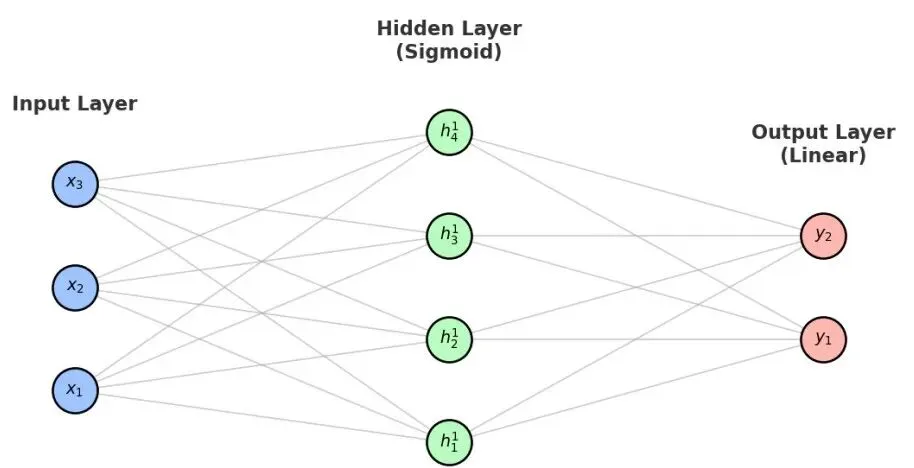

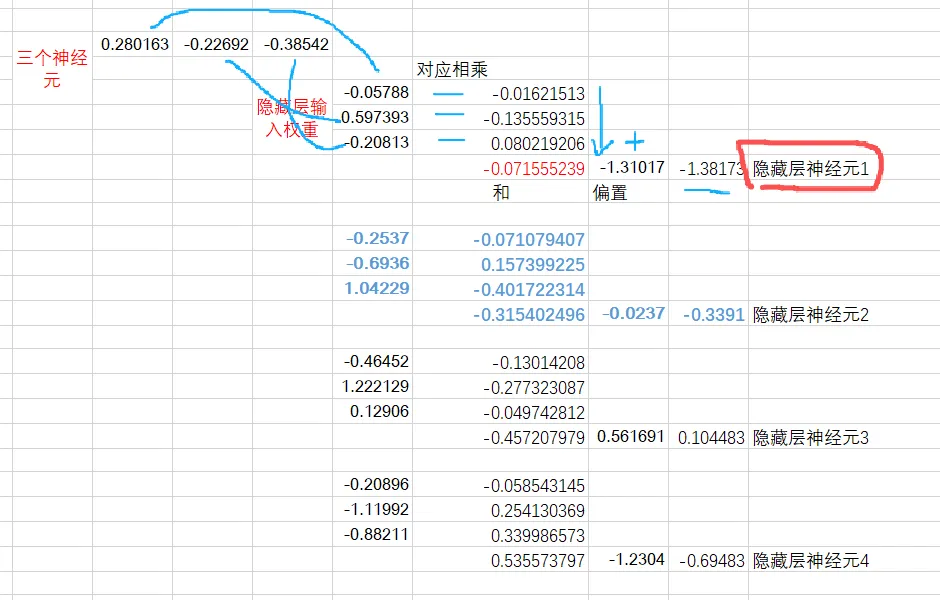

Input layer (5 samples, 3 values each):

    [[ 0.28016255 -0.22691806 -0.38542348]
     [-0.62147375 -0.04227238  0.83113161]
     [-0.72065683  1.34015883 -1.15663046]
     [-0.93960809 -0.10327481 -0.38309941]
     [ 0.25712053 -0.47601475  0.92826205]]

Input -> hidden weights (3 x 4):

    [[-0.05787758 -0.25370774 -0.46452347 -0.20896135]
     [ 0.59739324 -0.69363904  1.22212876 -1.1199213 ]
     [-0.20813264  1.04228812  0.12906015 -0.88211173]]

Hidden-layer bias:

    [-1.31017352 -0.02368456  0.56169127 -1.23040283]

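Hidden layer: each hidden neuron's value is computed from the values of the three input neurons (for the first sample: 0.28016255, -0.22691806, -0.38542348) and the input -> hidden weights, plus the hidden-layer bias. Result of the input x weights + bias step (`pre_hidden`):

    [[-1.38172876 -0.33908706  0.10448329 -0.69482905]
     [-1.47244297  1.02958851  0.90598409 -1.78634804]
     [-0.22712928 -1.97597702  2.38502504 -1.56040853]
     [-1.23775146 -0.11296324  0.82250329 -0.58046492]
     [-1.80262466  1.20878089 -0.01969693 -1.56986287]]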
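To make the matrix product concrete, here is a minimal dependency-free sketch that recomputes the first row of the result by hand. The names `x`, `w_ih`, `b_h` and `pre_activation` are illustrative and not from the original code; the numbers are copied from the printouts above.

```python
# First input sample and the input -> hidden parameters, copied from the printouts.
x = [0.28016255, -0.22691806, -0.38542348]
w_ih = [
    [-0.05787758, -0.25370774, -0.46452347, -0.20896135],
    [0.59739324, -0.69363904, 1.22212876, -1.1199213],
    [-0.20813264, 1.04228812, 0.12906015, -0.88211173],
]
b_h = [-1.31017352, -0.02368456, 0.56169127, -1.23040283]


def pre_activation(x, weights, bias):
    """Weighted sum of the inputs plus the bias, one value per hidden neuron."""
    return [
        sum(x[i] * weights[i][j] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]


pre_hidden_row = pre_activation(x, w_ih, b_h)
print(pre_hidden_row)
```

Up to rounding, the four values agree with the first row of the printed matrix, which is what `np.dot(inputs, weights[0]) + weights[1]` computes for every sample at once.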

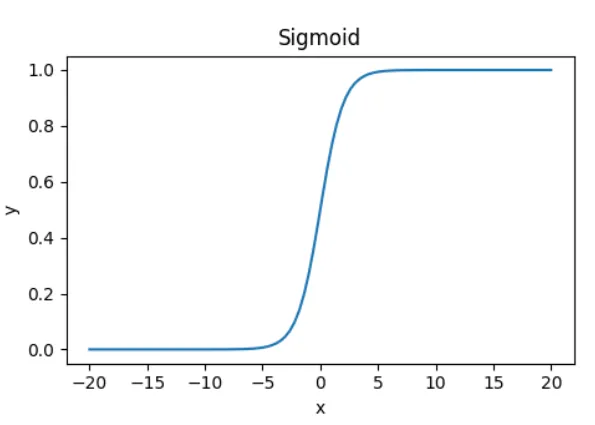

The next step is the nonlinear activation, which maps every hidden-layer value into the range 0 to 1: `hidden = 1/(1+np.exp(-pre_hidden))`
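As a small illustration of this activation, the sketch below applies the same sigmoid formula to the first row of the pre-activation values using only the standard library. The helper name `sigmoid` is an assumption for readability; the original code writes the formula inline.

```python
import math


def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z)); the result lies strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


# First row of the printed pre-activation matrix.
pre_hidden_row = [-1.38172876, -0.33908706, 0.10448329, -0.69482905]
hidden_row = [sigmoid(z) for z in pre_hidden_row]
print(hidden_row)
```

Negative pre-activations end up below 0.5 and positive ones above 0.5, so only the third neuron of this sample is more than half "on".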

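The output layer is then computed from the activated hidden values, with no further activation applied:

    pred_out = np.dot(hidden, weights[2]) + weights[3]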

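Hidden -> output weights (4 x 2):

    [[ 0.08112603  1.25424186]
     [-1.38500489 -0.86261395]
     [-0.1308602  -1.30589645]
     [ 0.27579799 -1.30921772]]

Output-layer bias:

    [ 0.23831534 -0.07974761]

Output layer (as printed; these values do not match `pred_out` computed from the weights above, so they are presumably the target `outputs`):

    [[ 0.95523837 -1.30332173]
     [ 0.5752935  -0.14659753]
     [-0.13773833  1.2507798 ]
     [ 0.90996906 -0.12079895]
     [ 1.38856383 -0.09266748]]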
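The output step can be followed for a single sample in the same way. This is a rough sketch in plain Python; `w_ho`, `b_o` and `pred_row` are illustrative names, and the numbers are copied from the printouts.

```python
import math

# First row of the pre-activation matrix, then the sigmoid activation.
pre_hidden_row = [-1.38172876, -0.33908706, 0.10448329, -0.69482905]
hidden_row = [1.0 / (1.0 + math.exp(-z)) for z in pre_hidden_row]

# Hidden -> output weights (4 x 2) and output-layer bias, copied from the printout.
w_ho = [
    [0.08112603, 1.25424186],
    [-1.38500489, -0.86261395],
    [-0.1308602, -1.30589645],
    [0.27579799, -1.30921772],
]
b_o = [0.23831534, -0.07974761]

# Linear output: weighted sum of the hidden activations plus the bias.
pred_row = [
    sum(hidden_row[i] * w_ho[i][j] for i in range(len(hidden_row))) + b_o[j]
    for j in range(len(b_o))
]
print(pred_row)  # roughly [-0.30, -1.31]
```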

The loss is the mean squared error between the predictions and the target `outputs`:

    mean_squared_error = np.mean(np.square(pred_out - outputs))
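For intuition, the same quantity can be written out without NumPy. The sketch below uses small made-up numbers (the `pred` and `target` values are hypothetical, chosen so the arithmetic is easy to follow) and a helper function that is not part of the original code.

```python
def mean_squared_error(pred, target):
    """Average of the squared element-wise differences over all entries."""
    squared = [
        (p - t) ** 2
        for pred_row, target_row in zip(pred, target)
        for p, t in zip(pred_row, target_row)
    ]
    return sum(squared) / len(squared)


pred = [[1.0, 2.0], [3.0, 4.0]]    # hypothetical predictions
target = [[1.0, 2.0], [3.0, 6.0]]  # hypothetical targets

# Only one of the four entries differs (by 2), so the result is 4 / 4.
print(mean_squared_error(pred, target))  # 1.0
```

Like `np.mean` on a 2-D array, this averages over every entry, not per sample.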

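The complete forward pass, followed by the call that prints the loss:

    import numpy as np

    def feed_forward(inputs, outputs, weights):
        # weights[0], weights[1]: input -> hidden weights and hidden-layer bias
        pre_hidden = np.dot(inputs, weights[0]) + weights[1]
        print("Input x weights + bias:", pre_hidden)
        # Sigmoid activation maps each hidden value into (0, 1)
        hidden = 1 / (1 + np.exp(-pre_hidden))
        # weights[2], weights[3]: hidden -> output weights and output-layer bias
        pred_out = np.dot(hidden, weights[2]) + weights[3]
        mean_squared_error = np.mean(np.square(pred_out - outputs))
        return mean_squared_error

    mse = feed_forward(inputs, outputs, weights)
    print("Mean squared error (MSE):", mse)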
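To try the function end to end, the following sketch rebuilds the arrays from the values printed earlier and runs the same three steps. Two things here are assumptions rather than part of the original: the printed "output layer" values are treated as the targets `outputs`, and the helper `forward_steps` is a hypothetical variant that also returns the intermediate `pre_hidden` so it can be compared with the printout.

```python
import numpy as np

inputs = np.array([
    [0.28016255, -0.22691806, -0.38542348],
    [-0.62147375, -0.04227238, 0.83113161],
    [-0.72065683, 1.34015883, -1.15663046],
    [-0.93960809, -0.10327481, -0.38309941],
    [0.25712053, -0.47601475, 0.92826205],
])
# Assumption: the printed output-layer values are used as targets.
outputs = np.array([
    [0.95523837, -1.30332173],
    [0.5752935, -0.14659753],
    [-0.13773833, 1.2507798],
    [0.90996906, -0.12079895],
    [1.38856383, -0.09266748],
])
weights = [
    np.array([[-0.05787758, -0.25370774, -0.46452347, -0.20896135],
              [0.59739324, -0.69363904, 1.22212876, -1.1199213],
              [-0.20813264, 1.04228812, 0.12906015, -0.88211173]]),
    np.array([-1.31017352, -0.02368456, 0.56169127, -1.23040283]),
    np.array([[0.08112603, 1.25424186],
              [-1.38500489, -0.86261395],
              [-0.1308602, -1.30589645],
              [0.27579799, -1.30921772]]),
    np.array([0.23831534, -0.07974761]),
]


def forward_steps(inputs, outputs, weights):
    """Same computation as feed_forward, but also returns pre_hidden."""
    pre_hidden = np.dot(inputs, weights[0]) + weights[1]  # shape (5, 4)
    hidden = 1 / (1 + np.exp(-pre_hidden))                # sigmoid, shape (5, 4)
    pred_out = np.dot(hidden, weights[2]) + weights[3]    # shape (5, 2)
    return np.mean(np.square(pred_out - outputs)), pre_hidden


mse, pre_hidden = forward_steps(inputs, outputs, weights)
print("pre_hidden shape:", pre_hidden.shape)
print("MSE:", mse)
```

The bias vectors have one entry per neuron and are broadcast across the five samples, which is why adding a length-4 vector to a 5 x 4 matrix works without an explicit loop.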

夜雨聆风
