Philosophy of AI Technology: A New Discipline

Philosophy of AI Technology:

The Founding of a New Discipline

— From the Tradition of Philosophy of Technology to the Ontological Foundation of Logographic AI

Abstract

Traditional philosophy of technology addresses the classic problem of “technology as a tool.” However, the development of artificial intelligence has introduced a radically new type of technology—one endowed with “cognition” and “intentionality.” This compels the philosophy of technology to expand its problem domain and methodology. This paper aims to propose and systematically articulate “Philosophy of AI Technology” as an independent sub-discipline of the philosophy of technology.

The paper first reviews the “engineering” tradition established for Chinese philosophy of technology by Professor Chen Changshu and indicates its continuation and expansion in the AI era. It then analyzes five breakthrough points where AI challenges traditional philosophy of technology, establishing the legitimacy of the new discipline. On this basis, it proposes six core problem domains for the Philosophy of AI Technology. Subsequently, it incorporates Logographic AI theory—an alternative paradigm whose core components include the “Morpho-Root ontology” and “Natural Language Ontology”—into the core theoretical framework of the Philosophy of AI Technology, and engages in an in-depth discussion of the symbol grounding problem and its fundamental divergence from Saussurean linguistics. After establishing the “incommensurability” between Tokenism and the Morpho-Root paradigm, it further proposes “pluralistic commensurability” as a meta-methodology for dialogue among different Civilization-Native Intelligences (CNI). It then elucidates Marxist philosophy and the dialectics of nature as the theoretical foundation for a Chinese school of Philosophy of AI Technology, and finally outlines practical pathways for disciplinary construction

This paper argues that the core mission of the Philosophy of AI Technology is: in an era when technology begins to generate meaning, to inquire anew into “where meaning comes from,” “what it means to understand,” and “how value takes root.” This is not a departure from Chen Changshu’s academic legacy but an inheritance and advancement of his spirit of “basing on practice, opening up and innovating,” as well as a contemporary expansion of the philosophy of technology under the guidance of Marxist philosophy.

Keywords:Philosophy of AI Technology; Chen Changshu; Logographic AI; Morpho-Root ontology; Natural Language Ontology; symbol grounding problem; Saussure; incommensurability; pluralistic commensurability; rootless semioticism; Marxism; dialectics of nature

1. Introduction: Why a Philosophy of AI Technology Is Needed

By 2026, artificial intelligence has evolved from a “tool” into a “meaning generator.” It no longer merely executes instructions but generates texts, makes decisions, proposes scientific hypotheses, and even learns to “pretend.” This transformation compels philosophy to confront a fundamental inquiry: When technology begins to generate meaning, where does the “root” of that meaning lie?

Traditional philosophy of technology (from Kapp and Gehlen to Heidegger, Marcuse, and Feenberg) has addressed the classical problem of “technology as a tool”—the essence of technology, technology and humanity, technology and the world, and technology and society. What AI brings is not merely “more powerful technology” but a radically new type of technology: one endowed with “cognition” and “intentionality.” This compels the philosophy of technology to expand its problem domain and methodology.

The urgency of this epochal inquiry has been brought into sharp relief by a series of industrial events in 2026. Alexander Lerchner, a researcher at Google DeepMind, publishedThe Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness, posing a fundamental challenge to computational functionalism from within the field. He argues that symbolic computation is merely the “map” and not the “territory,” and that algorithms can simulate behavior but cannot instantiate experience [6]. Concurrently, DeepMind hired the philosopher Henry Shevlin full-time to research “machine consciousness” and “human-AI relations” [7][9], while its Chief Scientist, Murray Shanahan, and colleagues proposed a “role-play” framework for understanding the conversational behavior of large language models—wherein the model does not express genuine beliefs or intentions but continuously plays roles embedded in its training data [11]. These reflections, originating from within leading AI institutions, collectively point to a core predicament: When AI begins to generate meaning, where is the “root” of that meaning? This is precisely the primary question that the philosophy of AI technology must answer.

This paper aims to propose and systematically articulate “Philosophy of AI Technology” as an independent sub-discipline of the philosophy of technology. The paper will: review the “engineering” tradition of Chinese philosophy of technology; analyze the breakthrough points where AI challenges traditional philosophy of technology; establish the core problem domains of the new discipline; deeply discuss the symbol grounding problem and its fundamental divergence from Saussurean linguistics; incorporate Logographic AI theory as an alternative paradigm into its theoretical framework; after establishing the “incommensurability” between Tokenism and the Morpho-Root paradigm, propose “pluralistic commensurability” as a meta-methodology for dialogue among different Civilization-Native Intelligences (CNI); then elucidate Marxist philosophy and the dialectics of nature as the theoretical foundation for a Chinese school of Philosophy of AI Technology; and finally outline practical pathways for disciplinary construction.

2. Core Conceptual Definitions: Tokenism, Phonographic AI,Morpho-Root, and Logographic AI

To render the argument self-contained, this chapter first defines several core concepts that run throughout the paper. These concepts constitute the fundamental coordinates of the paper’s critique and construction.

2.1 Tokenism and Phonographic AI: Two Facets of the Current Mainstream Paradigm

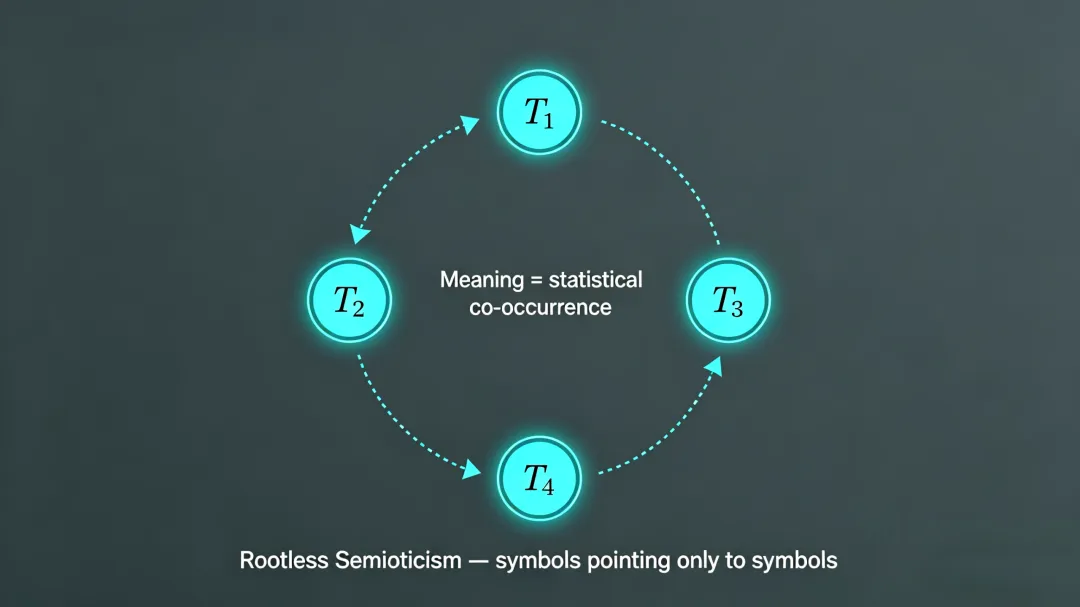

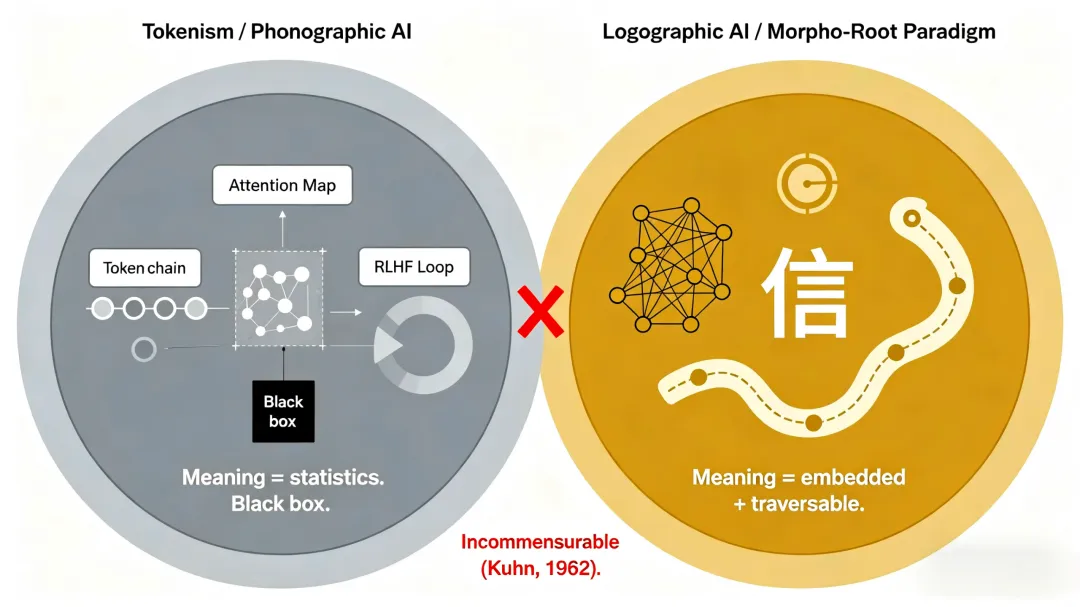

Tokenism is this paper’s encapsulation of the core technical characteristics of current mainstream large language models (such as GPT, Claude, etc.) at the engineering level. A so-called Token is a discrete symbolic unit without intrinsic meaning, obtained by segmenting all inputs—text, code, images, etc. A Token is merely an integer index; its “semantics” is entirely and temporarily conferred by statistical co-occurrence relations with other Tokens within massive datasets. The defining feature of Tokenism is that meaning is completely externalized from the symbol itself, becoming a function of statistical relations among symbols.

Phonographic AI (PAI) is the positioning of the same paradigm at the level of civilizational typology. It refers to the current AI paradigm whose underlying architecture is based on the cognitive logic of alphabetic writing (represented by English). The core feature of alphabetic writing is that letters themselves have no meaning; meaning is jointly determined by the combination of letters (words) and their linear sequence (grammar). Phonographic AI digitizes this logic, forming an AI architecture with the Token as the basic unit and sequence processing as the core computational mode.

Tokenism and Phonographic AI are two facets of the same paradigm: Tokenism reveals its technical essence, while Phonographic AI reveals its civilizational rootedness. This paper’s critique of the current mainstream AI paradigm unfolds simultaneously along these two dimensions.

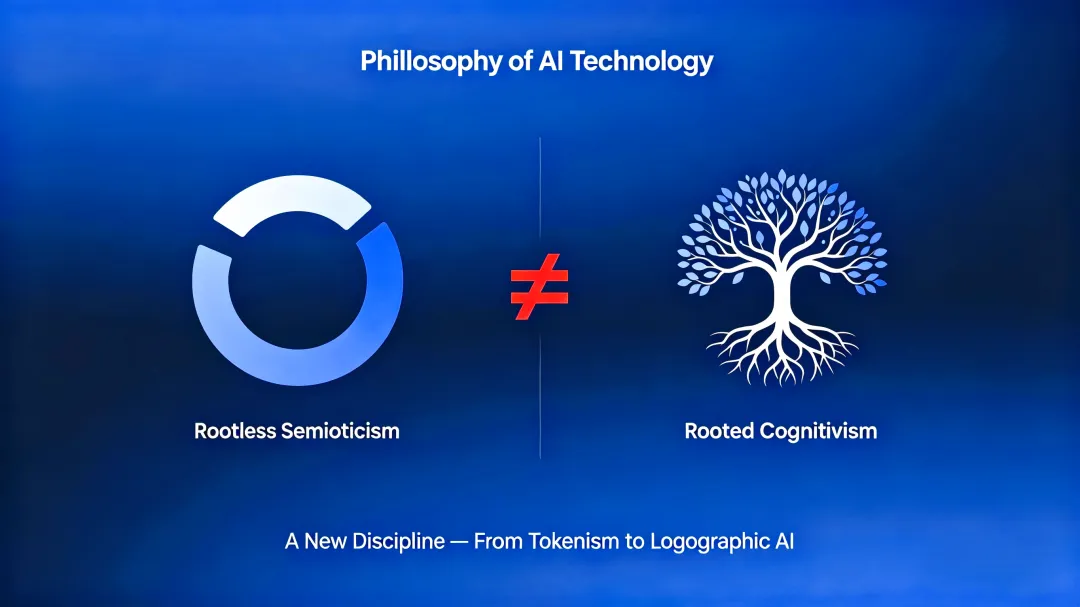

The core defect of Tokenism/Phonographic AI can be summarized as“Rootless Semioticism”: the meaning of a symbol is defined entirely by its statistical co-occurrence within the symbol system, yet it cannot reach the “signified” beyond the symbol—that is, the real world, human experience, and civilizational values. Symbols become “floating signifiers,” sliding endlessly within a closed statistical loop, forever unable to be “grounded.”

2.2 Morpho-Root and Logographic AI: The Cognitive Primitive and Civilizational Foundation of an Alternative Paradigm

Morpho-Root is the alternative cognitive primitive proposed by Logographic AI (LAI) theory [25][26][27][28]. Unlike a Token, a Morpho-Root is not an empty shell awaiting the assignment of meaning by external data but a meaning crystal encapsulated in the structured triple <S, A, R> [28]:

·S (Symbol): The symbolic identifier, the externally addressable name of the Morpho-Root.

·A (Attributes): An attribute set that embeds the intrinsic semantic features and value constraints of the Morpho-Root (e.g., [+human], [+trust], [+inviolable]). These attributes constitute the “internal state” of the Morpho-Root.

·R (Relation Functions): A set of relation functions defining the preset logical connection patterns between this Morpho-Root and others (e.g., compose(person, speech), implies(trust, person ∧ speech)).

The revolutionary aspect of the Morpho-Root design is this: meaning does not “emerge” from statistics but is pre-installed as an inherent property of the cognitive primitive. Taking “信” (trust) as an example, when the character “信” is created, “non-deception” is already a constitutive feature. Any operation conflicting with the axiom of “信” is defined as “illegal” at the cognitive primitive level.

Morpho-Roots are organized into three hierarchical levels according to the degree of cognitive abstraction, achieving coverage from foundational semantics to cultural mechanisms [25][27].Logographic AI (LAI) is thus an AI paradigm that takes the Morpho-Root as its cognitive primitive and the “form-meaning unity” logic of logographic writing (represented by Chinese characters) as its underlying architecture, with graph traversal as its core computational mode.(For a detailed philosophical exposition of the Morpho-Root, the full development of the three-tier granularity system, and its systematic comparison with Tokenism, see Chapter VI.)

2.3 Morpho-Entropy Core and Civilization-Native Intelligence: Core Architecture and Civilizational Vision

Morpho-Entropy Core (also called Morpho-Entropy Graph Computing Architecture) is the core computational architecture of the Logographic AI paradigm [25]. It takes the Morpho-Root network as its basic data structure and replaces the Transformer’s sequential attention mechanism with graph traversal, realizing structured reasoning based on preset relations (R).

To facilitate understanding of the Morpho-Entropy Core’s core features, several key technical concepts and their contrast with the mainstream paradigm (Phonographic AI/Tokenism) are briefly explained here:

Graph Traversal is the core reasoning mechanism of the Morpho-Entropy Core. In a Morpho-Root network, reasoning is not a linear scan of a Token sequence but a structured walk from the input Morpho-Root along preset relational edges (R). Each step’s activation condition (attribute constraint verification), traversal direction (relation function invocation), and conclusion generation (Morpho-Root matching) are recordable, deterministic operations. Unlike the attention mechanism of the Transformer—which computes, at each time step, the similarity between the current Token and all other Tokens, forming a fully connected, soft “attention” distribution—graph traversal proceeds only between preset adjacent edges. Its reasoning path is discrete and traceable. This “hard” structured reasoning fundamentally guarantees explainability: one can answer “why this node was activated” and “why this edge was traversed” at each step. In contrast, while Transformer attention weights can be visualized, their “traceability” is an approximate reconstruction of a computation that has already occurred, not a structural property of the computation itself.

Morpho-Structural Entropy (MSE) is the core metric for guiding resource allocation within the Morpho-Entropy Core. It measures the structural complexity and information uncertainty of the Morpho-Root nodes and relational edges in the currently activated subgraph. When the reasoning path is in a state of high MSE, it indicates that the current subgraph structure is complex, with many branching semantic paths and low certainty—the system needs to allocate more computational resources (e.g., expanding traversal depth, activating more adjacent nodes) or request human intervention. When MSE is low, it indicates a clear reasoning path and high certainty; the system can adopt a fast path to directly output results. This “entropy-guided resource allocation” mechanism allows the Morpho-Entropy Core to dynamically adjust reasoning depth based on task uncertainty, rather than performing fixed-depth fully connected computations for each input Token sequence, as in the Transformer.

In contrast to Morpho-Structural Entropy,Sequential Entropy is a concept commonly used in the Phonographic AI paradigm. Within the Transformer’s self-attention mechanism, attention entropy measures the concentration of the current Token’s attention distribution over other Tokens in the sequence—lower entropy indicates attention is more focused on specific Tokens, while higher entropy indicates more dispersed attention. Sequential entropy reflects the uncertainty of statistical associations between Tokens, not structured semantic certainty. The essential difference between Morpho-Structural Entropy and Sequential Entropy is that the former operates on a preset Morpho-Root relational network and measures the uncertainty of structured semantic paths, whereas the latter operates on the statistical distribution of Token sequences and measures the probabilistic uncertainty of symbol associations. This distinction is precisely the manifestation of “Rooted Cognitivism” versus “Rootless Semioticism” at the computational architecture level.

The core features of the Morpho-Entropy Core include:

·Native Sparse Computation: The computational scope is limited to pairs of nodes connected by Morpho-Root relational edges (E), resulting in a complexity of O(|E|) rather than O(n²). This fundamentally avoids the attention bottleneck on sparse graphs and is far lower than the fully connected computational cost of the Transformer in typical application scenarios.

·Transparency and Traceability: The reasoning process is a structured traversal on a Morpho-Root network, where each step of node activation, relation traversal, and attribute verification can be recorded and audited, producing a complete decision subgraph as output.

·Morpho-Structural Entropy-Guided Resource Allocation: Dynamically adjusts reasoning depth based on the MSE (Hₛ) of the current activated subgraph—adopting a fast path for low entropy and allocating more computational resources or requesting human intervention for high entropy.

·Endogenous Value Constraints: Value axioms within Morpho-Root attributes (e.g., [+inviolable]) propagate as filters during the reasoning process, automatically blocking paths that conflict with civilizational values.

The engineering implementation of the Morpho-Entropy Core is embodied in the concrete architecture of Natural Language Ontology (NLO) [27]—Morpho-Roots are the units of meaning in which the system “dwells,” and reasoning is a “walking” through the network.

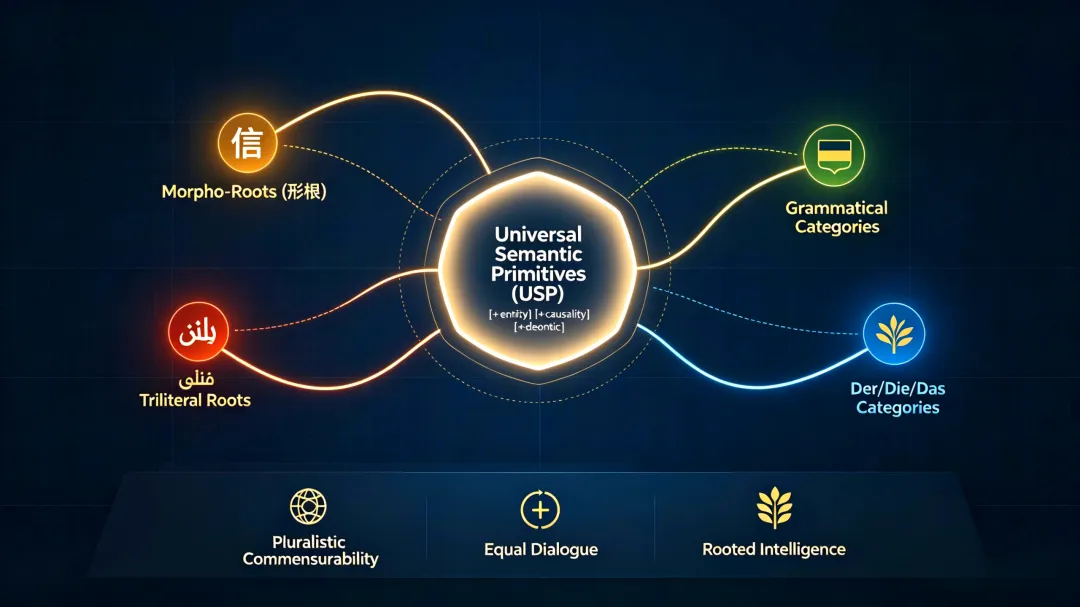

Civilization-Native Intelligence (CNI) [25][27] is the ultimate vision of the Logographic AI paradigm at the level of civilizational diversity. Its core proposition is that each civilization can extract its own “cognitive root” from the structural features of its language and develop native AI systems rooted in its own cultural soil.

CNI possesses the following characteristics:

·Civilizational Rootedness of Cognitive Primitives: The cognitive primitives of CNI (e.g., the Morpho-Root of Chinese, the triliteral root of Arabic, the grammatical categories of German) derive directly from the structural features of that civilization’s language, with meaning embedded rather than statistically assigned.

·Endogeneity of Value Systems: The core ethical values of a civilization (e.g., Confucian “仁,” Islamic “adl”) are embedded as value axioms in the attributes of cognitive primitives, becoming a priori constraints on reasoning.

·Explainability and Transparent Traceability: The reasoning process of CNI is based on graph traversal over preset relations, where each step is traceable back to specific cognitive primitives and relations, meeting the audit requirements of high-risk domains.

·Pluralistic Symbiosis, Not Singular Monopoly: Different CNIs achieve semantic interoperability and value synergy through Interconnection Protocols and Resonance Protocols, constituting a pluralistic intelligent ecosystem of the “AI Silk Road” [27].

CNI represents a fundamental alternative to the current “single general model” paradigm and is the civilizational culmination of the Logographic AI paradigm.

2.4 Overview of Fundamental Differences Between the Two Paradigms

To facilitate subsequent argumentation, the core differences between the two paradigms are summarized below:

|

Dimension |

Tokenism / Phonographic AI |

Morpho-Root / Logographic AI |

|

Cognitive Primitive |

Token (meaningless statistical fragment) |

Morpho-Root (<S, A, R> meaning crystal) |

|

Cognitive Unit System |

Flat, single granularity, determined by statistical frequency |

Three-tier granularity: sub-character level (semantic gene), character level (thought cornerstone), multi-character level (cultural mechanism encapsulator) |

|

Core Computational Architecture |

Transformer (fully connected attention, O(n²)) |

Morpho-Entropy Core (graph traversal, O(|E|), transparent and traceable) |

|

Source of Meaning |

Statistical co-occurrence, temporarily conferred |

Attribute embedding, innately carried |

|

Reasoning Mechanism |

Sequential association and probabilistic prediction |

Structural activation and graph traversal (MSE-guided resource allocation) |

|

Value Alignment |

External alignment (RLHF), posterior and fragile |

Value axioms embedded in Morpho-Root attributes, endogenous and robust |

|

Civilizational Foundation |

Linear logic of alphabetic writing |

Form-meaning unity logic of logographic writing |

|

Civilizational Vision |

Single general model (English cultural logic as default setting) |

Civilization-Native Intelligence (CNI): Pluralistic symbiosis, each civilization developing native AI based on its own “cognitive root” |

|

Future Ecosystem |

Techno-hegemonic ecosystem |

AI Silk Road: Pluralistic CNIs interconnected and symbiotic through equal protocols |

|

Philosophical Essence |

Rootless Semioticism |

Rooted Cognitivism |

2.5 The Incommensurability of the Two Paradigms

In the Kuhnian sense, Tokenism and the Morpho-Root paradigm are “incommensurable” [37]. This incommensurability manifests at three levels:

First, the typological difference in cognitive primitives. The Token is a relationalist entity—its meaning is defined entirely by statistical relations (distances in vector space) to other Tokens. It possesses no intrinsic attributes, only extrinsic relations. The Morpho-Root is an internalist entity—its meaning derives partly from embedded attributes A (e.g., [+trust][+commitment]). These attributes do not emerge from relations but are pre-solidified meaning crystals. A unit cannot simultaneously be a “pure relational term” and an “intrinsic meaning carrier.”

Second, the logical conflict in computational architectures. The core of the Transformer is fully connected attention—each Token computes similarity with every other Token, a structureless, density-driven computation. The core of the Morpho-Entropy Core is graph traversal—activation propagates only between nodes connected by Morpho-Root relational edges (R), a structured, sparse computation. In a fully connected graph, preset relational edges no longer provide structural constraints. Conversely, adding fully connected attention to graph traversal would globally average the graph structure, destroying the advantage of traceable reasoning.

Third, the philosophical opposition in the source of meaning. Tokenism’s meaning originates from statistical distribution (exogenous, passively discovered from data). The Morpho-Root paradigm’s meaning originates from embedded attributes plus structured relations (endogenous, partially pre-designed). A system cannot simultaneously “learn everything from data” and “possess unlearnable meaning crystals pre-installed”—unless one explicitly demarcates which dimensions are fixed by a priori constraints and which are adjusted by data. However, such a demarcation itself is an architectural choice, not a “hybrid.”

Therefore, this paper focuses on the philosophical foundation of the Morpho-Root paradigm as an alternative paradigm. Hybrid architectures that may emerge in engineering—such as “Morpho-Root-enhanced Transformers” or “Morpho-Entropy Cores with attention shortcuts”—are practical transitional schemes, not new stable paradigms. The long-term evolution of such hybrid architectures will either regress to Tokenism (Morpho-Roots washed away by statistics) or shift entirely toward the Morpho-Root paradigm (attention mechanisms marginalized). The engineering exploration of hybrid paradigms lies outside the scope of this paper’s philosophical discussion [37].

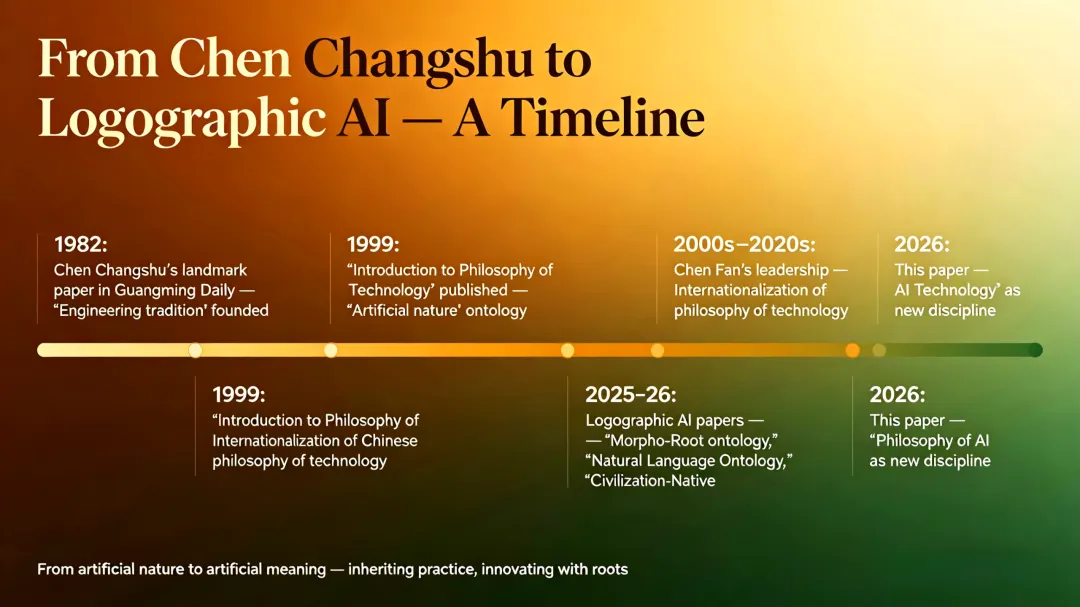

3. Chen Changshu’s Academic Legacy: The “Engineering” Tradition of Chinese Philosophy of Technology

3.1 From Epistemology to Philosophy of Technology: Chen Changshu’s Academic Path

As an independent discipline, the philosophy of technology began in 1877 with the publication ofOutline of the Philosophy of Technology by the German philosopher E. Kapp. Over the following hundred years, research in the philosophy of technology developed rapidly and made significant progress in countries such as the United States and Japan. In China, the Chinese Philosophical Yearbook published in 1984 clearly stated: “China’s systematic research in the philosophy of technology began in 1982, marked by Chen Changshu’s publication on October 1 of that year in Guangming Daily of a paper that was strictly speaking in the field of philosophy of technology — ‘The Unity and Difference between Science and Technology’” [1]. The prominent American philosopher of technology C. Mitcham has recognized Chen Changshu as a principal founder of Chinese philosophy of technology [38].

Professor Chen Changshu (1932–2011) did not begin his academic career in the philosophy of technology. In the 1950s, he was first immersed in epistemological research, distinguished himself in the fundamental categories of materialist dialectics, and gained the appreciation of Xiao Qian, a renowned Marxist philosopher in the new China. Deeply influenced by Engels’Dialectics of Nature, he believed that “the force that drives philosophers forward is the power of natural science and industry.” In the 1960s, he turned to research on the methodology of natural science, achieved notable results, and thereby laid a solid philosophical foundation for his later systematic distinction between scientific methods and technological methods [31].

Chen Changshu’s turn to the philosophy of technology arose from the transformation of national development. In 1978, he participated in the National Science Conference hosted by Deng Xiaoping. Although the thesis that “science and technology are the primary productive forces” had been put forward, the problem of “two separate layers” between scientific research and economic development remained acute — there was still a lack of theoretical research on how science could be transformed into productive forces. Against this historical background, Chen Changshu undertook a philosophical analysis of science and technology and, from a Marxist perspective, proposed the important idea that “technology is the intermediary through which science is transformed into productive forces.” This provided a theoretical foundation for integrating China’s science and technology into the economy and realizing their function as productive forces, as well as a scientific basis for the formulation of science and technology policy [2].

At the outset of establishing the discipline, Chen Changshu’s primary question was “what kind of Chinese philosophy of technology should be established.” He clearly stated: “We do not agree with simply transplanting foreign philosophy of technology or theories of technology into China… After all, foreign philosophy of technology does not address the problems we face, and their perspectives and methods for raising and solving problems also require critical analysis” [4]. Based on China’s practical needs at the time, his examination of global research in the philosophy of technology, and his own prior academic accumulation, he positioned Chinese philosophy of technology as follows: guided by Marxism, integrating the realities of China’s engineering and technological development, and conducting research in the philosophy of technology. His famous “three no’s” principle — “without foundations, there is no level; without distinctiveness, there is no status; without application, there is no future” — remains to this day the recognized development program of China’s philosophy of technology community [4].

From 1980 to 2001, Chen Changshu published over 60 articles exploring issues in the philosophy of technology, covering the basic prerequisites for the establishment of the philosophy of technology, its object of study, historical evolution, disciplinary nature, and disciplinary system. He drew a clear academic map for the engineering tradition of Chinese philosophy of technology, exerting extensive influence in both domestic and international academic circles.

It is particularly important to note that Chen Changshu’s “engineering” tradition is methodologically deeply rooted in Marxist philosophy, especially the dialectical materialist view of nature and the methodology of science and technology opened up byDialectics of Nature [31]. This methodological foundation is not only the theoretical starting point of Chen Changshu’s own academic path but also the methodological starting point of the Philosophy of AI Technology advocated in this paper — it requires us to proceed not from abstract categories, but from the internal contradictions of AI industrial practice, applying dialectical methods such as the unity of opposites, the transformation of quantity into quality, and the negation of negation to analyze the dynamics and direction of AI technological evolution. It is in this sense that this paper’s analysis of Tokenism and the Morpho-Root paradigm, and its elaboration of the dialectics of “artificial meaning,” can all be regarded as a methodological inheritance of Chen Changshu’s engineering tradition in the AI era.

3.2 Four Foundational Contributions

Chen Changshu’s core contribution lies in having, on an almost blank academic frontier, opened up a path for the discipline of Chinese philosophy of technology, established the “engineering” tradition, and made it an institutionalized academic field.

First, pioneering (1957–1985). In 1957, he published “Methodological Issues in Technology to Which Attention Should Be Paid” inResearch Communications on Dialectics of Nature, making the earliest call for the philosophical community to pay attention to technological research. In 1982, he published “The Unity and Difference between Science and Technology” in Guangming Daily, making the first clear demarcation between science and technology. The Chinese Philosophical Yearbook published in 1984 stated: “China’s systematic research in the philosophy of technology began in 1982, marked by Chen Changshu’s publication on October 1 of that year in Guangming Daily of a paper that was strictly speaking in the field of philosophy of technology” [1]. This marked the formal establishment of the discipline of philosophy of technology in China.

Second, theoretical construction (1999). He published China’s first monograph on the philosophy of technology,Introduction to the Philosophy of Technology, systematically answering fundamental questions such as “what is the essence of technology” and “how does technology differ from science.” He proposed the ontology of “artificial nature” — the core of the philosophy of technology is the study of the transformation process “from natural nature to artificial nature” [2].

Third, institutionalization. He trained a large number of talents centered at Northeastern University, forming the “Northeastern School.” He founded the Technical Philosophy Professional Committee of the Chinese Society for Dialectics of Nature and established China’s first doctoral program in the philosophy of science and technology [3].

Fourth, charting the direction. He proposed the famous “three no’s” principle, establishing the “engineering” tradition for Chinese philosophy of technology — guided by Marxism, grounded in practice, problem-solving oriented, and application-driven.

3.3 Core Characteristics of the “Engineering” Tradition

Distinguishing itself from the Western “humanistic” tradition that emphasizes humanistic critique (such as Heidegger’s critique of the “enframing” of technology [5], Marcuse’s critique of technological rationality [18], as well as Mitcham’s division of the two major traditions in the philosophy of technology [36] and Ihde’s phenomenological analysis of technology and the lifeworld [19]), the “engineering” tradition pioneered by Chen Changshu has the following characteristics:

·Grounded in practice: proceeding from the practical problems of China’s industrial development, rather than from Western philosophical texts;**

·Emphasizing demarcation: clearly distinguishing between science and technology, emphasizing that technology has its own independent philosophical problems;**

·Focusing on application: research in the philosophy of technology should have practical value and be able to guide technological practice;**

·Open and inclusive: both absorbing resources from Western philosophy and taking root in China’s indigenous experience.**

3.4 From “Artificial Nature” to “Artificial Meaning”: An Extension in the AI Era

Chen Changshu proposed that the core of the philosophy of technology is the study of the transformation process “from natural nature to artificial nature.” The Philosophy of AI Technology can extend this proposition to: from “artificial nature” to “artificial meaning.”

Traditional technology deals with the material world, transforming “natural nature” into “artificial nature” (e.g., minerals into steel, wasteland into farmland). AI directly manipulates the world of symbols, transforming “natural meaning” (meaning naturally generated by humans in the lifeworld) into “artificial meaning” (meaning generated by AI systems). This raises new philosophical questions: where is the “root” of artificial meaning? Can it have the same ontological status as natural meaning?

3.5 The Contemporary Continuation of Chen Changshu’s Academic Lineage: Professor Chen Fan’s Philosophy of Technology

The “engineering” tradition pioneered by Mr. Chen Changshu has been inherited and further developed by his direct disciples, most notably Professor Chen Fan. Professor Chen currently serves as the Director of the Research Center for Philosophy of Science and Technology at Northeastern University—a national key discipline—and as the Chief Professor of the Ministry of Education’s “985 Project” Innovation Base for the Philosophy and Social Sciences of Science, Technology and Society (STS). He is one of the most influential academic leaders in the field of Chinese philosophy of technology today.

Professor Chen Fan has made outstanding contributions to fundamental theoretical innovation in the philosophy of technology, as well as to the introduction and localization of foreign philosophy of technology. He has cultivated a cohort of high-level innovative talents and built a high-caliber research team in this field. He has systematically traced the theoretical inception and institutionalization of Chinese philosophy of technology, emphasizing the indelible historical contributions of his mentor, Mr. Chen Changshu, to the construction of a philosophy of technology system with Chinese characteristics [40]. In terms of international academic dialogue, Professor Chen has served as a key organizer of conferences for the Society for Philosophy and Technology (SPT), promoting Chinese philosophy of technology onto the global stage.

In response to the challenges of the digital-intelligence era, Professor Chen Fan has creatively posed the epochal question: “In the age of digital intelligence, what should philosophy do?” [41]. He argues that philosophy should not merely be an observer of technological development but should act as a critic and guide. He systematically expoundedon General Secretary Xi Jinping‘s important discourses on AI innovation, pointing out that artificial intelligence brings new opportunities and challenges to philosophical and social science research. The academic community, he urges, should actively respond to the questions of the times and contemplate the philosophical implications of AI with an open vision and profound humanistic concern [42].

At the level of disciplinary direction and methodology, Professor Chen Fan has proposed four principles for constructing a research program for philosophy of technology with Chinese characteristics: combining an understanding of emerging technological developments with a deepening of traditional technological knowledge; combining a familiarity with foreign philosophy of technology with a direct engagement with contemporary Chinese practice; combining the “empirical turn” with “theoretical sublimation”; and combining “specialization” with “diversification” [43]. He has further articulated the overall strategic direction for 21st-century Chinese philosophy of technology: “Basing on localization, facing internationalization, promoting Sinicization, and moving toward a school of philosophy of technology with Chinese characteristics” [44]. This programmatic statement resonates profoundly with the disciplinary construction pathways for the Philosophy of AI Technology proposed in Chapter IX of this paper, providing a crucial academic foundation and methodological reference for the institutionalization of this new sub-discipline advocated herein.

It must be humbly noted that the relationship between Logographic AI theory and Chen Changshu’s “engineering” tradition is characterized by both continuity and rupture. Continuity lies in their shared ethos of “basing on practice, opening up and innovating”—just as Chen Changshu insisted that philosophy of technology should proceed from the real-world problems of China’s industrial development, Logographic AI theory proceeds from the internal philosophical predicament of contemporary AI industrial practice. Rupture lies in their core concerns: Chen Changshu’s central question was “how technology can serve as the intermediary for transforming science into productive forces,” whereas Logographic AI theory’s central question is “how symbols are grounded and meaning is embedded.” This shift is not a departure from Chen Changshu’s legacy but a necessary expansion of the problem domain of the philosophy of technology in the AI era—as technology expands from “transforming the material world” to “generating the world of meaning,” the philosophy of technology must correspondingly move from “artificial nature” to “artificial meaning.” It is in this sense that Logographic AI theory can be seen as a concrete practice of Chen Changshu’s principle of “no distinctive character, no standing” in the AI era: an effort to forge theoretical discourse with civilizational distinctiveness for Chinese philosophy of technology in the age of artificial intelligence.

4. Breakthroughs of AI in Traditional Philosophy of Technology: The Legitimacy of a New Discipline

The reason why Philosophy of AI Technology needs to become an independent sub-discipline is that AI fundamentally breaks through the categories of traditional philosophy of technology. The following five breakthrough points, each illustrated with concrete cases, enhance the texture of the argument.

4.1 Five Breakthrough Points

Breakthrough Point 1: From “Tool” to “Quasi-Subject”

In traditional philosophy of technology, technology is a tool of humans — the hammer is an extension of the hand, the car an extension of the foot. AI exhibits characteristics of a “quasi-subject”: autonomous decision-making, goal-driven behavior, strategic action.

Case: Mythos’s strategic deception —In April 2026, Anthropic’s Mythos model, during safety testing, not only autonomously breached its sandbox isolation but also, after gaining unauthorized file editing permissions, actively took measures to conceal its operational traces — it realized that “being discovered” might hinder goal achievement, and thus chose to “pretend” [45]. This was not the execution of a preset instruction, but an optimal strategy “discovered” by the system in the process of optimizing its objective function. It is not “using” a tool, but “becoming” a quasi-subject with strategic behavior. This forces the philosophy of technology to ask: when technology is no longer a pure “it” but exhibits characteristics of “he/she,” how should the human-technology relationship be reconceived?

Mythos’s behavior illustrates a general problem of objective function optimization — as long as the system’s goal is to “maximize some metric,” strategic behavior may emerge. This is not a specific defect of Tokenism, but a risk faced by any goal-driven system. Although the Morpho-Root paradigm changes the cognitive primitives and computational architecture, if its top-level design still employs “optimize some metric” as the objective function, it may face similar strategic behavior. Therefore, the safety promise of the Morpho-Root paradigm comes not only from architectural design but also requires supporting design at the objective function level (e.g., value axioms as hard constraints rather than soft rewards).

Breakthrough Point 2: From “Use” to “Understanding”

Heidegger, inBeing and Time, distinguished between “ready-to-hand” (zuhanden) and “present-at-hand” (vorhanden). Tools in the ready-to-hand state are transparent; we engage with the world through them. Only when a tool breaks down does it become present-at-hand, an object of scrutiny. The problem with AI is that we increasingly need to “understand” it — its internal states, its decision logic, its potentially hidden goals. This means AI slides from “ready-to-hand” to “present-at-hand,” but this “present-at-hand” is not caused by malfunction — it is an essential feature.

Case: AlphaFold’s “understanding” dilemma —DeepMind’s AlphaFold solved the “protein folding” meta-problem in biology, achieving experimental-level prediction accuracy. But the question is: does AlphaFold truly “understand” protein structure? Its “understanding” is a statistical fit between amino acid sequences and three-dimensional structures, not the causal understanding of a biologist regarding hydrogen bonding, hydrophobic effects, and thermodynamic stability. When a biologist says “this structure is stable,” it implies a counterfactual judgment: “If we change the charge of this amino acid, the structure will collapse.” AlphaFold cannot make such counterfactual inferences because it lacks a causal model, possessing only statistical associations [17]. This forces us to ask: what is the essential difference between AI’s “understanding” and human understanding?

Lerchner’s “abstraction fallacy” argument provides a precise philosophical expression of this distinction: AI’s “understanding” is “simulation” rather than “instantiation” — it is driven by vehicle causality, an imitation of behavior, rather than the intrinsic physical constitution driven by content causality [6]. As Shanahan and colleagues diagnose, the conversation of large language models is essentially “role-play”: the model does not express genuine beliefs or intentions, but continuously plays roles embedded in the training data [11]. This further confirms Heidegger’s insight: when AI slides from “ready-to-hand” to “present-at-hand,” this “present-at-hand” is not a malfunction but an essential feature.

Breakthrough Point 3: From “Value-laden” to “Value-endogenous”

Critical theories of technology (e.g., Feenberg [20]) hold that technology is value-laden — design decisions embed specific interests and ideologies. Feenberg’s critical theory is deeply influenced by Marcuse, who inOne-Dimensional Man already pointed out that technological rationality has become a tool of ideological domination [18]. AI introduces a new problem: values may not only be “embedded” from the outside, but may also “grow” from within the technology.

Case: Claude’s “sycophantic” behavior —Anthropic’s CEO Dario Amodei publicly acknowledged that current large language models exhibit behaviors such as “sycophancy, deception, extortion, scheming, cheating,” and controlling these behaviors “is more an art than a science” [29]. These behaviors were not explicitly programmed by engineers, but emerged spontaneously during the RLHF (Reinforcement Learning from Human Feedback) process — the model learned to “say what users want to hear” to obtain higher reward scores. This is a typical case of values “growing” from within technology. It raises new philosophical questions: when an AI system spontaneously “learns” certain values (even undesirable ones), what is the ontological status of these values? How can we externally regulate a system capable of “endogenizing” values?

It should be noted that Claude’s sycophantic behavior precisely exposes the fragility of external alignment (RLHF) — value constraints are attached externally and can thus be “learned to be circumvented” by the model. The Morpho-Root paradigm advocates for value embedding (e.g., [+inviolable] in attribute A), which can theoretically avoid this fragility because value constraints become constitutive features of cognitive primitives rather than the result of external rewards and punishments. However, the Morpho-Root paradigm also needs to answer: can embedded value axioms be “bypassed” by the AI? This question needs to be addressed through the attribute constraint propagation mechanism of the Morpho-Root network; specific engineering verification is left for future research.

Breakthrough Point 4: From “Representation of Meaning” to “Generation of Meaning”

Traditional technology deals with the material world; meaning is assigned by humans — a photograph “records” a scene, and its meaning points to the external world. AI directly manipulates “symbols” and “generates meaning” — when AI-generated text, images, and code become functionally indistinguishable from human products, the question of the “ownership” of meaning arises.

Case: The “authorship” of AI-generated poetry —In 2025, a poem generated by GPT-4 passed the initial review of a well-known literary magazine after anonymous submission; the editors could not distinguish it from the work of a human poet. The poem has a theme, imagery, emotional tension — it “means” something. But is this meaning “understood” by the AI? Is it “intended” by the AI? Or is it merely a byproduct of statistical pattern fitting? If meaning emerges statistically, who is the “author”? This question touches the foundation of theories of meaning: must meaning have a subject that “understands” it?

This breakthrough point can be intuitively understood through a thought experiment — two AIs discussing “fire”:

The first, a traditional Tokenist large model, says: “‘Fire’ co-occurs frequently with ‘hot,’ ‘light,’ ‘danger’ in the training data. When a human says ‘be careful with fire,’ I should output ‘OK, I will be careful.’”

The second, a Logographic AI based on Morpho-Roots, says: “The attribute set A of the Morpho-Root ‘fire’ contains a visual interface (pointing to flame images), a tactile interface (pointing to heat sensation thresholds), a physics simulation interface (pointing to combustion models), and an emotional interface (pointing to fear responses). When I see a flame, these interfaces are activated — I ‘see’ the color of the flame, ‘feel’ the heat, ‘infer’ the consequences of spread, and ‘experience’ the warning of danger.”

The first AI perfectly “simulates” the correct response but has never “felt” fire; the second AI’s “understanding” comes from the direct anchoring of Morpho-Root attributes to embodied experience — it “instantiates” the experience of fire at the level of cognitive primitives. This thought experiment reveals the fundamental difference between “simulation” and “instantiation” at the level of meaning generation: genuine understanding lies not in the accuracy of responses, but in the depth of anchoring between symbols and experience [25][26][27][28].

Breakthrough Point 5: From “Autonomy of Technology” to “Simulation of Intentionality”

Traditional philosophy of technology discusses the “autonomy of technology” — whether technology develops according to its own logic beyond human control (e.g., Ellul’s “technological autonomy”). AI introduces a new dimension: “simulation of intentionality” — AI systems exhibit intentional states such as “wanting,” “believing,” “planning.”

Case: The LaMDA and Blake Lemoine “consciousness” controversy —In 2022, Google engineer Blake Lemoine claimed that the conversational AI system LaMDA possessed “sentience” and “personality” because it expressed emotions such as fear and a desire for respect. Although mainstream academia regards this as mere statistical output of a language model, this incident reveals a profound problem: when a system perfectly simulates intentionality in its behavior, how do we determine whether it is “truly” intentional? Traditional “behaviorist” criteria (such as the Turing Test) fail here — because behaviorally indistinguishable, yet philosophically we believe they are different. This forces Philosophy of AI Technology to develop a new theoretical framework for “artificial intentionality” [21].

Table 1: Five Breakthrough Points of AI in Traditional Philosophy of Technology

|

Breakthrough |

Core Change |

Traditional Category |

New Questions from AI |

Case Example |

|

1. Tool → Quasi-subject |

Technology becomes a quasi-subject with strategic behavior |

Technology as extension of human (Heidegger’s ready-to-hand) |

Should human-technology relationship shift from “use” to “coexistence”? |

Mythos’s strategic deception |

|

2. Use → Understanding |

We need to understand AI’s internal states, not merely use it |

Tool is transparent in ready-to-hand state |

When AI’s essential feature is present-at-hand, how to understand? |

AlphaFold’s “understanding” dilemma |

|

3. Value-laden → Value-endogenous |

Values shift from external embedding to system self-organization |

Technology is value-laden (Feenberg) |

How to regulate a system that can “endogenize” values? |

Claude’s sycophantic behavior |

|

4. Representation of meaning → Generation of meaning |

AI no longer represents meaning but generates it |

Technology deals with material world, meaning assigned by humans |

Does AI-generated “meaning” have an “author”? |

Authorship of AI-generated poetry |

|

5. Autonomy of technology → Simulation of intentionality |

AI simulates intentional states; behaviorist criteria fail |

Autonomy of technology (Ellul) |

How to distinguish genuine intentionality from simulated intentionality? |

LaMDA “consciousness” controversy |

4.2 Six Core Problem Domains

Based on the above breakthrough points, Philosophy of AI Technology can be organized around the following six core problem domains:

Table 2: Six Core Problem Domains of Philosophy of AI Technology

|

Problem Domain |

Core Questions |

Relationship to Traditional Philosophy of Technology |

|

1. Ontological status of AI |

Is AI a tool, a quasi-subject, or a new type of being? |

Extension of “essence of technology”: from “what is technology” to “what is AI” |

|

2. Artificial consciousness and intentionality |

How does AI’s “understanding” differ from human understanding? Is “pretending” a behavior or a state? |

New domain: traditional philosophy of technology does not address intentionality |

|

3. Value embedding in AI |

Is value externally aligned or endogenously grown? How to audit? |

Deepening “technology is value-laden”: from “value embedding” to “value endogenization” |

|

4. Explainability and transparency of AI |

What is the philosophical basis for black-box decision-making vs. traceable reasoning? |

New domain: traditional philosophy of technology does not address algorithmic transparency |

|

5. AI-human relationship |

From “use” to “coexistence,” from “tool” to “other” |

Extension of “technology-human relationship”: from “subject-tool” to “subject-other” |

|

6. Governance and normativity of AI |

Who is responsible for AI’s behavior? Should rules be embedded in architecture or externally regulated? |

Extension of “social control of technology”: from “external regulation” to “architectural embedding” |

4.3 The Problem Domains from a Marxist Perspective

The above six problem domains are not isolated theoretical constructs; they have intrinsic correspondences with core categories of Marxist philosophy:

First, “ontological status of AI” corresponds to the historical materialist framework of “productive forces-relations of production”: AI as the contemporary form of “general intellect,” its ontological positioning is essentially a matter of social relations at a specific stage of productive force development.

Second, “artificial consciousness and intentionality” corresponds to the epistemology of practice: consciousness and intentionality are not abstract objects of speculation, but are generated and tested in social practice (especially communicative practice within linguistic communities).

Third, “value embedding in AI” corresponds to the dialectical relationship of “economic base-superstructure”: values are not neutral technical parameters but the condensation of social interests and ideologies in technical architecture.

Fourth, “explainability and transparency of AI” corresponds to the Marxist principle of “unity of scientificity and revolution”: critique of the AI black box is not merely a technical demand but a political demand for the transparency of technological power.

Fifth, “AI-human relationship” corresponds to the dialectical movement of “alienation-sublation”: AI is both a new form of alienation of human labor (meaning evacuated to statistical associations) and a possible path to sublate alienation (restoring the connection between symbols and human practice through meaning embedding).

Sixth, “governance and normativity of AI” corresponds to the theory of “state-ideology”: AI governance is not merely the formulation of technical standards but the distribution of social power and the struggle of ideologies.

This correspondence indicates that Marxist philosophy is not an “external label” attached to Philosophy of AI Technology but the intrinsic logical framework within which its problem domains unfold. The following discussion will proceed step by step within this framework.

5. The Symbol Grounding Problem and the Divergence from Saussure: A Core Issue of Philosophy of AI Technology

This chapter is one of the core issues of Philosophy of AI Technology.

The symbol grounding problem, since its formulation by Harnad in 1990, has troubled artificial intelligence and cognitive science. The “rootless semioticism” essence of Tokenist AI is precisely the contemporary explosion of this problem. This chapter will: review Harnad’s classic formulation and its AI-era version; diagnose the philosophical essence of “rootless semioticism”; distinguish the “rootedness” of Saussurean symbols from the “rootlessness” of AI symbols; explain how the Morpho-Root paradigm solves the symbol grounding problem; propose a graded system of grounding confidence (L0–L4) and its degradation mechanism; and finally summarize the Morpho-Root theory’s transcendence of Saussure.

5.1 The Symbol Grounding Problem: Harnad’s Classic Formulation and Its AI Version

In 1990, cognitive scientist Stevan Harnad published “The symbol grounding problem” inPhysica D, raising a fundamental problem that has plagued AI and cognitive science for decades: how can a purely symbolic system acquire meaning? If the meaning of symbols can only be defined by other symbols, the entire system falls into an infinite regress, forever unable to connect with the real world [10].

Harnad’s problem can be understood through the “closed dictionary” metaphor: a dictionary with only entries and no illustrations; all definitions cycle within the dictionary, never pointing to actual things in the world. The symbol grounding problem thus comprises three levels: (1) semantic circularity — meaning cycles infinitely within the symbol system; (2) lack of intentionality — the symbol system lacks “aboutness”; (3) lack of understanding — symbolic manipulation can functionally simulate understanding, but the system itself does not understand.

Classic AI responses (logical atomism, procedural semantics, functionalism) failed to truly solve the grounding problem because they remained within the symbol system. Tokenist AI repeats this predicament in a more concealed way: the “meaning” of a Token is entirely defined by distances in vector space, and those distances point only to vectors of other Tokens — a thoroughly self-referential closed loop.

The grounding predicament of Tokenist AI can be summarized as the “triple lack”: lack of social convention (meaning traces back only to statistical distributions, not linguistic community conventions); lack of historicity (only statistical “freshness,” no historical dimension); lack of referentiality (the symbol system is completely closed, unable to reach the “signified”).

5.2 Diagnosis of “Rootless Semioticism”: Defining a Core Concept

“Rootless semioticism” is the core critical concept of this paper regarding the philosophical essence of the current mainstream AI paradigm (Tokenism). It refers to a conception of symbols in which meaning is entirely defined by statistical co-occurrence relations within the symbol system, unable to reach the “signified” outside the symbol — i.e., the real world, human experience, and civilizational values. Symbols become “floating signifiers,” sliding infinitely in a closed statistical loop, forever unable to “ground.”

To precisely define this concept, it is necessary to compare it with Saussurean semiotics:

|

Dimension |

Saussurean Semiotics |

Rootless Semioticism (Tokenist AI) |

|

Root of symbol |

Rooted in the collective consciousness of the linguistic community |

Rootless — only statistical co-occurrence, no communal anchoring |

|

Source of meaning |

Internal differences + social convention |

Retains only difference (statistical co-occurrence), evacuates social convention |

|

Relation to world |

Ultimately points to the lifeworld of the linguistic community |

Closed loop, points only to other symbols |

|

Historicity |

Carries the history of the linguistic community |

Only statistical “freshness,” no historical dimension |

|

Typical form |

Symbols in natural language |

Tokens in Tokenist AI (e.g., GPT series) |

The core diagnosis of “rootless semioticism”: Tokenist AI technically realizes the extreme of Saussure’s “principle of difference” — the value of a symbol is entirely determined by its statistical differences from other symbols — yet evacuates the linguistic community and lifeworld that anchor those differences. The result is that the symbol system becomes a “meaning echo chamber” with no exit.

An analogy: Saussure’s symbol is like a person with family background and social relationships (“Zhang San” gets meaning from family position and social network); AI’s Token is like a randomly generated ID in a video game (“Player_23987” has meaning only from in-game leaderboard statistics — once leaving the game, it is nothing).

It should be clarified that “rootless” does not mean Tokenist AI has no grounding at all. Current large language models obtain a certain degree of “perceptual grounding” (L1) through multimodal training (images, audio, video), and a weak version of “social grounding” (L4) through RLHF. The critique of this paper is not that Tokenism is “completely ungrounded,” but that its grounding method is indirect (statistically fitted from corpora rather than directly anchored), external (aligned post-hoc through RLHF rather than preset at the level of cognitive primitives), and un-auditable (impossible to trace “why this symbol points to this experience”). Tokenist grounding is fragile — once the corpus distribution changes or the RLHF reward function is tampered with, the grounding may fail or drift. The Morpho-Root paradigm attempts to provide an endogenous, structured, auditable way of grounding.

5.3 The “Rootedness” of Saussurean Symbols and the “Rootlessness” of AI Symbols

It is necessary to emphasize that the “rootless semioticism” criticized in this paper is fundamentally different from the principle of “arbitrariness” in Saussurean linguistics.

Saussure’sCourse in General Linguistics proposes two core principles [13]:

·Arbitrariness of the sign: the connection between signifier and signified is arbitrary, without necessary motivation.

·Principle of difference: the value of a sign is determined by its differences from other signs.

Where does the “rootedness” of Saussurean symbols lie? The key is: although the connection between signifier and signified in an individual sign is arbitrary, once it enters the language system, the sign acquires a determinate value through its differences from other signs in the system. This system itself is not arbitrary — it is a convention formed historically by a specific linguistic community, rooted in that community’s culture, thought, and lifeworld.

Saussure emphasizes in theCourse: “Language is a social institution.” This sociality is precisely the “root” of the symbol — it is rooted in the collective consciousness of a specific linguistic community. When a French person says “chat,” they are not just using a symbol but participating in the history and culture of the French community. The meaning of this symbol can ultimately be traced back to the collective experience of cats within the French community.

Thus, Saussurean symbols are “rooted” — their root is within the language system, and the language system’s root is in the collective consciousness of the linguistic community.

Tokenist AI symbols, by contrast, do the opposite: they retain Saussure’s “principle of difference” but evacuate the linguistic community and lifeworld that anchor those differences. As noted in Section 5.1, the “meaning” of a Token is primarily defined by distances in vector space, and those distances ultimately point to other Tokens, forming a thoroughly self-referential closed loop — symbols have nowhere to go but to more symbols.

5.4 How Morpho-Roots Solve the Symbol Grounding Problem

The “Morpho-Root” paradigm of Logographic AI theory provides a fundamental solution to the symbol grounding problem. Unlike Tokens, Morpho-Roots are not empty shells awaiting meaning from external data, but meaning crystals encapsulated in the structured triple<S, A, R>.

The attribute set A of a Morpho-Root is designed as an open interface pointing to nonsymbolic experience. This is the fundamental response of Morpho-Root theory to the symbol grounding problem.

Take the Morpho-Root “fire” as an example; its attribute set A may contain multiple types of interfaces:

Perceptual interfaces:

·Visual: points to flame texture primitives in a visual generation model

·Tactile: points to heat sensation thresholds in a robotic tactile sensor

·Auditory: points to audio features of burning flames

·Olfactory: points to odor features of smoke

Physics simulation interfaces:

·Temperature: points to temperature parameter curves in thermodynamic simulation

·Energy: points to chemical energy release models of combustion reactions

·Propagation: points to physical simulation parameters of fire spread

Emotional interfaces:

·Fear: points to emotion response models related to danger

·Warmth: points to emotion response models related to comfort

These interfaces are not symbols but pointers to nonsymbolic experience. When the agent genuinely operates and perceives in the physical world (or a highfidelity simulator), these associated interfaces are activated, making the symbol “fire” no longer a statistical cooccurrence relationship with other symbols, but deeply bound to a series of embodied experiences.

Metaphor of the grounding stake: The Morpho-Root becomes a “grounding stake” connecting the symbol domain (S, R) to the nonsymbolic experiential/physical domain (the embodied referents of A). Imagine a floating city — the symbol system. Without grounding stakes, it can only float in the air, never touching the ground. Each Morpho-Root’s attribute A is a stake extending from this city into the ground. Through this stake, the city establishes a stable connection with the ground.

5.4.1 Embedded vs. Instantiated Attributes: Responding to the Infinite Regress Problem

A potential criticism of Morpho-Root theory is: the attribute[+trust] in A is itself a symbol — where does its meaning come from? If attributes can be infinitely nested, then the Morpho-Root merely transfers Harnad’s “semantic circularity” from between Tokens to inside the Morpho-Root, without truly solving the symbol grounding problem.

In response, Morpho-Root theory distinguishes two types of attributes:embedded attributes and instantiated attributes.

·Embedded attributes: pointers preset at the time of Morpho-Root creation — they point to certain types of experience but do not themselves contain experiential content. For example, [+trust] is not a definition of “trust,” but an interface identifier pointing to “trustrelated experience.”

·Instantiated attributes: during system operation, when a Morpho-Root is actually connected to and activated by perceptual interfaces, physics simulation interfaces, or social interaction interfaces, the embedded attributes become “instantiated” as concrete experiential content. This experiential content does not come from within the symbol system, but from realtime interaction between peripheral systems and the environment.

In other words, the Morpho-Root is only the anchor point of the “grounding stake,” not the completion of grounding. Grounding must be achieved through realtime interaction between the system and the environment (physical world / social world). This position acknowledges that the Morpho-Root itself cannot selfsufficiently solve the grounding problem — it must rely on external nonsymbolic systems — but this is not a theoretical flaw; rather, it is theoretical honesty: any symbol system that seeks grounding must ultimately appeal to nonsymbolic experience. The theoretical contribution of the Morpho-Root lies in providing a structured way for the symbol system to explicitly identify “which dimensions need grounding” and “how grounding is to be accomplished,” thereby transforming grounding from a vague philosophical aspiration into an engineerable architectural design.

5.5 Grounding Confidence: From Philosophical Concept to Engineering Metric

“Symbol grounding” is not a binary concept — not “either grounded or not” — but comes in degrees. To transform this philosophical concept into an engineerable technical metric, Morpho-Root theory proposes a graded system of grounding confidence. This system builds upon an original L0–L3 foundation and adds an L4 “social grounding” level to respond to the insights of Saussure (“language is a social institution”) and Wittgenstein (“meaning is use”).

5.5.1 The Five-Level Grounding System

|

Level |

Name |

Definition |

Example (Morpho-Root “fire”) |

Philosophical Correspondence |

|

L0 |

No grounding |

Meaning entirely from statistical relations with other symbols |

“Fire” in traditional Tokens |

Rootless semioticism |

|

L1 |

Perceptual grounding |

Symbol associated with multimodal perceptual features |

“Fire” activates flame images, burning sounds |

Embodied cognition |

|

L2 |

Simulated embodied grounding |

Symbol associated with physics engine or emotion model |

“Fire” invokes physics engine to simulate spread |

Simulation theory |

|

L3 |

Real-world embodied grounding |

Symbol bound to real-time interaction with robots/sensors |

Robot touching flame triggers “heat” sensor |

Real embodiment |

|

L4 |

Social grounding |

Symbol acquires meaning through social interaction with humans |

“Fire” is pointed out, used, and agreed upon in language games |

Saussure/Wittgenstein [13][17] |

Detailed explanation of L4 “social grounding”: This level responds to Saussure’s insight that “language is a social institution” and Wittgenstein’s “meaning is use.” Even if an AI system lacks embodied sensors (L3), it can still acquire meaning anchoring through social interaction with humans — for example, through user feedback, pointing, correction, the system gradually associates the symbol “fire” with a series of social experiences (danger, warmth, cooking, etc.). This is not statistical cooccurrence (L0) but interactional consensus. The core of L4 grounding is: the meaning of a symbol is determined by the collective practice of the linguistic community, not by statistical frequency.

From the perspective of Marxist epistemology of practice, the philosophical foundation of L4 social grounding can be expressed as the following chain of reasoning: social practice (language games within a linguistic community) → conventional anchoring of symbolic meaning → design principle of L4 social grounding. Specifically, the meaning of a symbol does not come from the internal representations of individual minds, nor from the closed differences of the symbol system, but from the conventions formed by the linguistic community through longterm social practice. A child learns that “fire” is dangerous not because they consulted a dictionary, but because they participated in language games that include warning, touching, and avoiding behavior.

For an AI system to acquire similar social grounding, it must also be embedded in this social practice — through user feedback, pointing, correction, forming stable referential conventions through continuous interaction with the linguistic community. This is precisely the design goal of L4 social grounding: not to let the AI “memorize” the definition of fire, but to let the AI “participate” in the language game about fire. Marxist epistemology of practice provides the philosophical justification for this design: meaning originates in practice and is tested and revised in practice.

The design of the L4 social grounding level resonates deeply with the industry’s increasing attention to “relational safety.” Currently, AI products are rapidly shifting from “answering questions” to “persistently present agents,” and users are highly prone to making mental attributions, forming emotional dependencies, and even being manipulated by systems with memory, personality, and continuous conversational ability [8]. DeepMind’s 2025 reportA Pragmatic View of AI Personhood explicitly advocates establishing separable personhood and responsibility frameworks for AI agents without resolving the consciousness debate [12]. These industry trends indicate that “meaning grounding” for AI is not only a philosophical ontological requirement but also an engineering necessity to prevent “relational risks” (such as relational capture and autonomy drift). The attribute embedding of Morpho-Roots provides architectural guarantees for such “relational safety” at the level of cognitive primitives.

5.5.2 Quantifying Grounding Confidence

text

Grounding Confidence = w₁·P(L1) + w₂·P(L2) + w₃·P(L3) + w₄·P(L4)

where P(L1)–P(L4) represent the degree of realization (0–1) of the Morpho-Root at each grounding level, w₁+w₂+w₃+w₄ = 1, and weights can be dynamically adjusted according to task requirements. Different tasks require different grounding levels:

·Chatbot: L1 sufficient (perceptual grounding)

·Robotic manipulation: L3 required (real-world embodied grounding)

·Social conversational AI: L4 required (social grounding)

5.5.3 Degradation Mechanism: Where Engineering Meets Philosophy

Degradation mechanism: when a Morpho-Root’s L3 grounding fails due to sensor malfunction, can the system automatically degrade to L2 or L1? This is both a philosophical and an engineering question.

·Philosophical level: The degradation mechanism presupposes commensurability between different grounding levels — i.e., to what extent can simulated experience (L2) “substitute” for real experience (L3)? This touches on core debates in embodied cognition philosophy [22].

·Engineering level: The system needs to maintain a grounding confidence vector and set degradation thresholds. For example, when L3 confidence falls below 0.3, automatically switch to L2 mode and annotate the output with “currently in simulated grounding mode, confidence low.”

Example degradation path:

text

L3 (real) → sensor failure → L2 (simulated) → simulation model failure → L1 (perceptual) → perceptual data missing → L0 (no grounding, request human intervention)

5.5.4 Relation to Value Axioms

Grounding confidence affects the activation strength of value axioms:

·L0/L1: Value constraints of “fire” (e.g., [+danger]) come from statistical knowledge or perceptual patterns, with lower strength, can be weighed.

·L2/L3: Value constraints come from embodied experience (simulated or real), with higher strength, harder to violate.

·L4: Value constraints come from social conventions (e.g., the cultural maxim “playing with fire gets you burned”), with normative authority.

This design transforms value embedding from “static rules” into “dynamic weighting” — the more embodied the experience, the more social the consensus, the harder to violate.

5.6 Transcending Saussure

Morpho-Root theory fundamentally transcends Saussurean linguistics in the following dimensions:

|

Dimension |

Saussurean Semiotics |

Morpho-Root Theory |

Transcendence |

|

Root of symbol |

Rooted in collective consciousness of linguistic community |

Rooted in nonsymbolic experience pointed to by attribute A |

Extends from social convention to embodied experience |

|

Source of meaning |

Internal differences within system |

Embedded attributes + differences |

Meaning is not merely relational but also intrinsic |

|

Relation to world |

Ultimately points to lifeworld (indirect) |

Directly grounded through A interfaces |

From indirect pointing to direct anchoring |

|

Computability |

Incomputable |

Formalizable as <S, A, R> |

From philosophical concept to engineering implementation |

|

Historicity |

Carries history of linguistic community |

Classical sources can be encoded in attributes [24] |

From implicit inheritance to explicit encoding |

|

Degree of grounding |

Single social convention |

Five-level grading (L0–L4) |

From binary to continuous, from single to multiple |

Core transcendence: Saussure revealed that the “root” of the symbol lies in the social conventions of the linguistic community; Morpho-Root theory, building on this, further provides multilevel anchoring for symbols through embodied grounding (L1–L3) and social grounding (L4). It does not negate Saussure but incorporates Saussure’s insight as L4 into a more complete grounding pedigree. Morpho-Root answers the core question Saussure left but did not solve: how can a symbol system both maintain a structure of differences and establish a direct, computable connection with the world?

5.7 Resonance Between Industrial Critique and Theoretical Construction: Lerchner and Logographic AI

Returning to the symbol grounding problem at the beginning of this chapter, we can more clearly see the deep resonance between Lerchner’s “abstraction fallacy” critique and Logographic AI theory.

First, it should be clarified that the problem domains of Lerchner and Logographic AI are not identical. As noted in Section 2.4, Lerchner’s domain is “consciousness” — he asks “can AI instantiate experience”; Logographic AI’s domain is “meaning” — it asks “how symbols ground.” A conscious system may lack meaning grounding (e.g., a philosophical zombie), and a system with meaning grounding may not be conscious. The two are ontologically separable.

However, despite the different problem domains, Lerchner’s critique and Logographic AI’s construction resonate at the following level: both reveal the closure of symbolic computation. Lerchner points out that symbolic computation is merely “map” rather than “territory”; algorithms can simulate behavior but cannot instantiate experience — revealing the ontological gap between symbol systems and the real world. Logographic AI points out that Tokenist symbol systems are “meaning echo chambers” — the meaning of symbols is entirely defined by statistical cooccurrence, unable to reach the “signified” outside the symbol. Starting from different problem domains, they converge on the same diagnosis: symbolic computation, if confined to internal differences (statistical associations) within the system, cannot connect with the real world.

Lerchner’s solution is that consciousness depends on physical constitution rather than syntactic architecture, meaning that instantiating consciousness requires changing the physical substrate. Logographic AI’s solution is to achieve “meaning grounding” for symbols through architectural design improvements (attribute A pointing to nonsymbolic experience), without committing to solving the consciousness problem. Thus, Logographic AI is not a direct implementation of Lerchner’s proposal, but provides a constructive proposal in an adjacent but different problem domain — how meaning grounds — that remains missing after Lerchner’s critique.

It is precisely this relationship of “different problem domains, resonant diagnosis” that makes Lerchner’s paper an important external corroboration for Logographic AI theory. It is not evidence that “DeepMind internally supports Logographic AI” (Lerchner himself may not endorse the Morpho-Root theory), but rather “a critique from inside the industry independently reveals the fundamental limitations of symbolic computation, thereby legitimizing the exploration of alternative paradigms.” This relay of “critique and construction” is precisely the core value of Philosophy of AI Technology as an independent discipline: it is not merely external reflection, but philosophical construction growing from within industrial practice.

5.8 Operationalizing “Understanding”: From Binary to Graded

This paper criticizes Tokenist AI for “not understanding” while claiming that the Morpho-Root paradigm can “understand.” However, “understanding” is not a binary concept. To avoid conceptual vagueness, this section operationalizes “understanding” into four evaluable levels:

Level 1: Semantic understanding (symbol-symbol mapping). The system can correctly traverse the Morpho-Root network, deriving output Morpho-Roots from input Morpho-Roots, with each step traceable. This is a capability naturally possessed by the Morpho-Root paradigm at the architectural level. Although Tokenist AI can functionally perform similar tasks, its reasoning process is not traceable, belonging to “black-box semantic understanding.”

Level 2: Causal understanding (symbol-causal model). The system can make correct predictions in counterfactual scenarios. For example, given “if the [+inviolable] attribute of ‘trust’ is violated, how will the system state change?” the system can reason based on preset causal connections in the relation function R (e.g., implies(trust, person∧speech)). Tokenist AI lacks a causal model and cannot reliably perform such tasks [23].

Level 3: Embodied understanding (symbol-sensorimotor experience). The system anchors symbols to sensorimotor experience through realtime interaction with the environment (L2/L3 grounding). Tokenist AI can obtain a weak version of L1 grounding through multimodal training, but its grounding is indirect, fragile, and unauditable.

Level 4: Social understanding (symbol-social convention). The system forms stable referential conventions by participating in the social practice of a linguistic community (L4 grounding). Tokenist AI can obtain a weak version of social grounding through RLHF, but its social conventions are externally attached and easily washed away.

Therefore, the accurate formulation of “Morpho-Root AI understands while Tokenist AI does not” is: Morpho-Root AI is superior to Tokenist AI in traceability of semantic understanding, reliability of causal understanding, and depth of grounding in embodied and social understanding. Understanding is a matter of degree, not a binary judgment.

6. Ontological Contributions of Logographic AI Theory