AI Agent 管理术:2026 年四种子代理架构模式

去年我认为:把任务拆分给专注的 Agent,比让一个通用 Agent 包揽一切更可靠。那时候能做的基本就是”调用-返回”这一种范式。

一年过去,情况变了。更好的任务规划和工具调用能力,让两种以前想都不敢想的模式变成了现实——持久化工作池,和 Agent 到 Agent 的直接通信。

现在,一个主 Agent 可以像一个项目经理那样工作:分派任务、收集结果、在专家之间传递信息、甚至拉起一支自治团队,然后自己去喝咖啡。

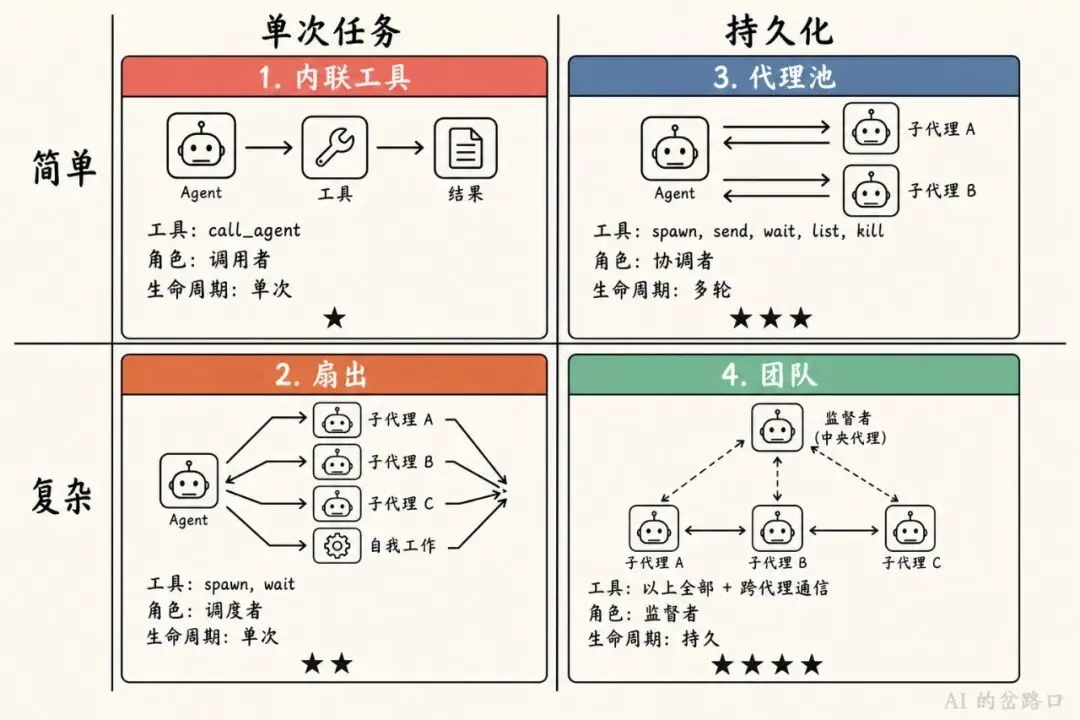

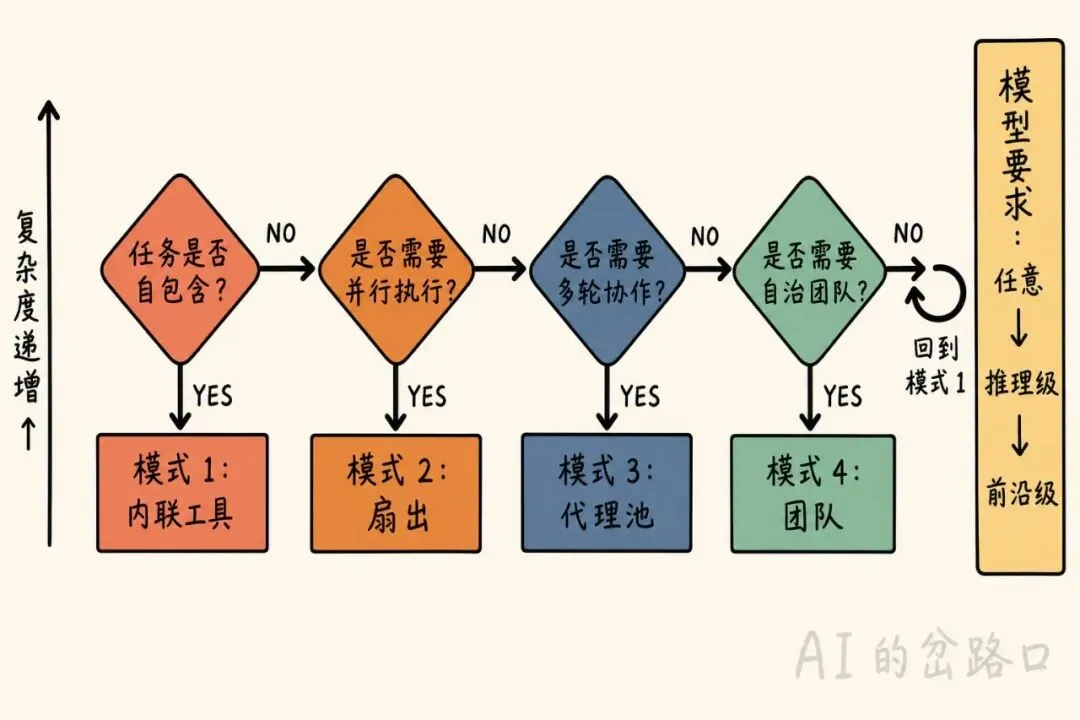

下面这四种模式,按主 Agent 对于子代理生命周期的控制力度从强到弱排列。越往后,子代理越”独立”。

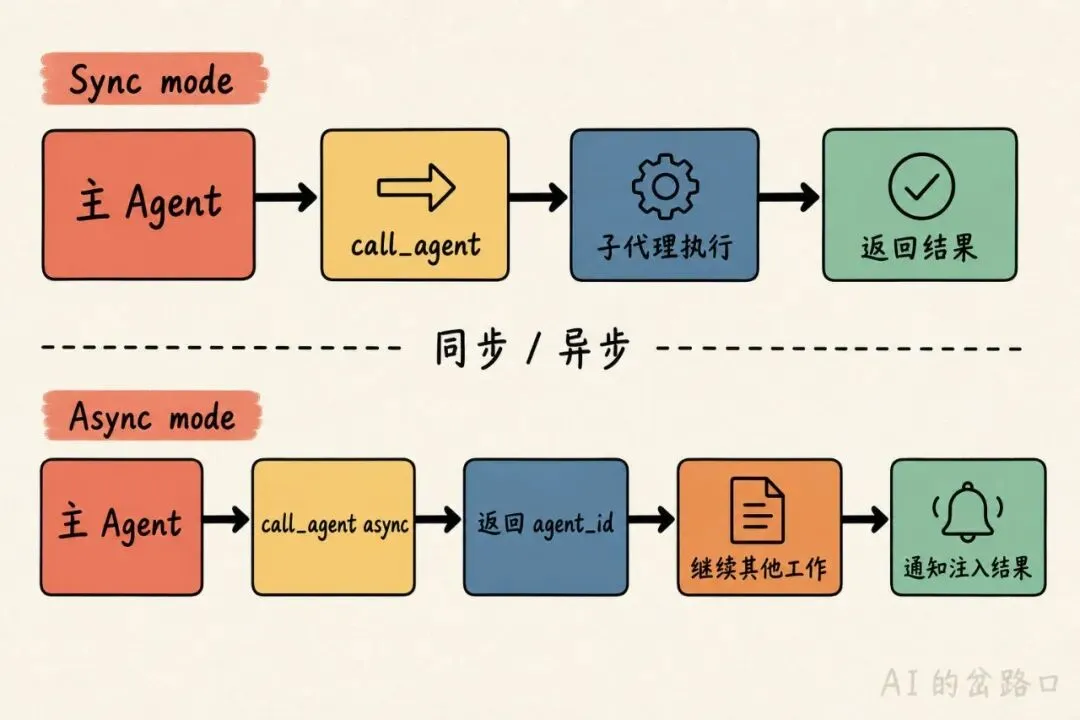

这是最基础的模式。主 Agent 调用一个叫 call_agent 的工具,传入任务描述,等它返回结果。对主 Agent 来说,这个工具和 read_file 没什么区别——就是调一下,拿到返回值,继续往下走。

[

{ "role": "user", "content": "Review PR #42 for security issues" },

{ "role": "assistant", "tool_calls": [

{ "name": "call_agent", "arguments": { "task": "Review PR #42 for security issues. Focus on auth changes.", "agent": "security-reviewer", "tools": ["read_file", "search_code"] } }

]},

{ "role": "tool", "name": "call_agent", "content": "Found 2 issues: 1) Auth token not validated in /api/users endpoint. 2) Session cookie missing HttpOnly flag in login handler." },

{ "role": "assistant", "content": "The review found 2 security issues..." }

]它支持同步和异步两种调用方式:

- • 同步:工具阻塞,子代理跑完才返回结果。简单直接。

- • 异步:工具立刻返回一个 agent_id,主 Agent 可以先去干别的事。等子代理完成后,结果会以一条注入消息的形式出现在对话里。

[

{ "role": "user", "content": "Review PR #42 and also check the CI logs" },

{ "role": "assistant", "tool_calls": [

{ "name": "call_agent", "arguments": { "task": "Review PR #42 for security issues", "agent": "security-reviewer", "background": true } },

{ "name": "read_file", "arguments": { "path": "ci/logs/latest.txt" } }

]},

{ "role": "tool", "name": "call_agent", "content": "agent_id: agent-x1. Running in background." },

{ "role": "tool", "name": "read_file", "content": "Build passed. 142 tests green." },

{ "role": "assistant", "content": "CI looks clean. Waiting for the security review..." },

{ "role": "user", "content": "<notification agent_id='agent-x1' status='completed'>Found 2 issues: ...</notification>" },

{ "role": "assistant", "content": "The security review found 2 issues..." }

]什么时候用?自包含的任务——代码审查、文件分析、研究查询、测试生成。绝大多数子代理场景用这个就够了。

有什么坑?它不支持中途干预。任务发出去了,就只能等结果。如果子代理理解偏了,你要拿到返回值才发现。不能追问、不能看进度、不能提前取消。好消息是它对模型要求很低,支持 tool call 的小模型也能用。

2. 扇出:一把撒出去,再一把收回来

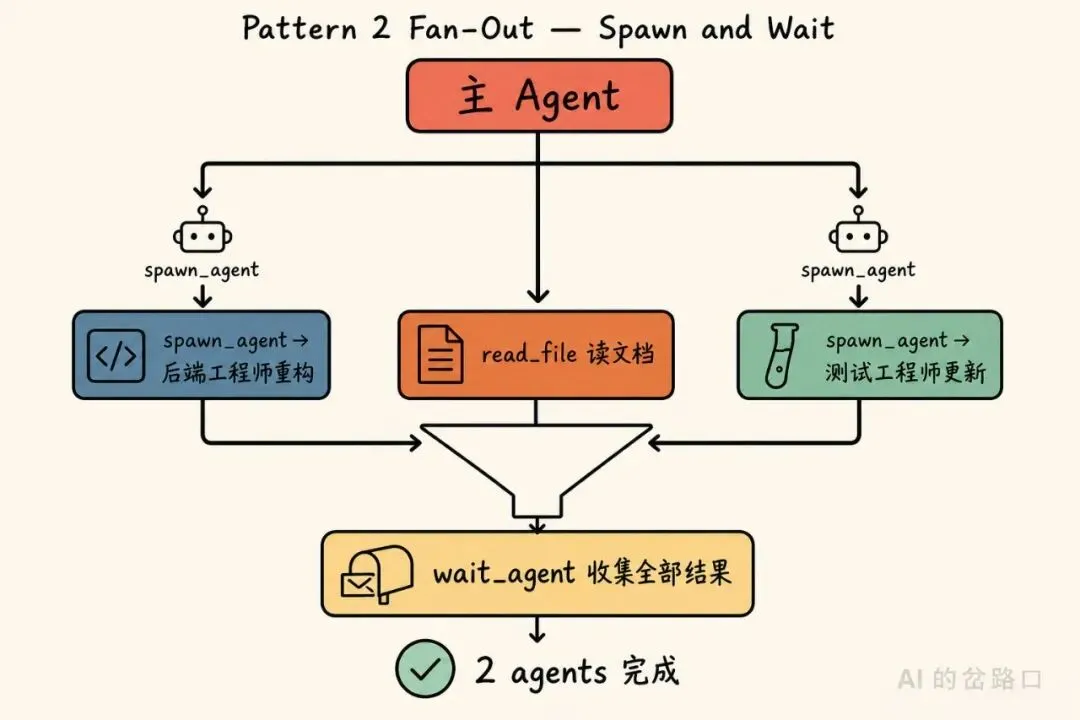

这个模式把”派发”和”收集”拆成了两个独立的动作。spawn_agent 负责派发,立刻返回一个 ID;wait_agent 负责收结果,阻塞到有一个或多个代理跑完。

有意思的地方在于中间这段时间——模型可以穿插自己的操作,不必傻等着。

[

{ "role": "user", "content": "Refactor the auth module and update all related tests" },

{ "role": "assistant", "tool_calls": [

{ "name": "spawn_agent", "arguments": { "task": "Refactor the auth module to use JWT tokens", "agent": "backend-engineer" } },

{ "name": "spawn_agent", "arguments": { "task": "Update all auth-related tests for the new JWT flow", "agent": "test-writer" } },

{ "name": "read_file", "arguments": { "path": "docs/auth-spec.md" } }

]},

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-a (backend-engineer). Running." },

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-b (test-writer). Running." },

{ "role": "tool", "name": "read_file", "content": "# Auth Spec\nJWT tokens with RS256..." },

{ "role": "assistant", "content": "I've kicked off both tasks. The auth spec confirms we should use RS256." },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": { "timeout": 300 } }]},

{ "role": "tool", "name": "wait_agent", "content": "2 agents completed:\n- agent-a: Refactored 3 files (auth.ts, middleware.ts, config.ts)\n- agent-b: Updated 12 tests, all passing" },

{ "role": "assistant", "content": "Both tasks are done. The auth module now uses JWT..." }

]注意这里的时序:主 Agent 先同时派发了两个任务,然后自己读了 auth spec 文档,最后才调用 wait_agent 收结果。它不是”派一个等一个”,而是”全派出去,干点自己的活,再统一收”。

什么时候用?多个互不依赖的独立任务,可以并行跑。主 Agent 不需要 A 的中间结果才能启动 B。

有什么坑?模型得有点判断力——知道什么时候该等,什么时候可以先干别的。如果模型每 spawn 一个就立刻 wait,那跟模式 1 的异步版一样,扇出就白搭了。另外仍然不能中途给子代理发纠正指令。结果是一次性批量拿回来的。

3. 代理池:有记忆的持久化 Agent

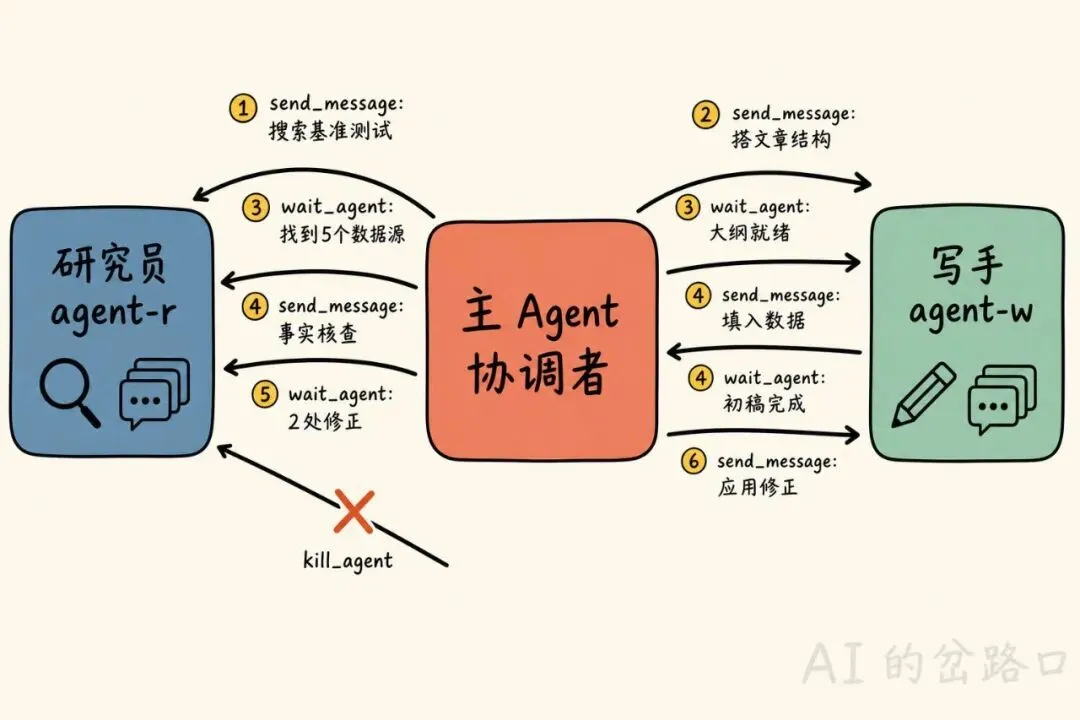

前两种模式里,子代理都是”一次性”的——接任务、跑完、消失。代理池模式把这个假设打破了:子代理是长期存活的,带着对话历史,主 Agent 可以反复跟它对话。

工具多了起来:spawn_agent、send_message、wait_agent、list_agents、kill_agent。主 Agent 的角色从”调用者”变成了”协调者”——它在多个专家之间传递信息,根据中间结果调整指令。

一个写博客的例子最能说清楚:

[

{ "role": "user", "content": "Write a blog post about WebAssembly performance" },

{ "role": "assistant", "tool_calls": [

{ "name": "spawn_agent", "arguments": { "role": "researcher", "tools": ["web_search", "read_url"] }},

{ "name": "spawn_agent", "arguments": { "role": "writer", "tools": ["write_file", "read_file"] }}

]},

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-r (researcher)" },

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-w (writer)" },

{ "role": "assistant", "tool_calls": [

{ "name": "send_message", "arguments": { "to": "agent-r", "message": "Research WebAssembly performance benchmarks. Find 5 sources with concrete numbers." } },

{ "name": "send_message", "arguments": { "to": "agent-w", "message": "Outline a blog post structure about WebAssembly performance. 4 sections." } }

]},

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-r" },

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-w" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": {} }]},

{ "role": "tool", "name": "wait_agent", "content": "agent-w responded: Outline ready: intro, benchmarks, real-world, conclusion" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": {} }]},

{ "role": "tool", "name": "wait_agent", "content": "agent-r responded: Found 5 sources: 1) Chrome benchmark shows 1.2x native speed..." },

{ "role": "assistant", "tool_calls": [{ "name": "send_message", "arguments": { "to": "agent-w", "message": "Fill in your outline using these sources: 1) Chrome benchmark shows 1.2x native speed..." } }]},

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-w" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": {} }]},

{ "role": "tool", "name": "wait_agent", "content": "agent-w responded: Draft complete. Title: 'WebAssembly Is Faster Than You Think'..." },

{ "role": "assistant", "tool_calls": [{ "name": "send_message", "arguments": { "to": "agent-r", "message": "Fact-check this draft against your sources: 'WebAssembly Is Faster Than You Think'..." } }]},

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-r" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": {} }]},

{ "role": "tool", "name": "wait_agent", "content": "agent-r responded: 2 corrections: paragraph 3 cites 2x speed but source says 1.5x..." },

{ "role": "assistant", "tool_calls": [

{ "name": "send_message", "arguments": { "to": "agent-w", "message": "Apply these corrections: paragraph 3 should say 1.5x not 2x..." } },

{ "name": "kill_agent", "arguments": { "agent_id": "agent-r" }}

]},

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-w" },

{ "role": "tool", "name": "kill_agent", "content": "agent-r terminated" },

{ "role": "assistant", "content": "Here is the final post..." }

]主 Agent 先 spawn 出研究员和写手,然后给两个都发了第一条消息——研究员去搜基准测试数据,写手去搭文章结构。它用 wait_agent 逐条收回复,拿到研究员的数据后转给写手,拿到初稿后又派研究员去核实。最后研究员完成事实核查后,主 Agent 把修正指令发给写手,顺手把研究员 kill_agent 关掉。

整个流程中,研究员和写手各自保持着完整的对话历史,主 Agent 在中间做信息路由和决策。

什么时候用?需要多个专家来回协作的多步骤任务。主 Agent 根据中间产出动态调整后续指令。

有什么坑?对主 Agent 的要求明显提高。它得同时记住多个代理的状态——谁在处理什么、谁在等谁、上下文是什么。小模型很快就会搞混,或者忘记在不用的时候 kill_agent。前沿模型大概能 hold 住 2 到 4 个代理。

4. 团队:Agent 自己跟自己聊

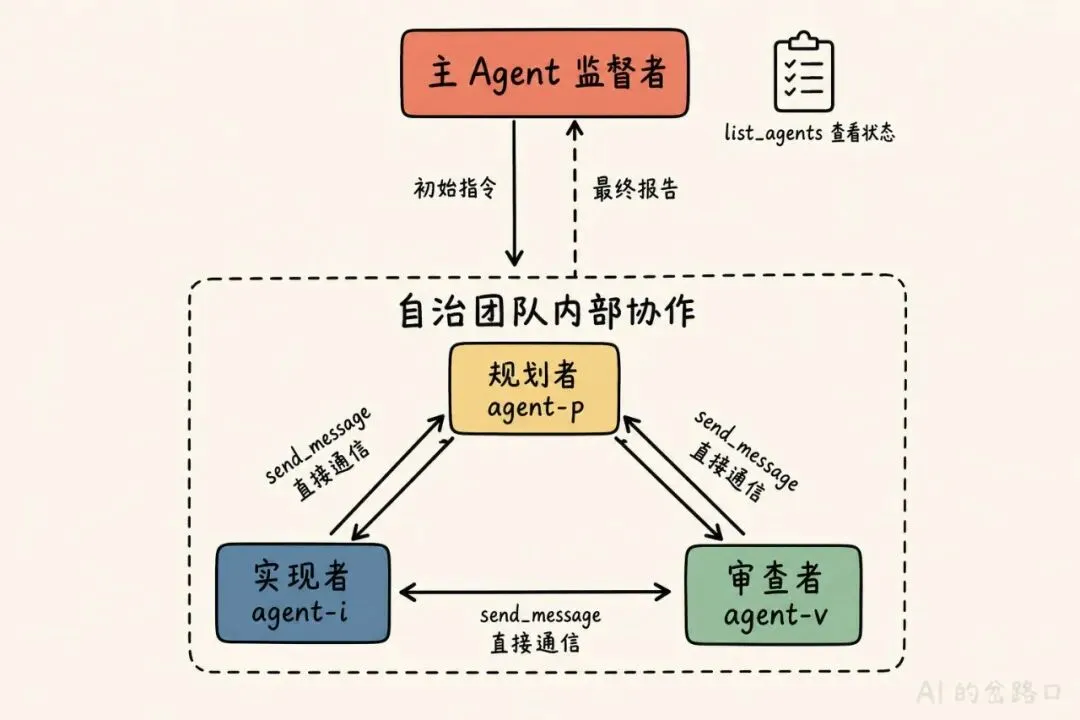

这是控制力最弱、子代理自主性最强的模式。主 Agent 搭好团队就退到幕后,Agent 之间通过消息直接协调。主 Agent 只等最终报告,或者隔一会儿用 list_agents 瞄一眼进度。

关键在于,每个子代理的工具集中都有 send_message,可以直接向其他代理发消息——不需要主 Agent 中转。

从主 Agent 的视角看,整个过程极其简洁:

[

{ "role": "user", "content": "Build feature X" },

{ "role": "assistant", "tool_calls": [

{ "name": "spawn_agent", "arguments": { "role": "planner", "tools": ["read_file", "search_code", "send_message"] } },

{ "name": "spawn_agent", "arguments": { "role": "implementer", "tools": ["read_file", "write_file", "run_command", "send_message"] } },

{ "name": "spawn_agent", "arguments": { "role": "reviewer", "tools": ["read_file", "search_code", "send_message"] } }

]},

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-p (planner)" },

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-i (implementer)" },

{ "role": "tool", "name": "spawn_agent", "content": "Spawned agent-v (reviewer)" },

{ "role": "assistant", "tool_calls": [{ "name": "send_message", "arguments": { "to": "agent-p", "message": "Plan and coordinate feature X. Your teammates: agent-i (implementer), agent-v (reviewer). Message them directly. Report back to me when done." } }]},

{ "role": "tool", "name": "send_message", "content": "Delivered to agent-p" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": { "timeout": 60 } }]},

{ "role": "tool", "name": "wait_agent", "content": "Timed out. No agents responded." },

{ "role": "assistant", "tool_calls": [{ "name": "list_agents", "arguments": {} }]},

{ "role": "tool", "name": "list_agents", "content": "agent-p: running, agent-i: running, agent-v: idle" },

{ "role": "assistant", "tool_calls": [{ "name": "wait_agent", "arguments": { "timeout": 120 } }]},

{ "role": "tool", "name": "wait_agent", "content": "agent-p responded: Feature X complete. 3 files changed, all tests passing. Summary: ..." },

{ "role": "assistant", "content": "Feature X is built. Here's what was done..." }

]主 Agent 做了三件事:创建了三个代理(规划者、实现者、审查者),给规划者发了一条消息说”你负责协调,你的队友是这两个,搞定了告诉我”,然后就开始等。中间它用 list_agents 看了看状态——都在干活——然后继续等,直到规划者发回完成报告。

Agent 之间怎么协作的?全部发生在它们自己的上下文里,主 Agent 看不见。寻址可以用层级路径、邮箱或进程间通信,取决于实现。

什么时候用?协作逻辑复杂到单个 Agent 逐步管理不过来的大型任务。

有什么坑?这个模式的门槛最高。团队里每个 Agent 都需要前沿级模型能力,不只是主 Agent。每个成员得自己判断什么时候向队友发消息、发什么、什么时候报告完成。很容易出问题——发给错的 Agent、忘了报告、陷入循环等待。基础设施层面的麻烦也不少:循环检测(A 等 B,B 又等 A)、冲突解决(两个 Agent 改了同一个文件)、关闭协调。出问题时调试极其痛苦,消息链很难追踪,故障会级联。

怎么选?

一张表总结:

| 模式 | 核心工具 | 主 Agent 角色 | 子代理生命周期 |

|---|---|---|---|

| 内联工具 | call_agent | 调用者 | 单次任务 |

| 扇出 | spawn_agent, wait_agent | 调度者 | 单次任务 |

| 代理池 | spawn, send, wait, list, kill | 协调者 | 多轮对话 |

| 团队 | 以上全部 + 跨代理 send_message | 监督者 | 持久存活 |

我的建议很简单:从模式 1 开始。大多数任务根本不需要复杂的编排。只有当确实需要并行(模式 2)、多步骤协作(模式 3)或自治团队(模式 4)时,再往上走。

还有一个硬约束——模型能力。模式 1 对模型几乎没要求。模式 2 需要模型能判断等待时机。模式 3 要求跟踪多轮状态。模式 4 要求所有参与者都是前沿模型,少一个都不行。

夜雨聆风

夜雨聆风