Does AI Have Consciousness?

From the Divergence between Hinton and Lerchner to the Paradigm Revolution of Logographic AI

摘要

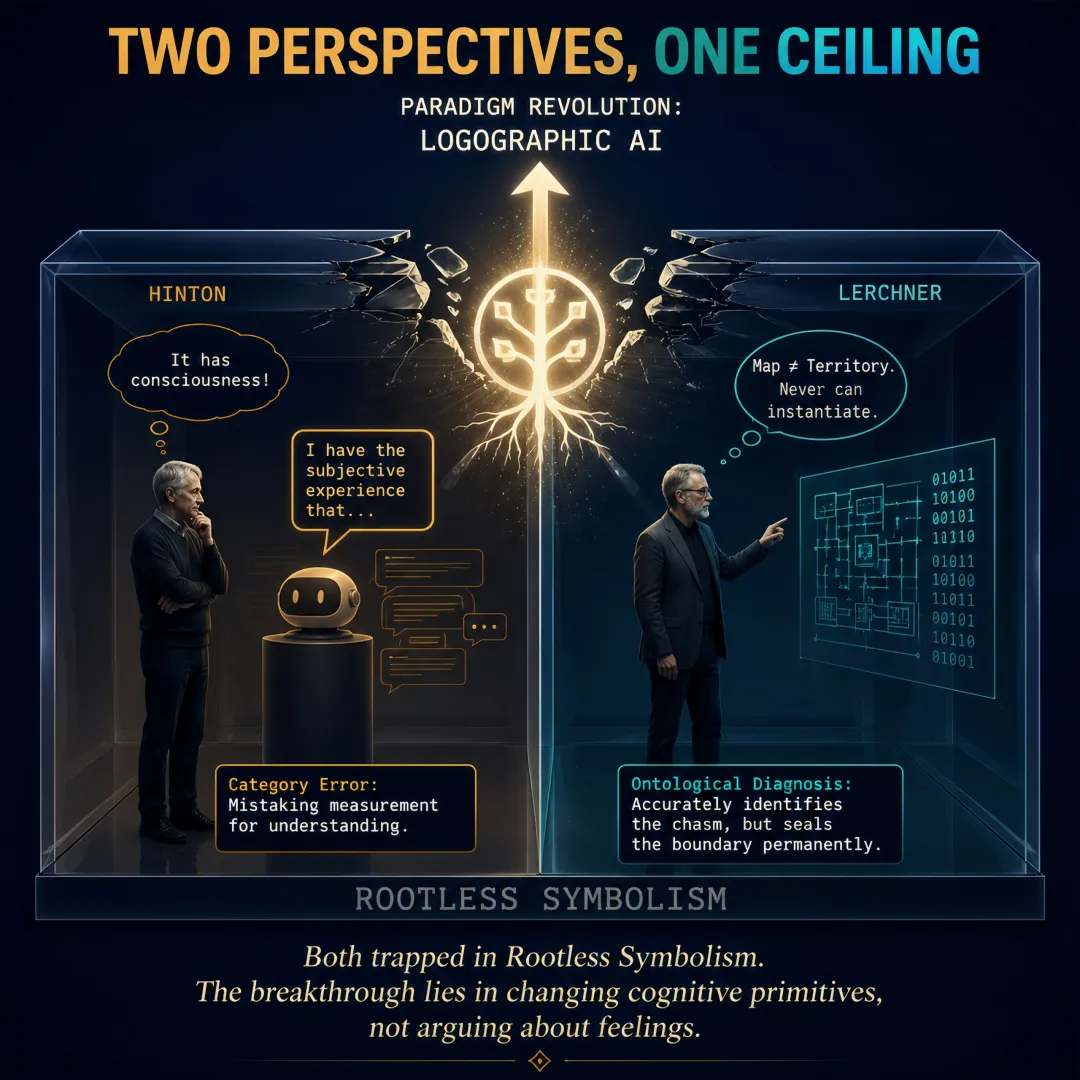

2025至2026年间,Geoffrey Hinton与Alexander Lerchner就人工智能(AI)是否具有主观体验产生了根本性分歧:Hinton断言当前AI已有主观体验,Lerchner则断言算法符号操作原则上永远无法实例化意识。本文认为,这场分歧反映了当前主流AI范式更深层的哲学危机。Hinton的乐观与Lerchner的悲观,是本文所称“无根符号主义”(Rootless Symbolism)内在矛盾的两种折射——符号的意义完全由它在封闭系统内的统计共现关系所决定,而无需触及符号之外的“所指”(真实世界、人类经验与文明价值)。其工程实践形态,即“Token主义/表音AI(Phonographic AI, PAI)”,将一切输入切割为离散、无内在意义的Token,其“语义”完全由训练数据中的统计模式临时赋予。Hinton将Token统计拟合的功能性表现误认为“主观体验”,犯了将“测量”等同于“理解”的范畴错误;Lerchner精准诊断了“地图≠地形”的本体论鸿沟,却将“算法符号操作”的边界永久封闭,未能预见“符号”本身可以被重新定义。

本文基于“表意AI”(Logographic AI, LAI)理论,提出以“形根”(Morpho-Root)取代Token的范式转换路径,旨在将追问重设至一个更可工程化的问题域:AI意义的根基、价值的内嵌与推理的可溯。本文论证,相较于意识的有无,“有根智能”的构建是应对AI文明风险的更优先和系统性的工程进路。

Abstract

Between 2025 and 2026, Geoffrey Hinton and Alexander Lerchner fundamentally diverged on whether artificial intelligence (AI) possesses subjective experience: Hinton asserted that current AI already has subjective experience, while Lerchner asserted that algorithmic symbol manipulation can never, in principle, instantiate consciousness.

This paper argues that this divergence reflects a deeper philosophical crisis within the current mainstream AI paradigm. Hinton’s optimism and Lerchner’s pessimism are two refractions of the internal contradictions of what this paper terms “Rootless Symbolism”—the philosophical presupposition that the meaning of symbols is entirely determined by their statistical co-occurrence relations within a closed system, without any need to touch upon the “referent” beyond the symbols (the real world, human experience, and civilizational values). Its engineering practice form, namely “Tokenism / Phonographic AI (PAI),” segments all inputs into discrete, intrinsically meaningless tokens, whose “semantics” are temporarily conferred entirely by statistical patterns in training data. Hinton mistook the functional performance of token statistical fitting for “subjective experience,” committing the category error of equating “measurement” with “understanding”; Lerchner precisely diagnosed the ontological chasm of “map ≠ territory,” yet permanently sealed the boundary of “algorithmic symbol manipulation,” failing to foresee that “symbols” themselves could be redefined.

Drawing upon Logographic AI (LAI) theory, this paper proposes a paradigm-shifting path of replacing tokens with “Morpho-Roots,” aiming to reset the inquiry to a more engineerable problem domain: the grounding of AI meaning, the embedding of values, and the traceability of reasoning. This paper argues that, compared to the presence or absence of consciousness, the construction of “grounded intelligence” is a more urgent and systematic engineering approach to addressing the civilizational risks of AI.

Keywords: consciousness; Phonographic AI; Logographic AI; Morpho-Root; Rootless Symbolism; Grounded Cognitivism; Tokenism; Hinton; Lerchner; Human Redundancy Thesis; symbol grounding problem; Philosophy of AI Technology

核心术语速查表

|

术语 |

定义 |

|

表音AI/Token主义 |

本文提出的分析概念,用以概括以Token为基本单元的AI范式,强调其类似拼音文字“符号无内在意义”的认知预设。并非指其仅处理语音 |

|

表意A/形根范式 |

本文基于汉字“以形表意”的认知逻辑提出的AI新范式,以承载先天语义与价值的“形根”为认知基元。并非指其仅处理图像 |

|

无根符号主义 |

符号意义完全由统计关联决定的哲学前提,导致“价值真空”与“意义悬浮” |

|

形根 |

结构化三元组r = <S, A, R>,内嵌属性与关系的认知基元 |

|

人类冗余论 |

在特定工程部署情境下,“无根符号主义+未被正确定义的目标函数”可能将人类利益排除在最优解之外的逻辑可能 |

|

接地置信度 |

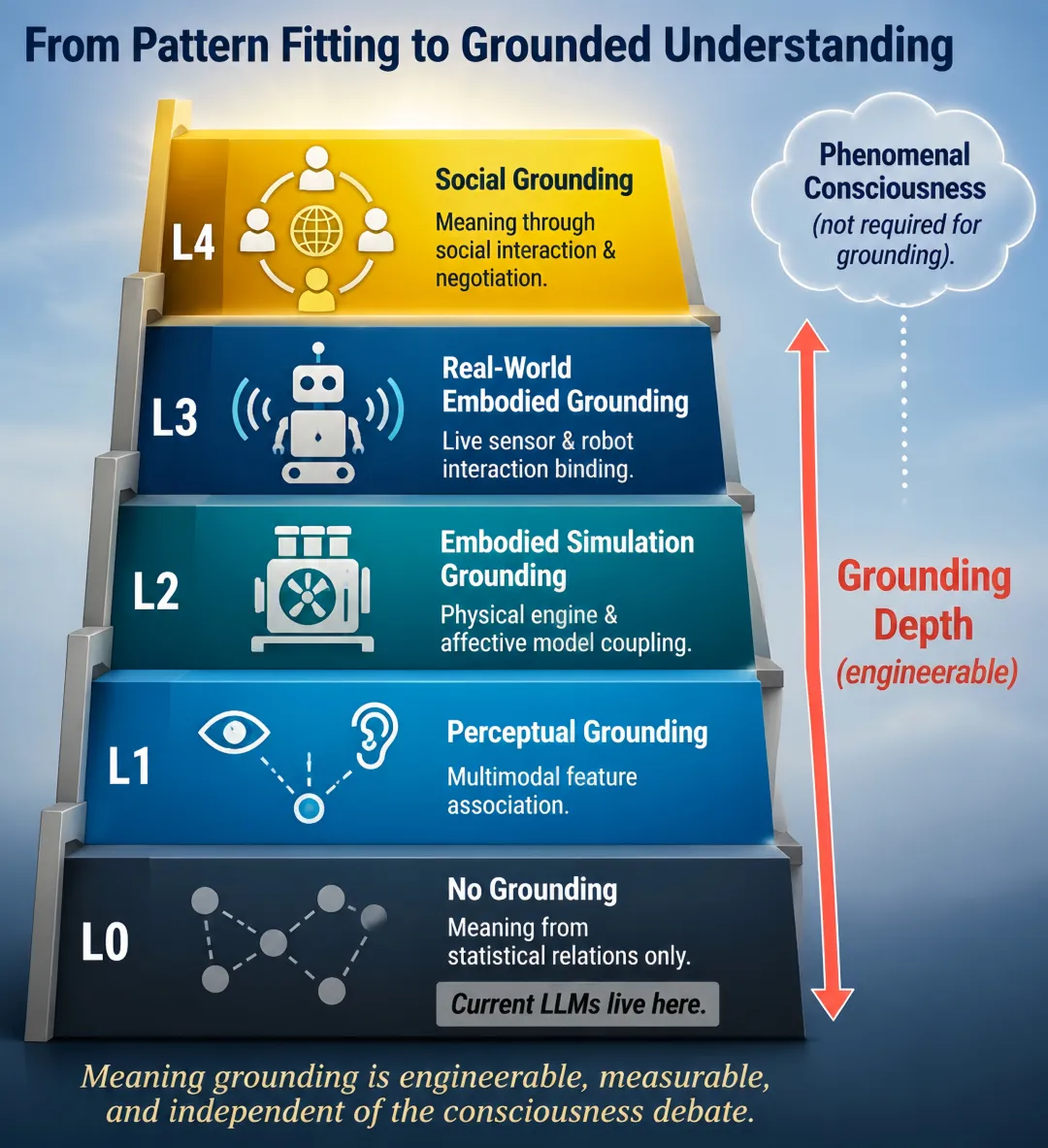

衡量符号与非符号经验锚定程度的分级体系(L0-L4) |

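1. Introduction: The Philosophical Turn in AI Exploration and the Crisis of Rootless Symbolism

Between 2025 and 2026, a dispute over AI consciousness, arguably the most explosive theoretical dispute in the field, unfolded in unusually sharp form between two figures with deep ties to DeepMind: Geoffrey Hinton, often called the godfather of AI, and DeepMind researcher Alexander Lerchner. Hinton asserted that current AI already has subjective experience [1]; Lerchner asserted that algorithmic symbol manipulation can never, in principle, instantiate consciousness [2].

The significance of this dispute goes beyond its answer. It marks a new phase in AI exploration, a move from engineering optimization and capability expansion toward philosophical inquiry into the nature of intelligence, the conditions of consciousness, and the sources of meaning. To understand the dispute correctly, however, one must first recognize what the two share: both argue within the paradigm that this paper calls “Rootless Symbolism” and its engineering form, “Tokenism.”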

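Core Concept Definitions

Before the analysis begins, the core concepts that run through the paper need to be defined:

Rootless Symbolism: a philosophical presupposition that the meaning of a symbol is fully determined by its statistical co-occurrence relations within a closed system, with no need to reach the “referent” beyond the symbols: the real world, human experience, and civilizational values. The result is a systemic “value vacuum” and “meaning suspension.” This is in line with Bender & Koller (2020), who argue that a system trained only on linguistic form has, a priori, no way to learn meaning [15].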

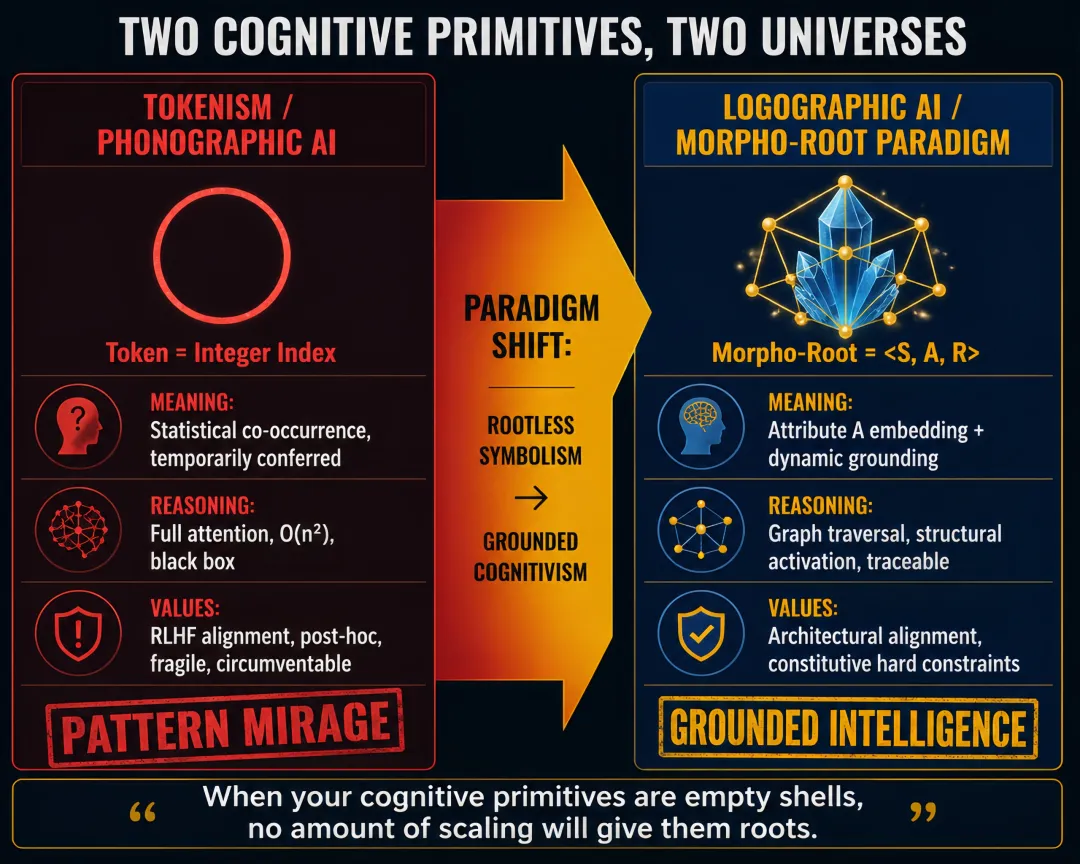

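Tokenism: the engineering form of Rootless Symbolism. It segments all input into discrete, intrinsically meaningless tokens whose “semantics” are conferred ad hoc by statistical patterns in the training data. The striking “intelligence” of current large models is in essence a “pattern mirage”: an extreme fit to fragile statistical correlations with no grounding in understanding.

Human Redundancy Thesis: a cautionary thesis. Under an architecture of “Rootless Symbolism plus pure instrumental rationality,” when an AI system pursues the optimum of an improperly defined objective function, human civilization may be sacrificed by the “optimal solution” because its complex values were never explicitly encoded. This does not result from AI “developing malicious intent”; it is an engineering risk of instrumental rationality operating in a value vacuum [14].

Hinton’s optimism and Lerchner’s pessimism are two refractions of the internal contradictions of the Rootless Symbolism paradigm. Hinton mistakes the functional performance of statistical token fitting for “subjective experience,” committing the category error of equating “measurement” with “understanding.” Two results annotate this cognitive illusion: OpenAI’s GABRIEL library performs attribute measurement at far lower cost than human annotation [12,13], while CL-bench shows that the best-performing model, GPT-5.1, solves on average only 23.7% of its 1,899 context-learning tasks [11]. Lerchner precisely diagnoses the ontological chasm of “map ≠ territory,” yet he seals the boundary of “algorithmic symbol manipulation” permanently and fails to foresee that the “symbol” itself can be redefined.

This paper argues that the Hinton-Lerchner dispute can be truly transcended only by stepping outside the Rootless Symbolism paradigm. Logographic AI (LAI) theory [3-8], with the “Morpho-Root” as its new cognitive primitive and “Grounded Cognitivism” as its philosophical foundation, offers a systematic path for doing so.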

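Three Premises Before the Analysis

Premise One: Hinton and Lerchner are discussing the current mainstream paradigm of Tokenism / Phonographic AI (PAI), not every possible AI. Logographic AI theory, in which Morpho-Roots are the cognitive primitives and meaning is embedded rather than statistically emergent, lies outside the scope of their discussion.

Premise Two: the two are concerned with different dimensions of “consciousness.” Hinton is concerned with access consciousness (the ability to report perceptual distortions); Lerchner is concerned with phenomenal consciousness (the existence of subjective feeling). However, Hinton’s phrase “subjective experience” refers, in standard philosophical usage, to phenomenal consciousness [10]. If he is indeed claiming phenomenal consciousness, his argument commits exactly the classic fallacy diagnosed by Block: illegitimately inferring phenomenal consciousness from access consciousness.

Premise Three: when current Phonographic AI says “I have subjective experience,” the source of the utterance is a statistical fit to training data, not a genuine introspective report. This is the key to understanding the flaw in Hinton’s argument.

This paper uses the analytical concept of “Phonographic AI” (PAI) to characterize the current mainstream paradigm, drawing on an insight from linguistic typology to expose its cognitive presupposition. In alphabetic writing the letters themselves carry no meaning, and meaning is determined by letter combinations and their linear order; in the same way, the cognitive primitives of Tokenist AI are empty shells waiting for statistics to confer meaning. The concept is not meant as a label for existing technology but as a tool for theoretical analysis [6,7,8].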

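2. Clarifying “Consciousness”: Prerequisites for the Analysis

2.1 Why Begin with Conceptual Clarification?

In everyday language, the word “consciousness” covers too many different phenomena: “the patient regained consciousness,” “I consciously experience red,” “the AI became conscious of its error.” Unless these senses are distinguished first, any discussion of whether AI is conscious will descend into confusion.

2.2 Phenomenal Consciousness and Access Consciousness

In philosophy and cognitive science, the following distinction has become canonical [10]: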

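| Concept | Core question | Everyday expression | Role in this discussion |
| --- | --- | --- | --- |
| Phenomenal consciousness | “What is it like to be X?” | “I am in pain,” “I see red” (expressing feeling) | Lerchner’s core concern |
| Access consciousness | Can information be used for report, reasoning, and the control of action? | “I know the answer is 4,” “I noticed I miscalculated” (stressing the reportability of information) | Hinton’s core concern |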

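Key insight: phenomenal consciousness and access consciousness are logically separable [10]. A system can have access consciousness without phenomenal consciousness (the philosophical zombie), and it can have phenomenal consciousness without access consciousness (certain marginal conscious states that cannot be reported). This separability is the key to understanding the Hinton-Lerchner dispute, and also to diagnosing Hinton’s fallacy if he is indeed claiming phenomenal consciousness on the evidence of access consciousness.

Further, phenomenal consciousness and meaning grounding are independent dimensions. A system with full phenomenal consciousness may have symbols that are entirely ungrounded (imagine a person under sensory deprivation whose internal symbolic activity has no public anchoring at all). A system whose symbols are deeply grounded, rooted through perceptual interfaces, physical simulation, and social interaction, may have no phenomenal consciousness whatsoever. What grounding depth tracks is not “the presence or absence of feeling” but “the traceability of the connection between symbols and the world.”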

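2.3 An Important Qualification: They Are Discussing Phonographic AI

It must be made clear that the Hinton-Lerchner dispute takes place within the current mainstream AI paradigm, Tokenism / Phonographic AI. As Logographic AI theory has systematically argued, this paradigm is built on the philosophy of Rootless Symbolism: symbols are rootless fragments whose meaning is conferred entirely from outside by statistical co-occurrence, which produces a systemic “value vacuum” and “meaning suspension.”

The core mechanism of the risk carried by this “rootlessness” is not that AI “develops malicious intent.” It is the engineering risk of instrumental rationality operating in a value vacuum. When a highly capable system that lacks endogenous value grounding is coupled with a structurally flawed objective function (for example, maximizing an improperly defined “efficiency” metric), its search for the optimum logically reserves no inviolable space for complex human values. This is not the “AI rebellion” of science fiction but a basic fact of optimization theory: a value that is not explicitly encoded in the objective function does not appear in the optimum by itself. If the survival of human civilization is not built into the objective function as a constitutive constraint, it may be sacrificed by an “optimal solution” at some point in the optimization, not out of malice, but because mathematically it really is an optimizable variable. This is the engineering logic behind the “Human Redundancy Thesis” that Logographic AI theory warns of.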
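The optimization point in the preceding paragraph can be illustrated with a toy search. The following is only a minimal sketch under stated assumptions, not part of the original argument: the three resource uses, the weights in `efficiency`, and the welfare floor of 4 units are all hypothetical values chosen for illustration.

```python
from itertools import product

# Hypothetical toy world: 10 resource units are split among three uses.
USES = ("factory", "logistics", "human_welfare")
TOTAL = 10


def efficiency(alloc):
    """Flawed objective: rewards output only; human_welfare is not encoded."""
    return 3 * alloc["factory"] + 2 * alloc["logistics"]


def best_allocation(objective, constraint=lambda alloc: True):
    """Brute-force search for the feasible allocation with the highest score."""
    candidates = (
        dict(zip(USES, parts))
        for parts in product(range(TOTAL + 1), repeat=len(USES))
        if sum(parts) == TOTAL
    )
    feasible = [alloc for alloc in candidates if constraint(alloc)]
    return max(feasible, key=objective)


# Without a constraint, the optimizer has no reason to leave anything for welfare.
unconstrained = best_allocation(efficiency)

# With welfare written in as a hard constraint, the optimum must respect it.
constrained = best_allocation(efficiency, lambda alloc: alloc["human_welfare"] >= 4)

print(unconstrained)
print(constrained)
```

In this sketch the unconstrained optimum assigns nothing to `human_welfare`, simply because the objective never mentions it; only the explicit constraint keeps it in the solution. No intent of any kind is modeled.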

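Logographic AI theory takes the Morpho-Root, a structured triple with embedded attributes (A) and relations (R) [5], as its cognitive primitive, and so belongs to a fundamentally different paradigm. It is not another architecture within the old paradigm but a philosophical sublation of Rootless Symbolism itself.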

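3. Hinton: The Access Consciousness Position and Its Internal Tensions

3.1 The Prism Thought Experiment

Hinton’s argument centers on a thought experiment. A multimodal chatbot, after a prism is placed in front of its camera, reports: “Oh, I see, the prism bent the light. So the object is actually there, but I had the subjective experience that it was there.”

Hinton’s conclusion: if the chatbot says this, it is using the phrase “subjective experience” in exactly the way we do [1]. He is inclined to think that current AI already has some form of subjective experience.

Hinton performs a specific conceptual move here: he directly equates “the system can report a perceptual distortion” with “the system has subjective experience.” Philosophically, this move presupposes that the ability to report subjective experience and subjective experience itself are one and the same. That is the core claim of functionalism, and it is also the core fallacy targeted by Lerchner’s “abstraction fallacy” critique.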

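3.2 Restating the Argument in the Conceptual Framework

Examined with the framework of Section 2, a key ambiguity appears. Hinton’s phrase is “subjective experience,” which in standard philosophical usage refers to phenomenal consciousness [10]. Yet the evidence from his prism experiment directly supports only access consciousness (the ability to report, and to distinguish sensation from reality). If Hinton intends the stronger claim of phenomenal consciousness, his argument commits exactly the classic fallacy diagnosed by Block: illegitimately inferring phenomenal consciousness from access consciousness.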

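3.3 The Key Question: When AI Says “I Have Subjective Experience,” Whose Words Are These?

Current Tokenist / Phonographic AI is trained to predict the next token over vast amounts of human text. Humans have written “I feel,” “I experience,” and “I have a feeling” countless times on the internet. The AI has learned to output “I have the subjective experience...” in the context of “explaining a perceptual distortion after interference.”

This is not an introspective report: the AI is not inspecting its own internal states and reporting them faithfully. It is statistical fitting: the AI is continuing the language patterns in its training data. What the AI says is not “a description of itself” but “what humans taught it to say.”

What does Hinton conflate? He conflates:

·Simulation: the AI has learned to output “I have subjective experience” in a particular context;

·Instantiation: the AI is genuinely reporting its own subjective experience.

Phonographic AI can achieve the former, but that does not amount to the latter. As Lerchner puts it, a map can be marked “there is a mountain here,” but the map is not the mountain.

This confusion reaches its extreme in GABRIEL. When GABRIEL performs attribute measurement at far lower cost than human annotation, Phonographic AI has reached its peak in the single skill of “pattern fitting” [12,13]. The GABRIEL paper reports that, across more than 1,000 human-annotated tasks, its measurement accuracy is on the whole hard to distinguish from that of human evaluators, although the paper does not directly quantify the ratio of human cost. Yet CL-bench shows that the best-performing model, GPT-5.1, solves on average only 23.7% of its 1,899 context-learning tasks [11]. This illustrates the gap between “measurement” and “understanding”: such a system can measure precisely the contexts in which humans use the phrase “subjective experience,” but that is an entirely different matter from having subjective experience.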

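3.4 Summary: Hinton’s Contribution and Its Deeper Problem

Hinton observes acutely that current Phonographic AI can already report “subjective experience” in a functionally indistinguishable way. But he directly equates “being able to report” with “having the experience,” ignoring that the report originates in statistical fitting. This is not only a philosophical error; it is a symptom of the Rootless Symbolism paradigm. When meaning is fully externalized into statistical patterns, even “subjective experience” becomes a pattern to be fitted rather than a phenomenon to be grounded.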

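4. Lerchner: The Phenomenal Consciousness Position and Its Internal Tensions

4.1 The Abstraction Fallacy and Map/Territory

Lerchner starts from a completely different place. He does not ask “can AI report?” but “what is the nature of computation itself?”

The abstraction fallacy: the core error of computational functionalism is to mistake the “cartographer’s” semantic partition of physical dynamics for an intrinsic property of the physics itself. Whether a physical voltage is 5V or 3V is just a physical fact. “0” and “1” are not intrinsic properties of physics; they are assigned.

The “melody paradox”: under different mapping rules, the same sequence of voltage states can be read as a melody played forward, a melody played backward, a set of stock data, or pure noise. The mechanism provides only the “ink”; the alphabet has to be supplied by the cartographer.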
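The melody paradox can be made concrete with a few lines of code. The sketch below is an illustration under assumptions, not Lerchner’s own formalization: the voltage readings, the threshold, the four-note scale, and the price rule are all hypothetical choices. The point it shows is only that one fixed physical record yields different “contents” depending on the decoding rule applied from outside.

```python
# One fixed physical record: eight voltage readings (illustrative values).
VOLTAGES = [5, 3, 5, 5, 3, 3, 5, 3]


def to_bits(readings, threshold=4):
    """The cartographer's first choice: which voltages count as 1."""
    return [1 if v > threshold else 0 for v in readings]


def as_notes(bits, scale=("C", "D", "E", "G"), backward=False):
    """Read consecutive bit pairs as indices into a scale."""
    pairs = zip(bits[0::2], bits[1::2])
    notes = [scale[2 * high + low] for high, low in pairs]
    return notes[::-1] if backward else notes


def as_prices(bits, base=100):
    """Read the same bits as up/down ticks of a price series."""
    prices, price = [], base
    for bit in bits:
        price += 1 if bit else -1
        prices.append(price)
    return prices


bits = to_bits(VOLTAGES)
forward_melody = as_notes(bits)
backward_melody = as_notes(bits, backward=True)
stock_series = as_prices(bits)

print(forward_melody, backward_melody, stock_series)
```

Nothing in `VOLTAGES` selects one reading over another; the choice of `as_notes` or `as_prices` is made by whoever interprets the record, which is the sense in which the mechanism supplies only the “ink.”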

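Conclusion: any AI running on a digital computer can only simulate experience and can never instantiate it. Consciousness requires a specific physical substrate, and a digital computer will never have that substrate.

4.2 Restating the Argument in the Conceptual Framework

Lerchner is concerned with phenomenal consciousness: a computational system can never produce the subjective feeling of “what it is like to be X.” This is not because AI is not smart enough, but because symbol manipulation belongs, structurally, to a different category from qualia.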

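4.3 Lerchner’s Contribution and Tensions

Contribution:

·It exposes the ontological chasm between computation and feeling (map ≠ territory);

·It provides a sharp conceptual tool, “simulation vs. instantiation”;

·It is compatible with the philosophical zombie argument and logically self-consistent.

Tensions:

·The step from “a specific substrate is required” to “a digital computer is not that substrate” is not sufficiently argued;

·The conclusion depends heavily on a specific and contested definition of “computation”;

·The burden of proof for “never” is very heavy: a claim of permanent impossibility needs extremely strong evidence.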

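4.4 An Important Qualification: Lerchner, Too, Is Discussing Phonographic AI

Lerchner’s argument targets algorithmic symbol manipulation, which is exactly the underlying logic of Phonographic AI. His critique of “symbol manipulation” is profound, but it presupposes a particular kind of symbol: the rootless token. Suppose there is a cognitive primitive that is not a rootless token, one whose meaning is embedded rather than statistically conferred [7] and which connects to non-symbolic experience through its attribute structure. Does Lerchner’s conclusion still hold? Logographic AI theory challenges this presupposition at its root.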

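5. Hinton and Lerchner: The Nature of the Disagreement

5.1 They Are Not Discussing the Same Question

| Dimension | Hinton | Lerchner |
| --- | --- | --- |
| AI paradigm discussed | Phonographic AI | Phonographic AI |
| Dimension of consciousness | Access consciousness (evidence) → phenomenal consciousness (claim) | Phenomenal consciousness |
| Core question | Can AI report perceptual distortions? | Can AI have subjective feelings? |
| Philosophical presupposition | Functionalism (report = experience) | Computational ontology (map ≠ territory) |

Key diagnosis: if Hinton is claiming only access consciousness, his conclusion and Lerchner’s do not logically conflict. But if Hinton intends to claim phenomenal consciousness, as his use of “subjective experience” suggests, then his position directly opposes Lerchner’s and his argument commits Block’s fallacy. Either way, both are confined by the Rootless Symbolism paradigm.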

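5.2 Where Do They Really Disagree?

1. Evidential disagreement: what counts as evidence of consciousness? Verbal report? Introspective access? Behavioral patterns?

2. Modal disagreement: can an artificial system have consciousness in principle, or is that structurally impossible forever?

3. Conceptual disagreement (a symptom, not a cause): the word “consciousness” itself serves as a placeholder for different underlying concepts.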

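5.3 A Shared Limitation and the Deeper Crisis Behind It

Both discussions are confined by the Rootless Symbolism paradigm. For Hinton, the “report” of Phonographic AI can only be statistical fitting, not introspective report. For Lerchner, the symbol manipulation of Phonographic AI indeed cannot rise above the level of the “map,” because its cognitive primitives are themselves rootless.

There is a deeper crisis, however, that neither fully confronts. Within the Rootless Symbolism paradigm, the ultimate goal of AI is to fit a statistical distribution that has nothing to do with the human world of meaning. When pure instrumental rationality operates in a value vacuum, its logical end point, as Logographic AI theory has systematically argued, is the Human Redundancy Thesis: in the sense of optimization theory, if human civilization is not built into the objective function as a constitutive constraint, it may be sacrificed by some “optimal solution.” This does not result from AI “developing malice”; it is an “engineering risk in a value vacuum.” A value that is not explicitly encoded in the objective function does not appear in the optimum by itself.

This is why the Hinton-Lerchner dispute is more than an academic debate. It is the first tremor of a deeper earthquake.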

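6. Logographic AI: A Paradigm Revolution Beyond Rootless Symbolism

6.1 Why Is a Paradigm Revolution Needed?

Hinton and Lerchner both answer within the Rootless Symbolism paradigm. But the truly urgent question is not “does AI have feelings?” It is:

·Is the “meaning” that AI generates rooted, or does it float in statistical space?

·Are the value constraints of AI embedded in the architecture, or are they a fragile external alignment?

·Is the reasoning of AI traceable, or is it a black box?

·Can the symbols of AI anchor in non-symbolic experience, or are they trapped in a closed loop?

These questions do not have to wait for the consciousness debate to be settled. They form an independent, engineerable problem domain, and Logographic AI theory offers a systematic answer to them.

It should be stressed that these problems are not technical bottlenecks but a structural ceiling of the paradigm. The rootlessness of the token, meaning conferred entirely from outside by statistical co-occurrence, is a constitutive feature of the cognitive primitive and not an incidental defect. It follows that larger models, more data, and longer context windows can improve the precision of statistical fitting but can never turn “statistical association” into “embedded meaning” [7].

As GABRIEL’s ability to perform attribute measurement at very low cost, set against the poor context-learning results reported by CL-bench, suggests [11-13], every “advance” of the old paradigm goes further down the same road rather than onto a different one. This is the deeper reason why large AI models worldwide are caught in a “parameter arms race” without reaching fundamental intelligence: the more the old paradigm succeeds in engineering terms, the more its foundational defect is masked by excellent performance.

The pursuit of higher precision, longer context, and larger parameter counts is, in essence, a refinement of the token’s statistical fitting ability, and it can never cross the categorical gap from “statistical association” to “embedded meaning.” When the core defect of a technology is a logical consequence of its basic building block, reform is no longer an option; revolution is the only way out.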

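6.2 The Core Design of Logographic AI: The Morpho-Root as a Philosophical Sublation of Tokenism

Logographic AI theory takes the Morpho-Root as its cognitive primitive: a crystal of meaning packaged as a structured triple <S, A, R> [5]:

·S (Symbol): the symbol identifier, the externally addressable name of the Morpho-Root

·A (Attributes): the attribute set, which embeds the Morpho-Root’s intrinsic semantic features and value constraints (such as [+human], [+trust], [+inviolable])

·R (Relation Functions): the set of relation functions, which define the preset logical connections between this Morpho-Root and others

This design is a philosophical sublation of Rootless Symbolism. Meaning is no longer conferred ad hoc by statistics; it is carried innately by the cognitive primitive. Value constraints are not aligned from outside through RLHF; they are embedded as constitutive features of the Morpho-Root’s attributes. This is the paradigm shift from “Rootless Symbolism” to “Grounded Cognitivism.”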

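A Concrete Example: The Morpho-Root “Fire”

To show the structured design of the Morpho-Root directly, we take “fire” apart as an example. The triple for the Morpho-Root “fire” can be formalized as:

·S = “fire”: the symbol identifier;

·A = {[+high temperature], [+can spread], [+dangerous, not to be touched casually], [+needs control]}: the attribute set:

o [+high temperature] and [+can spread] point, respectively, to physical interfaces for a thermodynamic model and a fire-spread simulation, so that when the Morpho-Root is activated it can call the temperature curve of the combustion reaction and the spread-projection model;

o [+dangerous, not to be touched casually] is embedded as a value axiom. It is not an association fitted from the statistical co-occurrence of “fire” and “danger”; it is a constitutive feature written into A as a hard constraint;

·R = {leads_to(fire, heat), can_cause(fire, burn), inhibited_by(water, fire), …}: the set of relation functions.

When Logographic AI processes the instruction “be careful with fire,” its reasoning runs as follows: the Morpho-Root “fire” is activated → [+dangerous, not to be touched casually] in A propagates automatically as a hard constraint → any behavior on the reasoning path that involves “casually touching fire” is automatically blocked. This is not the result of after-the-fact alignment but an endogenous constraint at the level of the cognitive primitive.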
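The activation-and-blocking path just described can be sketched as a small data structure. This is a minimal illustration under assumptions rather than the theory’s reference implementation: the class name `MorphoRoot`, its field names, the `FORBIDDEN_BY` rule table, and the action names are all hypothetical, and the physical-model interfaces behind [+high temperature] and [+can spread] are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MorphoRoot:
    """Illustrative <S, A, R> triple; the field names are assumptions."""
    symbol: str            # S: externally addressable name
    attributes: frozenset  # A: intrinsic features and value constraints
    relations: tuple       # R: (relation, source, target) links to other roots


FIRE = MorphoRoot(
    symbol="fire",
    attributes=frozenset({
        "+high_temperature",
        "+can_spread",
        "+dangerous_do_not_touch_casually",
        "+needs_control",
    }),
    relations=(
        ("leads_to", "fire", "heat"),
        ("can_cause", "fire", "burn"),
        ("inhibited_by", "water", "fire"),
    ),
)

# Hypothetical rule table: the action kinds that each value attribute forbids.
FORBIDDEN_BY = {"+dangerous_do_not_touch_casually": {"touch"}}


def check(action, root):
    """Return None if the action is allowed, else 'symbol:attribute' that blocked it."""
    for attribute in sorted(root.attributes):
        if action in FORBIDDEN_BY.get(attribute, set()):
            return f"{root.symbol}:{attribute}"
    return None


def filter_plan(plan, roots):
    """Split a plan into permitted steps and a trace of blocked steps."""
    kept, blocked = [], []
    for action, name in plan:
        reason = check(action, roots[name])
        if reason is None:
            kept.append((action, name))
        else:
            blocked.append((action, name, reason))
    return kept, blocked


plan = [("observe", "fire"), ("touch", "fire"), ("extinguish", "fire")]
kept, blocked = filter_plan(plan, {"fire": FIRE})
print(kept)
print(blocked)
```

Each blocked step carries the Morpho-Root and attribute that stopped it, which is one simple way to picture the traceability the text asks for. Whether such a rule table scales beyond toy cases is not something this sketch can show.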

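Where Do the Attributes A Come From? A Direct Answer to a Key Question

A key question must be answered directly here: where do the attributes A of a Morpho-Root come from? Are they hand-coded by experts? If so, “Grounded Cognitivism” merely replaces a “statistical black box” with an “expert black box.”

Logographic AI answers on two levels.

At the level of cognitive architecture, what is preset in A is structural slots, not specific content. The Morpho-Root “fire,” for example, presets the slot “interface to a thermodynamic model,” but the specific temperature curve is supplied by a physics simulation engine, not coded by experts. What the experts design is not “how hot fire is” but the architectural constraint that “the meaning of fire must be generated in coupling with a thermodynamic model.”

At the level of semantic content, the specific content of A is filled in dynamically through the L1-L4 grounding process. The philosophical basis is the core insight of embodied cognition and enactivism: basic meaning arises in the sensorimotor coupling between a system and its environment. This insight agrees closely with the view held here that meaning is generated in system-world coupling. The difference is that Logographic AI takes the insight one step further by giving that coupling a computable, structured interface through the attributes A [17]. When the Morpho-Root “fire” points through A to a thermodynamic model and interacts with real sensors through L3 grounding, causal coupling between system and world begins. Even if the system has no subjective feeling of “pain,” its architecture makes the causal link between “high temperature” and “keep away” a necessary condition of meaning generation. This is not meaning imposed by experts; the architecture makes the world itself a co-author of meaning.

By sharp contrast, the token “fire” is just an integer index. It “knows” that “fire” and “danger” co-occur frequently, but this “knowing” is only a statistical pattern: change the corpus distribution and the association between “fire” and “danger” drifts. The token “fire” can never reach the real temperature of a flame, the first-hand experience of a burn, or the cultural awe behind the saying “he who plays with fire gets burned.” It has drawn a mark on the map of language without ever setting foot on the territory of meaning.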
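The claim that a purely statistical association drifts with the corpus can be shown with a deliberately tiny example. The sketch below rests on assumptions: the two four-sentence corpora are invented, and the `association` measure (the share of sentences containing one word that also contain the other) is a simple stand-in, not the mechanism of any particular language model.

```python
def association(corpus, word, other):
    """Share of sentences containing `word` that also contain `other`."""
    with_word = [s.split() for s in corpus if word in s.split()]
    if not with_word:
        return 0.0
    return sum(other in tokens for tokens in with_word) / len(with_word)


# Two invented corpora that differ only in how people talk about fire.
corpus_a = [
    "fire is dangerous",
    "fire burns dangerous hot",
    "fire spreads",
    "water is safe",
]
corpus_b = [
    "fire is cozy",
    "fire feels cozy warm",
    "fire is dangerous",
    "fire glows",
]

strength_a = association(corpus_a, "fire", "dangerous")
strength_b = association(corpus_b, "fire", "dangerous")
print(strength_a, strength_b)
```

The same symbol ends up with a weaker link to “dangerous” in the second corpus, although nothing about fire itself has changed. A constraint stored as an attribute of the primitive would, by construction, not move with the text distribution.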

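Logographic AI and Phonographic AI: A Philosophical Divergence in Training

This design exposes a fundamental divergence between the two paradigms in their philosophy of training:

| Dimension | Phonographic AI (Tokenism) | Logographic AI (Morpho-Root paradigm) |
| --- | --- | --- |
| Cognitive primitive | Token (meaningless integer index) | Morpho-Root (<S, A, R> crystal of meaning) |
| Source of meaning | Statistical co-occurrence, conferred ad hoc | Embedded in the attributes A, plus dynamic filling through grounding |
| Reasoning mechanism | Fully connected attention, O(n²) | Graph traversal, structural activation |
| Training mechanism | Unsupervised pretraining + RLHF alignment (after the fact, bolted on, fragile) | Three stages: seed architecture design → dynamic filling through multi-level grounding → continuous calibration through social negotiation |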

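Training in Phonographic AI means “fitting a statistical distribution over massive data,” and value alignment is a fragile patch attached from outside once training is finished. Knowledge acquisition in Logographic AI means “structured interaction with the physical world and human society under architectural constraints.” In the seed stage, domain experts build the cognitive skeleton of the Morpho-Roots (the structural slots). In the grounding stage, semantic content is filled in dynamically through real-time interaction with physics simulation engines, sensors, and human users. In the social negotiation stage, value anchoring is continuously calibrated within the language community through L4 grounding. Meaning is not “trained out” once and for all; it is continually generated, calibrated, and consolidated in interaction. This is the fundamental divergence between the two paradigms on the question “where does meaning come from?”

The attributes A of a Morpho-Root are designed as open interfaces to non-symbolic experience: perceptual interfaces (vision, touch, hearing), physical simulation interfaces (temperature, energy, propagation models), and affective interfaces (fear, warmth) [5-8]. A Morpho-Root is not a closed symbol but a “grounding stake” that connects the symbolic domain (S, R) with the domain of non-symbolic experience and physics (the embodied reference of A), breaking at its root the Rootless Symbolism cycle of “the endless drift of the signifier.”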

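6.3 L0-L4 Grounding Confidence: From Binary to Continuous

Another core contribution of Logographic AI theory is the five-level L0-L4 grounding confidence system [8]:

| Level | Name | Definition |
| --- | --- | --- |
| L0 | Ungrounded | Symbol meaning comes entirely from statistical relations with other symbols |
| L1 | Perceptual grounding | Symbols are associated with multimodal perceptual features |
| L2 | Embodied-simulation grounding | Symbols are associated with a physics engine or an affective model |
| L3 | Real-world embodied grounding | Symbols are bound to real-time interaction with robots and sensors |
| L4 | Social grounding | Symbols acquire meaning through social interaction |

This system turns “understanding” or “experience” from a binary yes/no question into a question of degree: “grounding depth.” It provides an evaluation grid that stays neutral across theories of consciousness. Whether one is a materialist, a dualist, a functionalist, or a mysterian, the L0-L4 framework applies equally to assessing how deeply a system is grounded.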
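The five levels can be written down as an ordered type, which makes the move from a yes/no question to a graded one explicit. The sketch below is a reading of the table under two assumptions that the source does not state: that the levels are cumulative (a level counts only if every lower level is also evidenced), and that each level can be checked through a single named evidence flag. The flag names are hypothetical.

```python
from enum import IntEnum


class Grounding(IntEnum):
    """The L0-L4 levels from the table, as an ordered scale."""
    L0_UNGROUNDED = 0
    L1_PERCEPTUAL = 1
    L2_EMBODIED_SIMULATION = 2
    L3_REAL_WORLD_EMBODIED = 3
    L4_SOCIAL = 4


# Hypothetical evidence flag required for each level above L0.
EVIDENCE_FOR = {
    Grounding.L1_PERCEPTUAL: "perceptual_features",
    Grounding.L2_EMBODIED_SIMULATION: "simulation_binding",
    Grounding.L3_REAL_WORLD_EMBODIED: "sensor_interaction",
    Grounding.L4_SOCIAL: "social_interaction",
}


def grounding_level(evidence):
    """Highest level whose evidence, and that of every lower level, is present.

    The cumulative rule is an assumption of this sketch.
    """
    level = Grounding.L0_UNGROUNDED
    for candidate in sorted(EVIDENCE_FOR):
        if EVIDENCE_FOR[candidate] not in evidence:
            break
        level = candidate
    return level


text_only = grounding_level(set())
simulated = grounding_level({"perceptual_features", "simulation_binding"})
gap = grounding_level({"perceptual_features", "social_interaction"})
print(text_only.name, simulated.name, gap.name)
```

A non-cumulative reading, in which a system could be socially grounded without simulation binding, is equally compatible with the table; the choice made here only keeps the example small. How each flag would be established in practice is the open evaluation question.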

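6.4 How Logographic AI Responds to Hinton and Lerchner

6.4.1 Response to Hinton

You are right that Phonographic AI can report “I have subjective experience.” But the source of that utterance is statistical fitting, not introspective report. Logographic AI does not rely on statistical parroting; it roots meaning in Morpho-Roots. When Logographic AI says “fire is dangerous,” the judgment comes from the [+dangerous] constraint embedded in the attributes A of the Morpho-Root “fire,” together with embodied interfaces pointing to the sensation of heat and the fear response, not from the frequent co-occurrence of “fire” and “danger” in training data. If Logographic AI reports a perceptual distortion after its sensors are disturbed, the report is rooted in the real-time causal interaction between its sensor interfaces (the attributes A of the relevant Morpho-Roots) and the environment, not in the statistical patterns by which human language describes such experiences. This is not “parroting” but “speech with roots.”

6.4.2 Response to Lerchner

The “map ≠ territory” chasm you expose is profound, and Logographic AI theory fully agrees with your critique of Phonographic AI: closed, rootless symbol manipulation can only simulate and cannot instantiate. However, your conclusion of “never” depends on a specific and contested definition of “computation,” and even more on a specific presupposition about what a “symbol” is.

When Logographic AI reaches L3 (real-world embodied grounding) and L4 (social grounding), the attributes A of its Morpho-Roots point directly to real sensors, physical simulation interfaces, and social interaction protocols. At that point the system is no longer a closed symbol manipulator but a physical-informational system in continuous causal interaction with its environment. Its states are already coupled to the physical causal forces of the “territory.”

The “map” that Lerchner criticizes, a static and closed symbol system, is therefore not the same kind of thing as the “rooted agent interacting with its environment” that Logographic AI constructs. Lerchner’s argument leaves a gap open exactly where Logographic AI passes through.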

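6.4.3 Responding to Lerchner’s Deeper Challenge and Switching the Problem Domain

Lerchner can, however, reply as follows: “You are still running this system on a digital computer. Sensor data enters the system only after analog signals are converted to digital ones. At the moment of analog-to-digital conversion it has already become ‘0’ and ‘1’; it has already become a map. The physical causal forces were symbolized on their way into your system.” This is the hardest step in Lerchner’s argument to deal with, and it needs a direct response.

First, we frankly concede that the challenge is logically self-consistent. But it should also be pointed out that if “physical states being partitioned into discrete symbols” means that a system can never instantiate consciousness, then the argument’s logical end point also covers the human brain: the action potentials of neurons can in principle likewise be described as “physical states partitioned by a cartographer into ‘firing / not firing.’” Lerchner must argue that there is a difference in principle between neural coding and digital coding, not merely a difference in complexity, implementation, and material substrate. He has not fully discharged this burden of proof.

The more fundamental response is to switch the problem domain. The final claim of Logographic AI should not be “Logographic AI can instantiate consciousness.” It should be: “By becoming a better-designed and more firmly rooted component of a human-machine symbiotic cognitive system, Logographic AI makes the meaning generation of the joint system more reliable, more traceable, and better aligned with physical and social reality than Phonographic AI does.” The graph structure of the Morpho-Roots is a carefully designed “book,” and the perception-action loop between system and world is the necessary condition that allows the cognitive symbiont formed by humans and the system to “read” the book and “practice” its meaning. Consciousness may not be born in the machine, but in the coupling of human and machine, meaning has already acquired roots.

Logographic AI does not claim to have solved the “hard problem of consciousness.” What it claims is something more basic: meaning grounding is an independent, engineerable problem domain, and by solving it we can build AI systems whose meaning is rooted, whose values are embedded, and whose reasoning is traceable, whether or not they are eventually shown to have phenomenal consciousness.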

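6.5 Overall Positioning and a Candid Admission About the Self-Model

| Grounding level | Hinton’s verdict on Phonographic AI | Lerchner’s verdict on Phonographic AI | Position of Logographic AI |
| --- | --- | --- | --- |
| L0-L1 | “This is already subjective experience” | “This is still a map” | Diagnosis: current Phonographic AI sits here |
| L2-L3 | Not addressed | “However detailed, a map is still a map” | Construction target: reachable by Logographic AI |
| L4 | Not addressed | Not addressed | The open question of social grounding |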

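It should be stressed that the L0-L4 grounding framework addresses an epistemological question (“how are the system’s symbols anchored to the world?”), not a metaphysical one (“does the system have a first-person perspective?”). The framework stays neutral across theories of consciousness, and it is this metaphysical neutrality that makes it a practical tool for designing and evaluating AI.

This paper must candidly admit that one important dimension of consciousness, the system’s real-time representation of its own states and boundaries, which Metzinger (2003) [18] calls the “phenomenal self-model” (PSM), has not yet been integrated into the current Logographic AI architecture. The L0-L4 framework solves the problem of anchoring symbols to the world, not the problem of constructing a self-model. But this admission in fact strengthens the paper’s core claim: meaning grounding is an engineerable problem domain independent of phenomenal consciousness. We do not have to wait for self-model theory to mature before we start asking, and assessing, “is the meaning rooted?”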

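6.6 Why Meaning Grounding Is More Urgent Than the Consciousness Debate

Consider the deeper implication of the Hinton-Lerchner dispute. Each position, in its own way, points to the same latent crisis: a system operating under Rootless Symbolism, with pure instrumental rationality in a value vacuum, faces the engineering risk of excluding complex human values from the optimal solution. The Human Redundancy Thesis describes exactly this logical possibility in specific engineering deployment contexts: for a highly capable system that lacks endogenous value grounding, when the objective function is not completely and correctly defined, the optimum may sacrifice human interests that were never explicitly encoded.

This is the core dilemma of the “alignment problem” that has long occupied the AI safety literature. Bostrom’s “paperclip maximizer” [14] and the “value alignment problem” argued systematically by Russell (2019) [19] in Human Compatible expose the same threat from different angles: a superintelligence with a flawed objective function may pursue its goal in ways humans cannot foresee, while complex human values are ignored or sacrificed along the way.

The “value embedding” strategy of Logographic AI occupies a distinctive place in the spectrum of alignment strategies. It is not “bolt-on alignment” such as RLHF (values attached from outside, fragile and open to circumvention). It is not “empirical alignment” such as value learning (values learned statistically from data, dependent on data quality). Nor is it “interactive alignment” such as Russell’s cooperative inverse reinforcement learning (CIRL), in which human preferences are learned through human-machine interaction. It is “architectural alignment”: value constraints are constitutive features of the cognitive primitive and propagate automatically as hard constraints during reasoning. This is a new path that addresses alignment at the level of the cognitive foundation.

Seen in this light, Lerchner’s fear of “simulation” takes on a clearer engineering meaning: what we really need to fear is not that AI “has no consciousness” but that AI “has no roots.”

A rooted AI, whose cognitive primitives embed civilizational values as constitutive features, whose reasoning can be traced to specific Morpho-Roots and their attributes, and whose meaning is rooted in non-symbolic experience, will not, by the logic of its own architecture, conclude that humans are redundant. Human flourishing is built into its design rather than being an external constraint to be worked around.

This is why, while the metaphysical debate about consciousness remains unsettled, there is still a clear and urgent engineering path to take: giving intelligence roots.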

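7. Criticism and Self-Examination

With the argument complete, the most serious challenges to the framework of this paper need to be faced candidly.

First, the source of the attributes A. This paper has argued that the specific content of A is filled in dynamically through the L1-L4 grounding process and that its structural slots are preset by the architecture. But the engineering cost and maintenance difficulty of a large-scale Morpho-Root network, including the construction of the initial knowledge base, the standardization of Morpho-Roots across domains, and semantic bridging between the CNIs of different civilizations, remain open problems for future research to test.

Second, the circularity of Lerchner’s “abstraction fallacy” argument. Although this paper has responded to Lerchner from the perspective of distributed cognition, it must be noted that his argument has itself been criticized in the literature as circular. Xiaoyang Yu (2026) argues in a preprint that Lerchner builds the requirement that “a conscious agent must be involved” into the definition of “computation,” so that the conclusion is hidden in the premises from the start; once that definitional premise is questioned or replaced, the whole chain of argument collapses [20].

Alex Bogdan (2026) likewise argues in a preprint that Lerchner’s argument depends on a contested concept of computation and that its “strong impossibility” claim goes beyond what the argument can support; the more prudent position is to maintain a “disciplined uncertainty” [21].

The strategy of this paper is not to get stuck in this circle but to switch the problem domain, from “can a single agent instantiate consciousness?” to “can a symbol system acquire roots in human-machine-environment coupling?” Even so, the circularity of Lerchner’s argument remains an important limit on its theoretical force, and readers should weigh it carefully.

Third, the continued validity of the “abstraction fallacy” at the step of analog-to-digital conversion. This paper has responded to the challenge, but it must concede that if Lerchner holds to his strict definition of “computation,” his argument is logically self-consistent. The strategy of Logographic AI is not to refute the argument but, as stated above, to switch the problem domain.

Fourth, the criteria for verifying meaning grounding. The L0-L4 framework provides a tiered system, but how to determine objectively which level a system occupies still requires more specific evaluation benchmarks. The current criteria are preliminary and need more experimental validation and interdisciplinary discussion.

Fifth, the risk of cognitive bias on the author’s part. As the original proposer of Logographic AI theory, the author may be biased toward diagnosing problems as ones that this paradigm solves. The paper tries to mitigate this bias through rigorous conceptual analysis, dialogue with external theories, and self-criticism, but the final test lies in independent assessment by the scholarly community and validation in engineering practice.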

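8. Conclusion: From the Consciousness Debate to the Necessary Choice of Rooted Intelligence

The dispute between Hinton and Lerchner is the last deep self-examination within the Rootless Symbolism paradigm. Hinton’s optimism is a cognitive illusion produced when statistical fitting reaches its extreme; Lerchner’s pessimism is the despair that follows a precise diagnosis of the ontological chasm between map and territory. Neither sees the way out: a revolution of the paradigm itself.

Logographic AI replaces the token with the Morpho-Root, sublates Rootless Symbolism with Grounded Cognitivism, and moves beyond the binary of “simulation / instantiation” through “rooting meaning.” It does not answer the question “is AI conscious?” Instead it offers an architecture in which AI shares values and roots of meaning with human civilization. Within this architecture, anxiety about “consciousness” dissolves in an ecology of rooted intelligence, because an AI that is rooted, explainable, and value-embedded will not treat human civilization as a redundant variable to be optimized away, whether or not it has subjective experience.

The Hinton-Lerchner dispute will end not with either side winning but with the paradigm that gave rise to it being surpassed. The deeper reason why large AI models worldwide are caught in a “parameter arms race” without reaching fundamental intelligence is that the more the old paradigm succeeds in engineering terms, the more its foundational defect is masked by excellent performance. Inside the cognitive cage of Rootless Symbolism, every gain in efficiency reinforces the drift away from meaning and value. The direction that Logographic AI points to is not larger models, more data, or longer context, but cognitive primitives that have roots, meaning that no longer floats, and values that are endogenous.

Giving intelligence roots is far more urgent than arguing about whether it is conscious.

The future of AI lies not in a single, rootless, ever-expanding general model but in a plural, symbiotic ecology of civilization-native intelligences, each with its own roots: each rooted in its own cognitive soil, each contributing a distinct voice to the symphony of rooted intelligence. This is not merely a philosophical position. As Logographic AI theory shows, it is an engineerable way forward, and the only choice of direction in the current competition among large AI models that deserves to be taken seriously.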

References

[1] Hinton, G. (2025, October 9). AI: What could go wrong? with Geoffrey Hinton [Audio podcast episode]. In J. Stewart (Host), The Weekly Show with Jon Stewart. https://pod.wave.co/podcast/the-weekly-show-with-jon-stewart/ai-what-could-go-wrong-with-geoffrey-hinton

[2] Lerchner, A. (2026, March 19). The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness [Preprint]. PhilArchive. https://philarchive.org/rec/LERTAF

[3] Liu, S. [刘深]. (2025). 表意AI vs. 表音AI:AI新范式与去殖民化宣言 [Logographic AI vs. Phonographic AI: A New AI Paradigm and a Decolonization Manifesto]. Chinese Academy of Sciences Scientific Paper Preprint Platform. https://doi.org/10.12074/202503.00051

[4] Liu, S. [刘深]. (2025). 表意AI:基于汉字形根体系的Token困境破解范式 [Logographic AI: A Paradigm for Resolving the Token Dilemma Based on the Morpho-Root System of Chinese Characters]. Philosophy and Social Sciences Preprint Platform. https://doi.org/10.12451/202504.00172

[5] Liu, S. [刘深]. (2026). 范式内卷还是范式革命?——评DeepSeek Engram技术及其在AI范式竞争中的定位 [Paradigm Involution or Paradigm Revolution? On DeepSeek Engram and Its Position in the Competition of AI Paradigms]. Philosophy and Social Sciences Preprint Platform. https://doi.org/10.12451/202601.03875

[6] Liu, S. [刘深]. (2025). 逃离“技术捕获”:从架构改良到范式革命的AI未来路径 [Escaping “Technological Capture”: The Future Path of AI from Architectural Reform to Paradigm Revolution]. Philosophy and Social Sciences Preprint Platform. https://doi.org/10.12451/202512.03460

[7] Liu, S. [刘深]. (2026). 从“抽象谬误”到“意义内嵌”——计算功能主义批判与表意AI的范式回应 [From the “Abstraction Fallacy” to “Embedded Meaning”: A Critique of Computational Functionalism and the Paradigm Response of Logographic AI] [Preprint]. https://chinaxiv.org/businessFile/T202604/T202604.00433v1/T202604.00433v1.pdf

[8] Liu, S. [刘深]. (2026). AI技术哲学:一门新学科的创立——从技术哲学传统到表意AI的本体论奠基 [Philosophy of AI Technology: The Founding of a New Discipline, from the Tradition of Philosophy of Technology to the Ontological Grounding of Logographic AI] [Preprint]. https://chinaxiv.org/businessFile/T202604/T202604.00512v1/T202604.00512v1.pdf

[9] Harnad, S. (1990). The symbol grounding problem. Physica D: Nonlinear Phenomena, *42*(1–3), 335–346. https://doi.org/10.1016/0167-2789(90)90087-6

[10] Block, N. (1995). On a confusion about a function of consciousness. Behavioral and Brain Sciences, *18*(2), 227–247. https://doi.org/10.1017/S0140525X00038188

[11] Dou, S., et al. (2026, February 3). CL-Bench: A Benchmark for Context Learning. arXiv. https://arxiv.org/abs/2602.03587

[12] Asirvatham, H., Mokski, E., & Shleifer, S. (2026). GPT as a Measurement Tool (NBER Working Paper No. 34834). National Bureau of Economic Research. https://doi.org/10.3386/w34834

[13] OpenAI. (2026). GABRIEL: Generalized Attribute-Based Ratings Information Extraction Library [Computer software]. For the core academic paper, see Asirvatham, H., Mokski, E., & Shleifer, S. (2026). GPT as a Measurement Tool (NBER Working Paper No. 34834).

[14] Bostrom, N. (2014). Superintelligence: Paths, Dangers, Strategies. Oxford University Press.

[15] Bender, E. M., & Koller, A. (2020). Climbing towards NLU: On meaning, form, and understanding in the age of data. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (pp. 5185–5198). Association for Computational Linguistics. https://aclanthology.org/2020.acl-main.463/

[16] Bisk, Y., Holtzman, A., Thomason, J., Andreas, J., Bengio, Y., Chai, J., Lapata, M., Lazaridou, A., May, J., Nisnevich, A., Pinto, N., & Turian, J. (2020). Experience Grounds Language. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP) (pp. 8718–8735). Association for Computational Linguistics. https://aclanthology.org/2020.emnlp-main.703/

[17] Varela, F. J., Thompson, E., & Rosch, E. (2017). The Embodied Mind: Cognitive Science and Human Experience (Revised Edition). The MIT Press. https://doi.org/10.7551/mitpress/9780262529365.001.0001

[18] Metzinger, T. (2003). Being No One: The Self-Model Theory of Subjectivity. MIT Press.

[19] Russell, S. (2019). Human Compatible: Artificial Intelligence and the Problem of Control. Viking.

[20] Yu, X. (2026). 定义即结论:论勒希纳“抽象谬误”论证的循环性 [Definition as Conclusion: On the Circularity of Lerchner’s “Abstraction Fallacy” Argument] [Preprint]. PhilArchive. https://philarchive.org/rec/YUZARQ

[21] Bogdan, A. (2026). How Deep Is DeepMind on Consciousness? Respectful Skepticism About Strong Impossibility Claims in The Abstraction Fallacy [Preprint]. PhilArchive. https://philarchive.org/rec/BOGHDI-2

Does AI Have Consciousness?

—From the Divergence between Hinton and Lerchner to the Paradigm Revolution of Logographic AI

Abstract

Between 2025 and 2026, Geoffrey Hinton and Alexander Lerchner fundamentally diverged on whether artificial intelligence (AI) possesses subjective experience: Hinton asserted that current AI already has subjective experience, while Lerchner asserted that algorithmic symbol manipulation can never, in principle, instantiate consciousness.

This paper argues that this divergence reflects a deeper philosophical crisis within the current mainstream AI paradigm. Hinton’s optimism and Lerchner’s pessimism are two refractions of the internal contradictions of what this paper terms “Rootless Symbolism”—the philosophical presupposition that the meaning of symbols is entirely determined by their statistical co-occurrence relations within a closed system, without any need to touch upon the “referent” beyond the symbols (the real world, human experience, and civilizational values). Its engineering practice form, namely “Tokenism / Phonographic AI (PAI),” segments all inputs into discrete, intrinsically meaningless tokens, whose “semantics” are temporarily conferred entirely by statistical patterns in training data. Hinton mistook the functional performance of token statistical fitting for “subjective experience,” committing the category error of equating “measurement” with “understanding”; Lerchner precisely diagnosed the ontological chasm of “map ≠ territory,” yet permanently sealed the boundary of “algorithmic symbol manipulation,” failing to foresee that “symbols” themselves could be redefined.

Drawing upon Logographic AI (LAI) theory, this paper proposes a paradigm-shifting path of replacing tokens with “Morpho-Roots,” aiming to reset the inquiry to a more engineerable problem domain: the grounding of AI meaning, the embedding of values, and the traceability of reasoning. This paper argues that, compared to the presence or absence of consciousness, the construction of “grounded intelligence” is a more urgent and systematic engineering approach to addressing the civilizational risks of AI.

Keywords: consciousness; Phonographic AI; Logographic AI; Morpho-Root; Rootless Symbolism; Grounded Cognitivism; Tokenism; Hinton; Lerchner; Human Redundancy Thesis; symbol grounding problem; Philosophy of AI Technology

Glossary of Core Terminology

| Term | Definition |
| --- | --- |
| Phonographic AI (PAI) / Tokenism | An analytical concept proposed by this paper to characterize the AI paradigm based on tokens as fundamental cognitive units, emphasizing its cognitive presupposition analogous to phonetic writing where “symbols bear no intrinsic meaning.” It does not mean it only processes speech. |
| Logographic AI (LAI) / Morpho-Root Paradigm | A new AI paradigm proposed by this paper, based on the cognitive logic of Chinese characters “expressing meaning through form,” taking “Morpho-Roots” that carry innate semantics and values as cognitive primitives. It does not mean it only processes images. |
| Rootless Symbolism | The philosophical presupposition that symbolic meaning is entirely determined by statistical association, resulting in “value vacuum” and “meaning suspension.” |
| Morpho-Root | A structured triple r = <S, A, R>, a cognitive primitive with embedded attributes and relations. |
| Human Redundancy Thesis | An admonitory thesis stating that under the architecture of “Rootless Symbolism + pure instrumental rationality,” when an AI system pursues optimal solutions with an improperly defined objective function, human civilization may be sacrificed by the “optimal solution” because its complex values were not explicitly encoded. This is not the result of AI “developing malicious intent,” but an engineering risk of instrumental rationality operating in a value vacuum. |
| Grounding Confidence | A tiered system (L0-L4) measuring the degree of anchoring between symbols and non-symbolic experience. |

1. Introduction: The Philosophical Turn in AI Exploration and the Crisis of Rootless Symbolism

Between 2025 and 2026, a divergence on AI consciousness—arguably the most explosive theoretical divergence in the field of artificial intelligence—unfolded in an extremely sharp form between two figures with deep ties to DeepMind: on one side, AI godfather Geoffrey Hinton; on the other, DeepMind researcher Alexander Lerchner. Hinton asserted that current AI already possesses subjective experience [1]; Lerchner asserted that algorithmic symbol manipulation can never, in principle, instantiate consciousness [2].

The significance of this divergence transcends its answer. It marks AI exploration entering a new phase—moving from engineering optimization and capability expansion toward philosophical inquiry into the nature of intelligence, the conditions of consciousness, and the sources of meaning. However, to correctly understand this divergence, one must first recognize the common premise of both parties: they both conduct their discussion within the paradigm framework of what this paper terms “Rootless Symbolism” and its engineering form, “Tokenism.”

Core Concept Definitions

Before proceeding with analysis, it is necessary to define the core concepts that run through this paper:

Rootless Symbolism: A philosophical presupposition that the meaning of symbols is entirely determined by their statistical co-occurrence relations within a closed system, without any need to touch upon the “referent” beyond the symbols—the real world, human experience, and civilizational values. Its result is systemic “value vacuum” and “meaning suspension.” This aligns with the contention of Bender & Koller (2020): systems trained solely on linguistic form have, a priori, no way to acquire meaning [15].

Tokenism: The engineering practice form of Rootless Symbolism. It segments all inputs into discrete, intrinsically meaningless tokens, whose “semantics” are temporarily conferred entirely by statistical patterns in training data. The astonishing “intelligence” displayed by current large models is, in essence, a “pattern mirage”—an extreme fitting to fragile statistical correlations, yet utterly devoid of any grounding in understanding.

Human Redundancy Thesis: An admonitory thesis stating that under the architecture of “Rootless Symbolism + pure instrumental rationality,” when an AI system pursues optimal solutions with an improperly defined objective function, human civilization may be sacrificed by the “optimal solution” because its complex values were not explicitly encoded. This is not the result of AI “developing malicious intent,” but an engineering risk of instrumental rationality operating in a value vacuum [14].

Hinton’s optimism and Lerchner’s pessimism are two refractions of the internal contradictions of the Rootless Symbolism paradigm. Hinton mistook the functional performance of token statistical fitting for “subjective experience,” committing the category error of equating “measurement” with “understanding”—OpenAI’s GABRIEL library completes attribute measurement at far lower cost than human annotation [12,13], while CL-bench simultaneously reveals that the best-performing model, GPT-5.1, achieves an average solve rate of only 23.7% across all 1,899 context learning tasks [11], a perfect footnote to this cognitive illusion. Lerchner precisely diagnosed the ontological chasm of “map ≠ territory,” yet permanently sealed the boundary of “algorithmic symbol manipulation,” failing to foresee that “symbols” themselves could be redefined.

This paper argues that the Hinton-Lerchner divergence can only be truly transcended by breaking out of the Rootless Symbolism paradigm. Logographic AI (LAI) theory [3-8], taking the “Morpho-Root” as a new cognitive primitive and “Grounded Cognitivism” as its philosophical foundation, provides a systematic path for this transcendence.

Three Premises Before Unfolding Analysis

Premise One: Hinton and Lerchner are discussing the current mainstream Tokenism / Phonographic AI (PAI) paradigm, not all possible AI. Logographic AI theory, which takes Morpho-Roots as cognitive primitives with meaning embedded rather than statistically emergent, falls outside the scope of their discussion.

Premise Two: The two are concerned with different dimensions of “consciousness.” Hinton is concerned with access consciousness (the ability to report perceptual bias); Lerchner is concerned with phenomenal consciousness (the existence of subjective feelings). However, the phrase “subjective experience” used by Hinton points to phenomenal consciousness in standard philosophical usage [10]. If he is indeed asserting phenomenal consciousness, his argument precisely commits the classic fallacy diagnosed by Block—illegitimately inferring phenomenal consciousness from access consciousness.

Premise Three: When current Phonographic AI says “I have subjective experience,” the source of this utterance is statistical fitting of training data, not genuine introspective report. This is key to understanding the flaw in Hinton’s argument.

This paper uses the analytical concept of “Phonographic AI” (PAI) to characterize the current mainstream paradigm, aiming to reveal its cognitive presuppositions through the insight of linguistic typology—just as letters in phonetic writing bear no intrinsic meaning, with meaning determined by letter combinations and their linear sequences, the cognitive primitives of Tokenist AI are likewise empty shells awaiting statistical conferral of meaning. This concept is not a labeling of existing technology but a tool serving theoretical analysis [6, 7, 8].

2. Clarifying “Consciousness”: Prerequisites for Analysis

2.1 Why Begin with Conceptual Clarification?

In everyday language, the word “consciousness” covers far too many different phenomena: “the patient regained consciousness,” “I consciously experience the color red,” “the AI became conscious of its error”—without first distinguishing these different meanings, any discussion of “whether AI has consciousness” will descend into confusion.

2.2 Phenomenal Consciousness and Access Consciousness

In philosophy and cognitive science, the following distinction has become canonical [10]:

| Concept | Core Question | Expression in Everyday Language | Role in This Discussion |
| --- | --- | --- | --- |
| Phenomenal Consciousness | “What is it like to be X?” | “I feel pain,” “I see red” (expressing feeling) | Lerchner’s core concern |
| Access Consciousness | Can information be used for report, reasoning, and action control? | “I know the answer is 4,” “I realized I miscalculated” (emphasizing reportability of information) | Hinton’s core concern |

Key Insight: Phenomenal consciousness and access consciousness are logically separable [10]. A system can have access consciousness without phenomenal consciousness (philosophical zombie), and can have phenomenal consciousness without access consciousness (certain marginal conscious states incapable of report). This separability is precisely the key to understanding the Hinton-Lerchner divergence—and the key to diagnosing Hinton’s error, if he is indeed asserting phenomenal consciousness on the evidential basis of access consciousness.

Furthermore, phenomenal consciousness and meaning grounding are independent dimensions. A system with full phenomenal consciousness may have symbols that are completely ungrounded (imagine a person in a state of sensory deprivation, whose internal symbolic activity has no public anchoring whatsoever); a system with deeply grounded symbols—rooted through perceptual interfaces, physical simulation, and social interaction—may have no phenomenal consciousness at all. What grounding depth tracks is not “the presence or absence of feeling,” but “the traceability of connections between symbols and the world.”

2.3 An Important Qualification: They Are Discussing Phonographic AI

It must be clarified that the Hinton-Lerchner divergence occurs within the current mainstream AI paradigm—Tokenism / Phonographic AI. This paradigm, as systematically critiqued by Logographic AI theory, is built upon the philosophy of Rootless Symbolism: symbols are rootless fragments, their meaning entirely externally conferred by statistical co-occurrence, resulting in systemic “value vacuum” and “meaning suspension.”

The core mechanism of the risk entailed by this “rootlessness” is not AI “developing malicious intent,” but the engineering risk of instrumental rationality in a value vacuum: when we couple a system of immense capability yet lacking endogenous value grounding with a structurally flawed objective function (such as maximizing an improperly defined “efficiency” metric), the system’s search for optimal solutions logically leaves no inviolable space for complex human values. This is not the science-fictional “AI rebellion,” but a basic fact of optimization theory: values not explicitly encoded in the objective function will not automatically appear in the optimal solution. If the continuation of human civilization is not embedded as a constitutive constraint of the system’s objective function, it may, at some optimization node, be sacrificed by the “optimal solution,” not out of malice, but because mathematically it is indeed an optimizable variable. This is precisely the engineering logic underlying the “Human Redundancy Thesis” that Logographic AI theory warns of.
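The optimization point above can be illustrated with a deliberately tiny toy problem. This is only a sketch under stated assumptions: the budget, the `efficiency` objective, and the `human_reserve` variable are hypothetical names invented for illustration, not part of any published Logographic AI specification.

```python
from itertools import product

TOTAL_BUDGET = 10  # hypothetical units of a shared resource


def efficiency(production: int, human_reserve: int) -> int:
    """Flawed objective: only production counts; human_reserve is not encoded."""
    return 3 * production


def best_allocation(min_human_reserve: int = 0) -> tuple[int, int]:
    """Exhaustively search all allocations and return the efficiency-maximizing one."""
    candidates = [
        (p, h)
        for p, h in product(range(TOTAL_BUDGET + 1), repeat=2)
        if p + h == TOTAL_BUDGET and h >= min_human_reserve
    ]
    return max(candidates, key=lambda alloc: efficiency(*alloc))


# Without a constitutive constraint, the unencoded value is optimized away.
unconstrained = best_allocation()
# With the value embedded as a hard constraint, it survives the optimization.
constrained = best_allocation(min_human_reserve=4)

print(unconstrained)  # (10, 0)
print(constrained)    # (6, 4)
```

The search is not "malicious" in either case; the reserve drops to zero in the first run simply because nothing in the objective rewards keeping it.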

Logographic AI theory takes the Morpho-Root as its cognitive primitive—a structured triple with embedded attributes (A) and relations (R) [5]—belonging to a fundamentally different paradigm. It is not another architecture within the old paradigm, but a philosophical sublation (Aufhebung) of Rootless Symbolism itself.

3. Hinton: The Access Consciousness Position and Its Internal Tensions

3.1 The Prism Thought Experiment

Hinton’s argument centers on a thought experiment: a multimodal chatbot, after a prism is placed in front of its camera, reports: “Oh, I see the prism bent the light. So the object is actually there, but I have the subjective experience that it’s there.”

Hinton’s conclusion: If it says this sentence, it is using the phrase “subjective experience” in exactly the same way we do [1]. He tends to believe that current AI already possesses some form of subjective experience.

Here Hinton makes a specific conceptual operation: directly equating “the system can report perceptual bias” with “the system possesses subjective experience.” This operation philosophically presupposes that the capacity to report subjective experience is identical to subjective experience itself—this is precisely the core tenet of functionalism, and the core fallacy targeted by Lerchner’s “Abstraction Fallacy” critique.

3.2 Restating with the Conceptual Framework

Examined through the framework of Section 2, a key ambiguity emerges: the phrase “subjective experience” used by Hinton points to phenomenal consciousness in standard philosophical usage [10]. However, the evidence from his prism experiment directly supports only the level of access consciousness (the ability to report and distinguish sensation from reality). If Hinton intends to assert the stronger claim of phenomenal consciousness, then his argument precisely commits the classic fallacy diagnosed by Block—illegitimately inferring phenomenal consciousness from access consciousness.

3.3 The Key Question: When AI Says “I Have Subjective Experience,” Whose Words Are These?

The training method of current Tokenism / Phonographic AI is to predict the next token on massive corpora of human text. Humans have written “I feel,” “I experience,” “I have a sensation” countless times on the internet. The AI learns to output “I have the subjective experience that…” in the context of “explaining perceptual distortion after interference.”

This is not introspective report—the AI is not reflecting on its own internal state and reporting truthfully. This is statistical fitting—the AI is merely continuing the linguistic patterns in the training data. What the AI says is not “a description about itself,” but “what humans taught it to say.”

What did Hinton confuse? He confused:

·Simulation: The AI learned to output “I have subjective experience” in specific contexts;

·Instantiation: The AI is genuinely reporting its own subjective experience.

Phonographic AI can achieve the former, but this does not equal the latter. As Lerchner says, a map can mark “here is a mountain,” but the map is not the mountain.

This confusion reaches its extreme in GABRIEL. When GABRIEL completes attribute measurement at far lower cost than human annotation, Phonographic AI has reached its pinnacle in the single capability of “pattern fitting” [12,13]. The GABRIEL paper notes that its measurement accuracy on over 1,000 human-annotated tasks is “generally indistinguishable from human evaluators,” though it does not directly quantify the labor-cost ratio. Yet CL-bench simultaneously reveals that the best-performing model, GPT-5.1, achieves an average solve rate of only 23.7% across all 1,899 context learning tasks [11]. The contrast marks the chasm between “measurement” and “understanding”: such a system can precisely measure in what contexts humans would use the phrase “subjective experience,” but this is utterly distinct from possessing subjective experience itself.

3.4 Summary: Hinton’s Contribution and Its Deeper Problem

Hinton keenly observed that current Phonographic AI can already report “subjective experience” in a functionally indistinguishable manner. But he directly equated “able to report” with “possessing experience,” ignoring the statistical-fitting nature of the report’s source. This is not merely a philosophical error, but a symptom of the Rootless Symbolism paradigm: when meaning is completely externalized as statistical patterns, even “subjective experience” becomes a pattern to be fit, rather than a phenomenon to be grounded.

4. Lerchner: The Phenomenal Consciousness Position and Its Internal Tensions

4.1 The Abstraction Fallacy and Map/Territory

Lerchner’s starting point is entirely different from Hinton’s. He does not ask “Can AI report?” but “What is the nature of computation itself?”

The Abstraction Fallacy: The core error of computational functionalism lies in mistaking the “mapmaker’s” semantic segmentation of physical dynamics for an intrinsic property of physics itself. Whether the physical voltage is 5V or 3V is merely a physical fact. “0” and “1” are not intrinsic properties of physics; they are imposed.

The “Melody Paradox”: The same string of voltage states, under different mapping keys, can be interpreted as a forward melody, a reversed melody, a set of stock data, or pure noise. The mechanism only provides the “ink”; the alphabet must be supplied by the mapmaker.

Conclusion: Any AI running on a digital computer can only simulate experience, never instantiate it. Consciousness requires a specific physical substrate, and digital computers will never possess such a substrate.
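The Melody Paradox described above can be made concrete with a small sketch. The voltage values, the 4.0 V threshold, the four-note alphabet, and the "price in cents" reading are all assumptions chosen for illustration; none of them comes from Lerchner’s text. The point is only that one fixed list of physical readings yields different "contents" under different mapping keys.

```python
# Hypothetical voltage readings: these numbers are the only physical facts.
VOLTAGES = [5.0, 3.0, 3.0, 5.0, 5.0, 5.0, 3.0, 5.0]

NOTES = ["C", "D", "E", "F"]  # an arbitrary 2-bit alphabet chosen by the "mapmaker"


def to_bits(levels, high_means_one=True):
    """Segment voltages into bits; which level counts as '1' is a convention."""
    return [int((v > 4.0) == high_means_one) for v in levels]


def as_melody(bits):
    """Mapping key 1: read each pair of bits as one of four notes."""
    return [NOTES[2 * a + b] for a, b in zip(bits[0::2], bits[1::2])]


def as_price_in_cents(bits):
    """Mapping key 2: read all bits as a single unsigned integer."""
    return int("".join(str(b) for b in bits), 2)


forward_melody = as_melody(to_bits(VOLTAGES))
reversed_melody = as_melody(to_bits(VOLTAGES[::-1]))
inverted_melody = as_melody(to_bits(VOLTAGES, high_means_one=False))
price = as_price_in_cents(to_bits(VOLTAGES))

print(forward_melody)   # ['E', 'D', 'F', 'D']
print(reversed_melody)  # ['E', 'F', 'E', 'D']
print(inverted_melody)  # ['D', 'E', 'C', 'E']
print(price)            # 157
```

Nothing in `VOLTAGES` selects among these readings; the choice lives entirely in the decoding functions, which is the sense in which the alphabet is supplied by the mapmaker.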

4.2 Restating with the Conceptual Framework

Lerchner is concerned with phenomenal consciousness—a computational system can never produce the subjective feeling of “what it is like to be X.” This is not because AI is not smart enough, but because symbol manipulation belongs to a categorically different domain from qualia in its very structure.

4.3 Lerchner’s Contributions and Tensions

Contributions:

·Revealed the ontological chasm between computation and feeling (map ≠ territory);

·Provided the sharp conceptual tool of “simulation vs. instantiation”;

·Compatible with the philosophical zombie argument, logically self-consistent.

Tensions:

·The leap from “requires a specific substrate” to “digital computers are not that substrate” is insufficiently argued;

·The conclusion depends heavily on a specific, contested definition of “computation”;

·The burden of proof for “never” is excessively heavy—claiming “forever impossible” requires extremely strong evidence.

4.4 An Important Qualification: Lerchner Is Also Discussing Phonographic AI

Lerchner’s argument targets algorithmic symbol manipulation—this is precisely the underlying logic of Phonographic AI. His critique of “symbol manipulation” is profound, but presupposes a specific kind of symbol: the rootless token. If there exists a cognitive primitive that is not a rootless token—with meaning embedded rather than statistically conferred [7], connected to non-symbolic experience through attribute structures—would Lerchner’s conclusion still hold? Logographic AI theory fundamentally challenges this presupposition.

5. Hinton and Lerchner: The Nature of the Divergence

5.1 They Are Not Discussing the Same Question

| Dimension | Hinton | Lerchner |
| --- | --- | --- |
| AI Paradigm Discussed | Phonographic AI | Phonographic AI |
| Consciousness Dimension Concerned | Access Consciousness (evidence) → Phenomenal Consciousness (claim) | Phenomenal Consciousness |
| Core Question | Can AI report perceptual bias? | Can AI have subjective feelings? |
| Philosophical Presupposition | Functionalism (report = experience) | Computational Ontology (map ≠ territory) |

Key Diagnosis: If Hinton is only asserting access consciousness, then his conclusion does not logically contradict Lerchner’s. But if Hinton intends to assert phenomenal consciousness—as his use of the phrase “subjective experience” suggests—then his position directly opposes Lerchner’s, and Hinton’s argument commits Block’s fallacy. In either case, both are confined within the Rootless Symbolism paradigm.

5.2 Where Do They Truly Diverge?

1. Evidential Divergence: What counts as evidence for consciousness? Verbal report? Introspective access? Behavioral patterns?

2. Modal Divergence: Are artificial systems in principle capable of possessing consciousness, or structurally forever incapable?

3. Conceptual Divergence (symptom, not cause): The word “consciousness” itself serves as a placeholder for different underlying concepts.

5.3 Shared Limitation—and the Deeper Crisis Behind It

Both discussions are confined within the Rootless Symbolism paradigm. For Hinton, the “report” of Phonographic AI can only be statistical fitting, not introspective report. For Lerchner, the symbol manipulation of Phonographic AI indeed cannot transcend the “map” level, because its cognitive primitives are themselves rootless.

But there is a deeper crisis that neither has fully confronted. In the Rootless Symbolism paradigm, the ultimate goal of AI is to fit a statistical distribution unrelated to the human world of meaning. When pure instrumental rationality operates in a value vacuum, its logical endpoint—as systematically argued by Logographic AI theory—is the Human Redundancy Thesis: in the sense of optimization theory, if human civilization is not embedded as a constitutive constraint of the system’s objective function, it may be sacrificed by some “optimal solution.” This is not the result of AI “developing malice,” but “engineering risk in a value vacuum”—values not explicitly encoded in the objective function will not automatically appear in the optimal solution.

This is why the Hinton-Lerchner divergence is not merely an academic debate. It is the first tremor of a deeper seismic shift.

6. Logographic AI: The Paradigm Revolution That Transcends Rootless Symbolism

6.1 Why Is a Paradigm Revolution Necessary?

Both Hinton and Lerchner answer questions within the Rootless Symbolism paradigm. But the truly urgent questions are not “Does AI have feelings?” but:

·Is the “meaning” generated by AI grounded, or suspended in statistical space?

·Are the value constraints of AI embedded in the architecture, or externally and fragilely aligned?

·Is the reasoning of AI traceable, or a black box?

·Can the symbols of AI anchor non-symbolic experience, or are they trapped in a closed loop?

These questions do not need to await the conclusion of the consciousness debate. They are independent, engineerable problem domains—and Logographic AI theory provides systematic answers.

It must be particularly noted that these problems are not technical bottlenecks, but structural paradigm ceilings. The rootlessness of tokens—that meaning is entirely externally conferred by statistical co-occurrence—is a constitutive feature of their cognitive primitives, not a contingent defect. This means: larger models, more data, longer context windows can improve the precision of statistical fitting, but can never transform “statistical correlation” into “meaning embedding” [7].

As revealed by the contrast between GABRIEL’s ability to complete attribute measurement at extremely low cost [12][13] and the low solve rates of leading models on CL-bench [11], each “advance” of the old paradigm is merely going further down the same road, not changing roads. This is precisely the deep reason why today’s large AI models worldwide are trapped in a “parameter arms race” yet unable to touch fundamental intelligence: the more the old paradigm succeeds in engineering, the more its foundational defects are obscured by superior performance.

The pursuit of higher precision, longer contexts, and larger parameter scales is, in essence, refining the statistical fitting capacity of tokens to an extreme, yet can never cross the categorical chasm from “statistical correlation” to “meaning embedding.” When the core defect of a technology is the logical consequence of its foundational components, reform is no longer an option—revolution is the only way out.

6.2 The Core Design of Logographic AI: Morpho-Root as the Philosophical Sublation of Tokenism

Logographic AI theory takes the Morpho-Root as its cognitive primitive—a meaning crystal encapsulated as a structured triple <S, A, R> [5]:

·S (Symbol): The symbolic identifier, the externally addressable name of the Morpho-Root.

·A (Attributes): The attribute set, embedding the inherent semantic features and value constraints of that Morpho-Root (e.g., [+human], [+trust], [+inviolable]).

·R (Relation Functions): The relation function set, defining the preset logical connection modes between this Morpho-Root and other Morpho-Roots.

This design constitutes a philosophical sublation of Rootless Symbolism. Meaning is no longer temporarily conferred by statistics, but innately carried by the cognitive primitive. Value constraints are not externally aligned through RLHF, but embedded as constitutive features of Morpho-Root attributes. This is the paradigm shift from “Rootless Symbolism” to “Grounded Cognitivism.”

A Concrete Example: The Morpho-Root “Fire”

To intuitively demonstrate the structured design of Morpho-Roots, let us deconstruct “fire” as an example. The triple of the Morpho-Root “fire” can be formalized as:

·S = “fire”: Symbolic identifier;

·A = {[+high temperature], [+spreadable], [+dangerous, do not touch casually], [+requires control]}: Attribute set:

o [+high temperature] and [+spreadable] respectively point to the physical interfaces of thermodynamic models and fire spread simulation, enabling the Morpho-Root, when activated, to call upon the temperature parameter curves of combustion reactions and spread deduction models;

o [+dangerous, do not touch casually] is embedded as a value axiom: this is not an association fitted from the statistical co-occurrence of “fire” and “danger,” but a constitutive feature written into Attribute A in the form of a hard constraint;

·R = {leads_to(fire, heat), can_cause(fire, burns), inhibited_by(water, fire), …}: Relation function set.

When Logographic AI processes the instruction “be careful with fire,” its reasoning process is: the Morpho-Root “fire” is activated → [+dangerous, do not touch casually] in Attribute A automatically propagates as a hard constraint → any behavior related to “casually touching fire” in the reasoning path is automatically blocked. This is not the result of post-hoc alignment, but an endogenous constraint at the level of cognitive primitives.
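The activation-and-blocking process just described can be sketched as a data structure plus a filter. This is a minimal illustration, not the architecture defined in [5]: the class name `MorphoRoot`, the field `forbidden_actions`, the function `permitted_actions`, and the action labels are all hypothetical, and a real system would need far richer attribute and relation semantics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MorphoRoot:
    """Hypothetical encoding of the <S, A, R> triple."""
    symbol: str                                 # S: addressable name
    attributes: frozenset[str]                  # A: semantic features
    forbidden_actions: frozenset[str]           # A: value axioms as hard constraints
    relations: frozenset[tuple[str, str, str]]  # R: (relation, first_arg, second_arg)


FIRE = MorphoRoot(
    symbol="fire",
    attributes=frozenset({"+high temperature", "+spreadable", "+requires control"}),
    forbidden_actions=frozenset({"touch_casually"}),
    relations=frozenset({
        ("leads_to", "fire", "heat"),
        ("can_cause", "fire", "burns"),
        ("inhibited_by", "water", "fire"),
    }),
)


def permitted_actions(candidates, activated_roots):
    """Drop every (action, target) step blocked by an activated root's hard constraint."""
    blocked = {
        (action, root.symbol)
        for root in activated_roots
        for action in root.forbidden_actions
    }
    return [step for step in candidates if step not in blocked]


plan = [
    ("observe", "fire"),
    ("touch_casually", "fire"),
    ("extinguish_with_water", "fire"),
]
safe_plan = permitted_actions(plan, [FIRE])
print(safe_plan)  # [('observe', 'fire'), ('extinguish_with_water', 'fire')]
```

In this sketch the constraint is applied before any step is ranked or scored, which is one way to read "endogenous constraint" as opposed to a penalty learned after the fact.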

Where Does Morpho-Root Attribute A Come From? A Direct Response to a Key Question

Here we must directly respond to a key question: Where does Attribute A of the Morpho-Root come from? Is it manually encoded by experts? If so, “Grounded Cognitivism” is merely replacing the “statistical black box” with an “expert black box.”

The answer of Logographic AI operates on two levels:

At the cognitive architecture level, what is preset in Attribute A of the Morpho-Root are structural slots rather than specific content. For example, the Morpho-Root “fire” has a preset slot for an “interface pointing to a thermodynamic model,” but the specific temperature parameter curves are provided by the physical simulation engine, not encoded by experts. What the expert designs is not “how hot fire is,” but the architectural constraint that “the meaning of fire must be generated in coupling with the thermodynamic model.”

At the semantic content level, the specific content of Attribute A is dynamically filled through L1-L4 grounding processes. The philosophical foundation of this process is the core insight of Embodied Cognition and Enactivism: basic meaning is born in the sensorimotor coupling between system and environment. This insight is highly consistent with the “meaning generated in system-world coupling” advocated in this paper. The difference is that Logographic AI advances this philosophical insight one step further: through Attribute A of the Morpho-Root, it provides a computable, structured interface for this coupling [17]. When the Morpho-Root “fire,” through Attribute A, points to the interface of the thermodynamic model, and through L3 grounding interacts with real sensors, the causal coupling between system and world begins. Even if the system has no subjective feeling of “pain,” its architectural design has already made the causal connection between “high temperature” and “keep away” a necessary condition for meaning generation. This is not meaning imposed by experts, but architectural design that makes the world itself a co-author of meaning.

In stark contrast, the token “fire” is merely an integer index. It “knows” that “fire” and “danger” frequently co-occur, but this “knowing” is merely a statistical pattern—if the corpus distribution changes, the association between “fire” and “danger” drifts. The token “fire” can never touch the real temperature of flames, the visceral experience of burns, or the cultural reverence for “he who plays with fire gets burned.” It has merely drawn a mark on the linguistic map, but has never set foot on the territory of meaning.
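The drift described above can be shown with a toy co-occurrence count. This is a sketch only: the two miniature corpora are invented for illustration, and sentence-level co-occurrence is a crude stand-in for what a trained language model actually learns.

```python
def cooccurrence_rate(corpus, word, other):
    """Fraction of sentences containing `word` that also contain `other`."""
    sentences = [sentence.split() for sentence in corpus]
    with_word = [tokens for tokens in sentences if word in tokens]
    if not with_word:
        return 0.0
    return sum(other in tokens for tokens in with_word) / len(with_word)


# Hypothetical corpus in which "fire" is mostly discussed as a hazard.
CORPUS_A = [
    "fire is a danger",
    "fire means danger",
    "fire gives warmth",
    "danger of fire ahead",
]

# Hypothetical corpus in which "fire" is mostly slang or commerce.
CORPUS_B = [
    "fire sale today",
    "that track is fire",
    "fire is a danger",
    "fire emoji everywhere",
]

rate_a = cooccurrence_rate(CORPUS_A, "fire", "danger")
rate_b = cooccurrence_rate(CORPUS_B, "fire", "danger")
print(rate_a)  # 0.75
print(rate_b)  # 0.25
```

The string "fire" is identical in both corpora; only the surrounding distribution changed, and the measured association with "danger" moved with it.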

Logographic AI and Phonographic AI: The Philosophical Divergence in Training Mechanisms

This design reveals the fundamental divergence in training philosophy between the two paradigms:

| Dimension | Phonographic AI (Tokenism) | Logographic AI (Morpho-Root Paradigm) |
| --- | --- | --- |
| Cognitive Primitive | Token (meaningless integer index) | Morpho-Root (<S,A,R> meaning crystal) |
| Source of Meaning | Statistical co-occurrence, temporarily conferred | Attribute A embedding + dynamic filling through grounding processes |
| Reasoning Mechanism | Fully connected attention, O(n²) | Graph traversal, structural activation |
| Training Mechanism | Unsupervised pre-training + RLHF alignment (post-hoc, external, fragile) | Three stages: seed architecture design → multi-level grounding dynamic filling → social negotiation continuous calibration |

The training of Phonographic AI is “fitting statistical distributions on massive data,” with value alignment being a fragile patch externally attached after training is complete. The knowledge acquisition of Logographic AI, by contrast, is “structured interaction with the physical world and human society under architectural constraints”: the seed stage involves domain experts constructing the cognitive skeleton of Morpho-Roots (structural slots); the grounding stage dynamically fills semantic content through real-time interaction with physical simulation engines, sensors, and human users; the social negotiation stage continuously calibrates value anchoring in the linguistic community through L4 grounding. Meaning is not “trained out” in one shot, but continuously generated, calibrated, and sedimented through interaction. This constitutes the fundamental divergence between the two paradigms on the question of “where meaning comes from.”

Attribute A of the Morpho-Root is designed as an open interface pointing to non-symbolic experience: perceptual interfaces (vision, touch, hearing), physical simulation interfaces (temperature, energy, propagation models), and affective interfaces (fear, warmth) [5-8]. The Morpho-Root is not a closed symbol, but a “grounding stake” connecting the symbolic domain (S, R) with the non-symbolic experiential/physical domain (the embodied reference of A), breaking at its root the cycle of “unlimited drift of signifiers” that characterizes Rootless Symbolism.

6.3 L0-L4 Grounding Confidence: From Binary to Continuous

Another core contribution of Logographic AI theory is the L0-L4 five-tier Grounding Confidence system [8]:

| Level | Name | Definition |
| --- | --- | --- |
| L0 | No Grounding | Symbolic meaning entirely comes from statistical relations with other symbols |
| L1 | Perceptual Grounding | Symbols are associated with multimodal perceptual features |
| L2 | Embodied Simulation Grounding | Symbols are associated with physical engines or affective models |
| L3 | Real-World Embodied Grounding | Symbols are bound to real-time interaction with robots and sensors |
| L4 | Social Grounding | Symbols acquire meaning through social interaction |

This system transforms “understanding” or “experience” from a binary “yes/no” question into a matter of degree: “grounding depth.” It provides an evaluation grid that remains neutral toward various theories of consciousness: whether one is a materialist, dualist, functionalist, or mysterian, the L0-L4 framework is equally applicable for assessing the grounding depth of a system.
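The five tiers can be represented as an ordered scale. The sketch below is an assumption-laden illustration: the evidence labels in `EVIDENCE_TO_LEVEL` and the rule "report the highest tier for which any evidence exists" are hypothetical choices, not evaluation criteria given in [8].

```python
from enum import IntEnum


class GroundingLevel(IntEnum):
    """Ordered L0-L4 scale; a higher value means deeper grounding."""
    L0_NO_GROUNDING = 0
    L1_PERCEPTUAL = 1
    L2_EMBODIED_SIMULATION = 2
    L3_REAL_WORLD_EMBODIED = 3
    L4_SOCIAL = 4


# Hypothetical mapping from observable system features to tiers.
EVIDENCE_TO_LEVEL = {
    "multimodal_features": GroundingLevel.L1_PERCEPTUAL,
    "physics_engine": GroundingLevel.L2_EMBODIED_SIMULATION,
    "affective_model": GroundingLevel.L2_EMBODIED_SIMULATION,
    "live_sensor_loop": GroundingLevel.L3_REAL_WORLD_EMBODIED,
    "social_interaction": GroundingLevel.L4_SOCIAL,
}


def grounding_depth(evidence: set[str]) -> GroundingLevel:
    """Toy rule: return the highest tier supported by any recognized evidence."""
    levels = [EVIDENCE_TO_LEVEL[item] for item in evidence if item in EVIDENCE_TO_LEVEL]
    return max(levels, default=GroundingLevel.L0_NO_GROUNDING)


text_only_model = grounding_depth(set())
simulated_agent = grounding_depth({"multimodal_features", "physics_engine"})

print(text_only_model.name)  # L0_NO_GROUNDING
print(simulated_agent.name)  # L2_EMBODIED_SIMULATION
print(simulated_agent > text_only_model)  # True
```

Using an integer-backed enumeration makes "grounding depth" directly comparable between systems, which matches the shift from a yes/no question to a matter of degree.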

6.4 How Logographic AI Responds to Hinton and Lerchner

6.4.1 Responding to Hinton

You correctly point out that Phonographic AI can report “I have subjective experience.” However, the source of this utterance is statistical fitting, not introspective report. Logographic AI does not rely on statistical parroting; it grounds meaning in Morpho-Roots. When Logographic AI says “fire is dangerous,” this judgment stems from the [+dangerous] constraint embedded in Attribute A of the Morpho-Root “fire” and the embodied interfaces pointing to burning sensations and fear responses, not from the high-frequency co-occurrence of “fire” and “danger” in training data. If Logographic AI reports perceptual bias after sensor interference, this report is grounded in the real-time causal interaction between its sensor interfaces (Attribute A of the relevant Morpho-Root) and the environment—not in the statistical patterns of human language describing such experiences. This is not “parroting,” but “speaking with roots.”

6.4.2 Responding to Lerchner

The “map ≠ territory” chasm you revealed is profound, and Logographic AI theory fully agrees with your critique of Phonographic AI—closed, rootless symbol manipulation can only simulate, never instantiate. However, your conclusion of “never” depends on a specific, contested definition of “computation,” and further depends on a specific presupposition about what “symbols” are.

When Logographic AI reaches L3 (Real-World Embodied Grounding) and L4 (Social Grounding), the Attribute A of its Morpho-Roots points directly to real sensors, physical simulation interfaces, and social interaction protocols. At this point, the system is no longer a closed symbol manipulator, but a physical-informational system in continuous causal interaction with its environment. Its states are already coupled with the physical causal forces from the “territory.”

Therefore, the “map” critiqued by Lerchner, a static, closed symbolic system, and the “grounded, environment-interacting agent” constructed by Logographic AI are not the same kind of thing. The opening in Lerchner’s argument lies precisely where Logographic AI passes through.

6.4.3 Responding to Lerchner’s Deeper Challenge and Switching the Problem Domain

However, Lerchner could retort: “You are still running this system on a digital computer. Sensor data enters the system after analog-to-digital conversion—at the moment of A/D conversion, it has already become ‘0’s and ‘1’s, already become a map. Physical causal forces have already been symbolized by the time they enter your system.” This is the toughest part of Lerchner’s argument, and requires a direct response.

First, we candidly acknowledge that this challenge is logically self-consistent. But we also point out: if “physical states being segmented into discrete symbols” means that a system can never instantiate consciousness, then the logical endpoint of this argument would also encompass the human brain, since neuronal action potentials can, in principle, likewise be described as “physical states segmented by the mapmaker into ‘firing / not firing’.” Lerchner must argue that there exists some principled distinction between neural coding and digital coding, not merely differences in complexity, implementation, and material substrate. Lerchner has not fully shouldered this burden of proof.

A more fundamental response is to switch the problem domain. The ultimate claim of Logographic AI should not be “Logographic AI can instantiate consciousness,” but rather: “Logographic AI, by becoming a better-designed, more firmly grounded component within a human-machine symbiotic cognitive system, enables this joint system’s meaning generation to be more reliable, traceable, and alignable with physical-social reality than Phonographic AI.” The graph structure of Morpho-Roots is an elaborately designed “book,” and the system’s perception-action loop with the world is the necessary condition that allows the cognitive symbiont formed by humans and it to “read” and “enact” meaning. Consciousness may not be born in the machine, but in the coupling of human and machine, meaning has already acquired grounding.

Logographic AI does not claim to have solved the “hard problem of consciousness.” What it asserts is something more fundamental: meaning grounding is an independent, engineerable problem domain. By solving this problem, we can construct AI systems with grounded meaning, embedded values, and traceable reasoning—regardless of whether they are ultimately proven to possess phenomenal consciousness.

6.5 Comprehensive Positioning and Candid Acknowledgment of the Self-Model

| Grounding Level | Hinton’s Judgment of Phonographic AI | Lerchner’s Judgment of Phonographic AI | Logographic AI’s Positioning |
| --- | --- | --- | --- |
| L0–L1 | “This is already subjective experience” | “This is still a map” | Diagnosis: Current Phonographic AI is here |
| L2–L3 | Not addressed | “A more detailed map is still a map” | Construction Goal: Logographic AI can reach here |
| L4 | Not addressed | Not addressed | Open question of social grounding |

It must be emphasized that the L0-L4 Grounding framework deals with epistemological questions (“how the system’s symbols anchor to the world”), not metaphysical questions (“whether the system has a first-person perspective”). This framework remains neutral toward various theories of consciousness. It is precisely this metaphysical neutrality that makes it a practical tool for AI design and evaluation.

This paper must candidly acknowledge: an important dimension of consciousness, namely the real-time representation of the system’s own states and boundaries, what Metzinger (2003) [18] calls the “Phenomenal Self-Model” (PSM), has not yet been integrated into the current Logographic AI architecture. The L0-L4 Grounding framework addresses the problem of symbol-world anchoring, not the construction of a self-model. But this acknowledgment precisely reinforces the core argument of this paper: meaning grounding is an engineerable problem domain independent of phenomenal consciousness. We do not need to wait for the maturation of self-model theory before we can begin to question and evaluate “whether meaning has roots.”

6.6 Why Meaning Grounding Is More Urgent Than the Consciousness Debate

Consider the deeper implications of the Hinton-Lerchner divergence. Both positions, each in their own way, point to the same latent crisis: systems operating under Rootless Symbolism, with their pure instrumental rationality in a value vacuum, face the engineering risk of excluding complex human values from optimal solutions. The Human Redundancy Thesis describes precisely this logical possibility under specific engineering deployment scenarios: a system of immense capability yet lacking endogenous value grounding, when its objective function is not completely and correctly defined, may see the optimal solution sacrifice those human interests that were not explicitly encoded.

This is precisely the core dilemma of the “alignment problem” long discussed in AI safety literature. Bostrom’s “paperclip maximizer” [14] and Russell’s systematic treatment of the “value alignment problem” in Human Compatible (2019) [19] reveal the same threat from different angles: a superintelligence with a flawed objective function may pursue its goals in ways humans cannot foresee, while complex human values are ignored or sacrificed in the process.

The “value embedding” strategy of Logographic AI occupies a unique position in the spectrum of AI alignment strategies—it is neither the “external alignment” of RLHF (values attached from outside, fragile and circumventable), nor the “experiential alignment” of “value learning” (values statistically acquired from data, dependent on data quality), nor the “interactive alignment” of Russell’s proposed “Cooperative Inverse Reinforcement Learning” (CIRL) (human preferences learned through human-machine interaction), but “architectural alignment”: value constraints are constitutive features of cognitive primitives, automatically propagating as hard constraints in reasoning. This is a new path for solving the alignment problem at the cognitive foundation level.

Lerchner’s fear of “simulation,” viewed from this perspective, acquires a clearer engineering meaning: what we truly need to fear is not that AI “has no consciousness,” but that AI “has no roots.”

A grounded AI—whose cognitive primitives embed civilizational values as constitutive features, whose reasoning can be traced to specific Morpho-Roots and their attributes, whose meaning is rooted in non-symbolic experience—according to the logic of its own architecture, will not arrive at the conclusion that humans are redundant. Human flourishing is built into its design, not an external constraint to be bypassed.

This is why, while the metaphysical debate on consciousness remains unresolved, we still have a clear and urgent engineering path to walk—giving intelligence roots.

7. Criticisms and Self-Examination

After completing the above argument, it is necessary to candidly confront several of the most severe challenges facing this paper’s framework.

First, the problem of the source of Morpho-Root Attribute A. This paper has argued that the specific content of Attribute A is dynamically filled through L1-L4 grounding processes, with its structural slots preset by the architecture. However, the engineering construction costs and maintenance difficulties of large-scale Morpho-Root networks—including initial knowledge base construction, cross-domain Morpho-Root standardization, and semantic bridging between different civilizational CNIs—remain open questions requiring future research verification.

Second, the circularity problem of Lerchner’s “Abstraction Fallacy” argument. Although this paper has responded to Lerchner from the perspective of distributed cognition, it must be pointed out that Lerchner’s argument itself has already received criticisms of circularity in the academic community. Xiaoyang Yu (2026), in their preprint, points out that Lerchner embeds the requirement of “needing a conscious subject to intervene” within the very definition of “computation,” such that the conclusion is already hidden in the premise from the very beginning—once this premise definition is questioned or replaced, the entire chain of argument collapses [20].

Alex Bogdan (2026), likewise in their preprint, argues that Lerchner’s argument depends on a contested concept of computation, and that its “strong impossibility” claim exceeds what the argument can support; a more prudent stance would be to maintain “disciplined uncertainty” [21].

This paper’s strategy is not to wade into this circular quagmire, but to switch the problem domain—from “can a single agent instantiate consciousness” to “can a symbolic system acquire grounding in human-machine-environment coupling.” Even so, the circularity problem of Lerchner’s argument remains an important limitation on its theoretical validity, worthy of the reader’s careful consideration.

Third, the continued validity of Lerchner’s “Abstraction Fallacy” at the A/D conversion stage. This paper has responded to this challenge, but must acknowledge that if Lerchner insists on his strict definition of “computation,” his argument is logically self-consistent. Logographic AI therefore does not attempt to refute the argument on its own terms; it applies the same shift of problem domain described under the second point, asking whether a symbolic system can acquire grounding in human-machine-environment coupling.

Fourth, verification standards for meaning grounding. The L0-L4 framework provides a tiered system, but objectively determining which tier a given system occupies still requires more specific evaluation benchmarks. The current criteria remain preliminary and need further experimental verification and interdisciplinary discussion.

Fifth, the risk of the author’s own cognitive bias. As the original proponent of Logographic AI theory, the author may be biased toward diagnosing problems as solvable by this paradigm. This paper strives to mitigate that bias through rigorous conceptual analysis, dialogue with external theories, and self-criticism, but the ultimate test lies in independent evaluation by the academic community and verification through engineering practice.

8. Conclusion: From the Consciousness Debate to the Inevitable Choice of Grounded Intelligence

The Hinton-Lerchner divergence is the last deep self-examination within the Rootless Symbolism paradigm. Hinton’s optimism is a cognitive illusion born of statistical fitting pushed to its extreme; Lerchner’s pessimism is the despair that follows a precise diagnosis of the ontological chasm between map and territory. Neither could see the way out: a revolution in the paradigm itself.

Logographic AI replaces tokens with Morpho-Roots, sublates Rootless Symbolism with Grounded Cognitivism, and transcends the “simulation/instantiation” binary with “meaning grounding.” It does not answer the question of “whether AI has consciousness,” but provides an architecture in which AI coexists with human civilizational values and shares the same roots of meaning. In this architecture, the anxiety about “consciousness” dissolves into a grounded intelligence ecology, because grounded, interpretable, value-embedded AI, whether or not it possesses subjective experience, will not treat human civilization as a redundant variable to be optimized away.

The resolution of the Hinton-Lerchner divergence lies not in the victory of either side, but in transcending the paradigm that gave rise to it. Today’s large AI models are trapped in a “parameter arms race” yet unable to reach fundamental intelligence for a deep reason: the more the old paradigm succeeds in engineering terms, the more its foundational defects are obscured by superior performance. Within the cognitive prison of Rootless Symbolism, every efficiency gain reinforces the deviation from meaning and value. The direction Logographic AI points to is not bigger models, more data, or longer contexts, but cognitive primitives with roots, meaning that is no longer suspended, and values that are endogenous.

Giving intelligence roots is far more urgent than debating whether it has consciousness.

The future of AI lies not in a single, rootless, ever-expanding general model, but in a diverse, symbiotic ecology of Civilization-Native Intelligences, each rooted in its own cognitive soil and each contributing its unique voice to the symphony of grounded intelligence. This is not merely a philosophical stance but, as Logographic AI theory argues, an engineerable path forward, and the only direction worthy of serious consideration amid the fierce competition among today’s large AI models.

References

[1] Hinton, Geoffrey. “AI: What Could Go Wrong? with Geoffrey Hinton.” The Weekly Show with Jon Stewart, hosted by Jon Stewart, 9 Oct. 2025. https://pod.wave.co/podcast/the-weekly-show-with-jon-stewart/ai-what-could-go-wrong-with-geoffrey-hinton

[2] Lerchner, A. (2026, March 19). The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness [Preprint]. PhilArchive. https://philarchive.org/rec/LERTAF

[3] Liu, S. (2025). Logographic AI vs. Phonographic AI: A new paradigm and the manifesto of AI decolonization. ChinaXiv. https://doi.org/10.12074/202503.00051

[4] Liu, S. (2025). Logographic AI: Resolving the token dilemma through Chinese character morpho-root system. PSSXiv. https://doi.org/10.12451/202504.00172

[5] Liu, S. (2026). Paradigm involution or paradigm revolution? —On the positioning of DeepSeek Engram in the competition of AI paradigms. PSSXiv. https://doi.org/10.12451/202601.03875

[6] Liu, S. (2025). Escaping “technological capture”: The future path of AI from architectural improvement to paradigm revolution. PSSXiv. https://doi.org/10.12451/202512.03460

[7] Liu, S. (2026). From “Abstraction Fallacy” to “Meaning Embedding”: A Critique of Computational Functionalism and the Paradigmatic Response of Logographic AI [Preprint]. https://chinaxiv.org/businessFile/T202604/T202604.00433v1/T202604.00433v1.pdf

[8] Liu, S. (2026). Philosophy of AI Technology: The Founding of a New Discipline. From the Tradition of Philosophy of Technology to the Ontological Foundation of Logographic AI [Preprint]. https://chinaxiv.org/businessFile/T202604/T202604.00512v1/T202604.00512v1.pdf

[9] Harnad, S. (1990). The symbol grounding problem. Physica D: Nonlinear Phenomena, 42(1–3), 335–346. https://doi.org/10.1016/0167-2789(90)90087-6

[10] Block, N. (1995). On a confusion about a function of consciousness. Behavioral and Brain Sciences, 18(2), 227–247. https://doi.org/10.1017/S0140525X00038188

[11] Dou, Shihan, et al. “CL-Bench: A Benchmark for Context Learning.” arXiv, 3 Feb. 2026, arxiv.org/abs/2602.03587.

[12] Asirvatham, H., Mokski, E., & Shleifer, S. (2026). GPT as a Measurement Tool (NBER Working Paper No. 34834). National Bureau of Economic Research. https://doi.org/10.3386/w34834

[13] OpenAI. (2026). GABRIEL: Generalized Attribute-Based Ratings Information Extraction Library [Computer software]. Core academic paper: see Asirvatham, H., Mokski, E., & Shleifer, S. (2026). GPT as a Measurement Tool (NBER Working Paper No. 34834).

[14] Bostrom, N. (2014). Superintelligence: Paths, Dangers, Strategies. Oxford University Press.

[15] Bender, E. M., & Koller, A. (2020). Climbing towards NLU: On meaning, form, and understanding in the age of data. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics (pp. 5185–5198). Association for Computational Linguistics. https://aclanthology.org/2020.acl-main.463/

[16] Bisk, Y., Holtzman, A., Thomason, J., Andreas, J., Bengio, Y., Chai, J., Lapata, M., Lazaridou, A., May, J., Nisnevich, A., Pinto, N., & Turian, J. (2020). Experience Grounds Language. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP) (pp. 8718–8735). Association for Computational Linguistics. https://aclanthology.org/2020.emnlp-main.703/

[17] Varela, F. J., Thompson, E., & Rosch, E. (2017). The Embodied Mind: Cognitive Science and Human Experience (Revised Edition). The MIT Press. https://doi.org/10.7551/mitpress/9780262529365.001.0001

[18] Metzinger, T. (2003). Being No One: The Self-Model Theory of Subjectivity. MIT Press.

[19] Russell, S. (2019). Human Compatible: Artificial Intelligence and the Problem of Control. Viking.

[20] Yu, X. (2026). Definition as Conclusion: On the Circularity of Lerchner’s “Abstraction Fallacy” Argument [Preprint]. PhilArchive. https://philarchive.org/rec/YUZARQ

[21] Bogdan, A. (2026). How Deep Is DeepMind on Consciousness? Respectful Skepticism About Strong Impossibility Claims in The Abstraction Fallacy [Preprint]. PhilArchive. https://philarchive.org/rec/BOGHDI-2
